Log–log plot

{{Short description|2D graphic with logarithmic scales on both axes}} {{More citations needed|log graph papers and their use|find=https://www.mathnstuff.com/math/spoken/here/2class/340/loggraf.htm|date=August 2025|name=Agnes (A2) Azzolino}} [[Image:LogLog exponentials.svg|class=skin-invert-image|thumb|A log–log plot of ''y'' = ''x'' (blue), ''y'' = ''x''<sup>2</sup> (green), and ''y'' = ''x''<sup>3</sup> (red). Note the logarithmic scale markings on each of the axes, and that the log ''x'' and log ''y'' axes (where the logarithms are 0) are where ''x'' and ''y'' themselves are 1.]]

[[File:Comparison of simple power law curves in original and log-log scale.png|class=skin-invert-image|thumb|Comparison of linear, concave, and convex functions when plotted using a linear scale (left) or a log scale (right).]]

In [[science]] and [[engineering]], a '''log–log graph''' or '''log–log plot''' is a two-dimensional graph of numerical data that uses [[logarithmic scale]]s on both the horizontal and vertical axes. [[Exponentiation#Power_functions|Power functions]] – relationships of the form y=ax^k – appear as straight lines in a log–log graph, with the exponent corresponding to the slope, and the coefficient corresponding to the intercept. These graphs are therefore useful for recognizing such relationships and [[estimating parameters]]. Any base can be used for the logarithm, though base 10 (common logarithms) is used most often.

== Relation with monomials ==
Given a monomial equation y=ax^k, taking the logarithm of the equation (with any base) yields: \log y = k \log x + \log a.

Setting X = \log x and Y = \log y, which corresponds to using a log–log graph, yields the equation Y = mX + b

where ''m'' = ''k'' is the slope of the line ([[Grade (slope)|gradient]]) and ''b'' = log ''a'' is the intercept on the (log ''y'')-axis, that is, the value of log ''y'' where log ''x'' = 0. Reversing the logs, ''a'' is the ''y'' value corresponding to ''x'' = 1.<ref>{{Cite web |last=Bourne |first=Murray |title=7. Log-Log and Semi-log Graphs |url=https://www.intmath.com/exponential-logarithmic-functions/7-graphs-log-semilog.php |access-date=2024-10-15 |website=www.intmath.com |language=en-us}}</ref>
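
The relation above can be checked numerically. The following is a minimal sketch in Python, assuming illustrative values ''a'' = 3 and ''k'' = 2.5 and a hypothetical helper named <code>loglog_points</code> (neither comes from the cited source). It computes the points (log ''x'', log ''y'') and confirms that consecutive points share the slope ''k'' and that the intercept equals log ''a''.

```python
import math

def loglog_points(a, k, xs):
    """Return the points (log10 x, log10 y) for the monomial y = a * x**k."""
    return [(math.log10(x), math.log10(a * x**k)) for x in xs]

# Illustrative (assumed) parameters of the monomial y = a * x**k.
a, k = 3.0, 2.5
points = loglog_points(a, k, [1.0, 10.0, 100.0, 1000.0])

# Slope between each pair of consecutive points in log-log coordinates.
slopes = [
    (y2 - y1) / (x2 - x1)
    for (x1, y1), (x2, y2) in zip(points, points[1:])
]

# The first point has x = 1, so log10 x = 0 and its Y value is the intercept b.
intercept = points[0][1]

print(slopes)          # every entry is approximately k
print(10**intercept)   # approximately a
```

Base 10 is used here only for convenience; any other base gives the same slope and shifts only the numerical value of the intercept.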

== Equations ==
The equation for a line on a log–log scale is \log_{10}F(x) = m \log_{10}x + b, or equivalently F(x) = x^m\cdot10^b, where ''m'' is the slope and ''b'' is the intercept point on the log plot.

=== Slope of a log–log plot ===
[[Image:Slope of log-log plot.PNG|class=skin-invert-image|thumbnail|250px|Finding the slope of a log–log plot using ratios]]
To find the slope of the plot, two points are selected on the ''x''-axis, say ''x''<sub>1</sub> and ''x''<sub>2</sub>. Applying the equation of the line at each point gives \log[F (x_1)] = m \log (x_1) + b, and \log[F (x_2)] = m \log(x_2) + b. The slope ''m'' is found by taking the difference: m = \frac { \log (F_2) - \log (F_1)} { \log(x_2) - \log(x_1) } = \frac {\log (F_2/F_1)}{\log(x_2/x_1)}, where ''F''<sub>1</sub> is shorthand for ''F''(''x''<sub>1</sub>) and ''F''<sub>2</sub> is shorthand for ''F''(''x''<sub>2</sub>). The figure at right illustrates the formula. Notice that the slope in the example of the figure is ''negative''. The formula also provides a negative slope, as can be seen from the following property of the logarithm: \log(x_1/x_2) = -\log(x_2/x_1).
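
The ratio form of the slope formula translates directly into code. The sketch below is illustrative only: the function name <code>loglog_slope</code> and the sample points are assumptions, chosen so that ''F'' falls by a factor of 100 while ''x'' rises by a factor of 100.

```python
import math

def loglog_slope(x1, f1, x2, f2):
    """Slope m of the straight line through (x1, f1) and (x2, f2) on a log-log plot.

    Uses m = log(f2 / f1) / log(x2 / x1); the base of the logarithm cancels.
    """
    if min(x1, f1, x2, f2) <= 0:
        raise ValueError("all coordinates must be positive on a log-log plot")
    if x1 == x2:
        raise ValueError("x1 and x2 must differ")
    return math.log(f2 / f1) / math.log(x2 / x1)

# Assumed example points: F drops 100-fold while x grows 100-fold.
m = loglog_slope(10.0, 200.0, 1000.0, 2.0)

# Swapping the two points negates numerator and denominator alike,
# so the slope is unchanged.
m_swapped = loglog_slope(1000.0, 2.0, 10.0, 200.0)

print(m, m_swapped)  # both approximately -1
```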

=== Finding the function from the log–log plot ===
The above procedure is now reversed to find the form of the function ''F''(''x'') from its (assumed) known log–log plot. To find the function ''F'', pick some ''fixed point'' (''x''<sub>0</sub>, ''F''<sub>0</sub>), where ''F''<sub>0</sub> is shorthand for ''F''(''x''<sub>0</sub>), somewhere on the straight line in the above graph, and some other ''arbitrary point'' (''x''<sub>1</sub>, ''F''<sub>1</sub>) on the same graph. Then from the slope formula above: m = \frac {\log (F_1 / F_0)}{\log(x_1 / x_0)} which leads to \log(F_1 / F_0) = m \log(x_1 / x_0) = \log[(x_1 / x_0)^m ]. Notice that 10<sup>log<sub>10</sub>(''F''<sub>1</sub>)</sup> = ''F''<sub>1</sub>. Therefore, the logs can be inverted to find: \frac{F_1}{F_0} = \left(\frac{x_1}{x_0}\right)^m or, writing ''x'' for the arbitrary point ''x''<sub>1</sub> and ''F''(''x'') for ''F''<sub>1</sub>, F(x) = \frac{F_0}{x_0^m} \, x^m, which means that F(x) = \mathrm{constant}\cdot x^m. In other words, ''F'' is proportional to ''x'' to the power of the slope of the straight line of its log–log graph.

Specifically, a straight line on a log–log plot containing points (''x''<sub>0</sub>, ''F''<sub>0</sub>) and (''x''<sub>1</sub>, ''F''<sub>1</sub>) will have the function: F(x) = {F_0}\left(\frac{x}{x_0} \right)^\frac {\log (F_1/F_0)}{\log(x_1/x_0)}. The converse is also true: any function of the form F(x) = \mathrm{constant} \cdot x^m will have a straight line as its log–log graph representation, where the slope of the line is ''m''.
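
As a worked illustration of this reconstruction, the following Python sketch builds ''F'' from two points read off a straight log–log line. The helper name <code>power_law_through</code> and the sample points, which lie on ''F''(''x'') = 3''x''<sup>2</sup>, are assumptions made for the example.

```python
import math

def power_law_through(x0, f0, x1, f1):
    """Return (F, m, constant) for the power law through (x0, f0) and (x1, f1).

    m is the log-log slope, constant = f0 / x0**m, and
    F(x) = f0 * (x / x0)**m = constant * x**m.
    """
    m = math.log(f1 / f0) / math.log(x1 / x0)
    constant = f0 / x0**m

    def F(x):
        return f0 * (x / x0) ** m

    return F, m, constant

# Assumed sample points taken from F(x) = 3 * x**2.
F, m, constant = power_law_through(2.0, 12.0, 8.0, 192.0)

print(m)         # approximately 2
print(constant)  # approximately 3
print(F(5.0))    # approximately 75
```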

=== Finding the area under a straight-line segment of log–log plot ===
To calculate the area under a continuous, straight-line segment of a log–log plot (or to estimate the area under an almost-straight line), take the function defined previously, F(x) = \mathrm{constant}\cdot x^m, and integrate it. Because the segment has two defined endpoints, this is a definite integral, and for ''m'' ≠ −1 the area ''A'' under the plot takes the form A = \int_{x_0}^{x_1} F(x) \, dx = \left.\frac{\mathrm{constant}}{m+1} \cdot x^{m+1}\right|_{x_0}^{x_1}

Rearranging the original equation and plugging in the fixed point values, it is found that \mathrm{constant} = \frac{F_0}{x_0^m}

Substituting back into the integral gives, for ''A'' over ''x''<sub>0</sub> to ''x''<sub>1</sub>,

\begin{align}
A &= \frac{F_0/x_0^m}{m+1}\cdot (x_1^{m+1}-x_0^{m+1}) \\[1.2ex]
\log A &= \log \left[\frac{F_0 / x_0^m}{m+1} \cdot (x_1^{m+1}-x_0^{m+1})\right] \\
&= \log \frac{F_0}{m+1} + \log \frac{1}{x_0^m} + \log (x_1^{m+1}-x_0^{m+1}) \\
&= \log \frac{F_0}{m+1} + \log \left(\frac{x_1^{m+1} - x_0^{m+1}}{x_0^m}\right) \\
&= \log \frac{F_0}{m+1} + \log \left(\frac{x_1^m}{x_0^m}\cdot x_1 - \frac{x_0^{m+1}}{x_0^m}\right)
\end{align}

Therefore, A = \frac{F_0}{m+1} \cdot \left[x_1 \cdot \left(\frac {x_1}{x_0}\right)^m - x_0\right]

For ''m'' = −1, the integral becomes
\begin{align}
A_{(m=-1)} &= \int_{x_0}^{x_1} F(x) \, dx = \int_{x_0}^{x_1} \frac{\mathrm{constant}}{x} \, dx = \frac{F_0}{x_0^{-1}} \int_{x_0}^{x_1} \frac{dx}{x} = F_0 \cdot x_0 \cdot \ln x \Big|_{x_0}^{x_1} \\
A_{(m=-1)} &= F_0 \cdot x_0 \cdot \ln \frac{x_1}{x_0}
\end{align}
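
Both area formulas can be combined in one routine. The sketch below is a minimal illustration, not a reference implementation: the function name <code>loglog_segment_area</code> is hypothetical, the slope is computed from the two endpoints of the segment, and the switch to the logarithmic formula uses a floating-point closeness test as an assumed way of detecting ''m'' = −1.

```python
import math

def loglog_segment_area(x0, f0, x1, f1):
    """Area under F(x) = f0 * (x / x0)**m between x0 and x1.

    The segment is the straight log-log line through (x0, f0) and (x1, f1).
    """
    m = math.log(f1 / f0) / math.log(x1 / x0)
    if math.isclose(m, -1.0):
        # Special case m = -1: A = F0 * x0 * ln(x1 / x0)
        return f0 * x0 * math.log(x1 / x0)
    # General case: A = F0 / (m + 1) * (x1 * (x1 / x0)**m - x0)
    return f0 / (m + 1) * (x1 * (x1 / x0) ** m - x0)

# Assumed check 1: F(x) = 3 * x**2 on [1, 2]; the exact integral is 2**3 - 1**3 = 7.
area_quadratic = loglog_segment_area(1.0, 3.0, 2.0, 12.0)

# Assumed check 2: F(x) = 5 / x on [1, e]; the exact integral is 5 * ln(e) = 5.
area_reciprocal = loglog_segment_area(1.0, 5.0, math.e, 5.0 / math.e)

print(area_quadratic, area_reciprocal)  # approximately 7 and 5
```

For slopes very close to, but not equal to, −1 the general formula divides by a small ''m'' + 1 and can lose precision, so a production routine may need a wider tolerance or a series expansion.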

== Log-log linear regression models ==

Log–log plots are often used for visualizing log–log linear regression models with (roughly) [[log-normal]] or [[Log-logistic distribution|log-logistic]] errors. In such models, after log-transforming the [[dependent and independent variables]], a [[simple linear regression]] model can be fitted, with the errors becoming [[Homoscedasticity|homoscedastic]]. This model is useful when dealing with data that exhibits [[power law|power-law]] growth or decay, while the size of the errors grows as the independent variable grows (i.e., [[heteroscedasticity|heteroscedastic]] error).

As above, in a log–log linear model the relationship between the variables is expressed as a power law. A given percentage change in the independent variable results in a constant percentage change in the dependent variable: a 1% increase in ''x'' changes ''y'' by approximately ''b''%. The model is expressed as:

:y = a \cdot x^b \cdot e^\epsilon

Taking the logarithm of both sides, we get:

:\log(y) = \log(a) + b \cdot \log(x) + \epsilon

This is a [[linear equation]] in the logarithms of x and y, with \log(a) as the intercept and b as the slope. Here \epsilon \sim \textrm{Normal}(\mu, \sigma^2), so that e^\epsilon \sim \textrm{Log-Normal}(\mu, \sigma^2).
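
A minimal Python sketch of fitting this model by ordinary least squares on the log-transformed data is given below. The simulated parameters (''a'' = 2, ''b'' = 0.5, σ = 0.3, μ = 0), the sample size, the random seed, and the function name <code>fit_loglog</code> are all assumptions made for illustration. With a nonzero μ, the fitted intercept would estimate log ''a'' + μ rather than log ''a''.

```python
import math
import random

def fit_loglog(xs, ys):
    """Least-squares fit of log y = log a + b * log x; returns (a, b)."""
    X = [math.log(x) for x in xs]
    Y = [math.log(y) for y in ys]
    n = len(X)
    mean_x, mean_y = sum(X) / n, sum(Y) / n
    sxx = sum((u - mean_x) ** 2 for u in X)
    sxy = sum((u - mean_x) * (v - mean_y) for u, v in zip(X, Y))
    b = sxy / sxx                          # slope in log-log coordinates
    a = math.exp(mean_y - b * mean_x)      # back-transformed intercept
    return a, b

# Simulated data (assumed parameters): y = a * x**b * exp(eps), eps ~ Normal(0, sigma^2).
rng = random.Random(0)
true_a, true_b, sigma = 2.0, 0.5, 0.3
xs = [rng.uniform(1.0, 100.0) for _ in range(5000)]
ys = [true_a * x**true_b * math.exp(rng.gauss(0.0, sigma)) for x in xs]

a_hat, b_hat = fit_loglog(xs, ys)
print(a_hat, b_hat)  # estimates close to the assumed true_a and true_b
```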

[[File:Visualizing Loglog Normal Data.png|class=skin-invert-image|thumb|Figure 1: Visualizing Loglog Normal Data]]

Figure 1 illustrates how this looks. It presents two plots generated using 10,000 simulated points. The left plot, titled 'Concave Line with Log-Normal Noise', displays a [[scatter plot]] of the observed data (y) against the independent variable (x). The red line represents the 'Median line', while the blue line is the 'Mean line'. This plot illustrates a dataset with a power-law relationship between the variables, represented by a concave line.

When both variables are log-transformed, as shown in the right plot of Figure 1, titled 'Log-Log Linear Line with Normal Noise', the relationship becomes linear. This plot also displays a scatter plot of the observed data against the independent variable, but with both axes on a logarithmic scale. Here, the mean and median lines coincide (red line). This transformation allows a [[simple linear regression]] model to be fitted; transformed back to the original scale, the fitted line corresponds to the median line.
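
The gap between the mean and median lines on the original scale follows from a standard property of the log-normal distribution: if ε ~ Normal(0, σ<sup>2</sup>), then the median of ''e''<sup>ε</sup> is 1 while its mean is exp(σ<sup>2</sup>/2). The Python sketch below checks this by simulation at a single ''x'' value; the parameter values, seed, and sample size are assumptions, not those used to produce Figure 1.

```python
import math
import random
import statistics

# Assumed illustrative parameters; eps ~ Normal(0, sigma^2).
a, b, sigma, x = 2.0, 0.5, 0.8, 25.0

rng = random.Random(1)
samples = [a * x**b * math.exp(rng.gauss(0.0, sigma)) for _ in range(100_000)]

# Theoretical conditional median and mean of y at this x.
median_line = a * x**b                              # a * x**b
mean_line = median_line * math.exp(sigma**2 / 2)    # shifted up by exp(sigma^2 / 2)

sample_median = statistics.median(samples)
sample_mean = sum(samples) / len(samples)

print(median_line, sample_median)  # the two values should be close
print(mean_line, sample_mean)      # the two values should be close
```

Because the factor exp(σ<sup>2</sup>/2) does not depend on ''x'', the mean line is parallel to the median line on a log–log plot and lies above it.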

[[File:Sliding Window Error Metrics Loglog Normal Data.png|class=skin-invert-image|thumb|Figure 2: Sliding Window Error Metrics Loglog Normal Data]]

The transformation from the left plot to the right plot in Figure 1 also demonstrates the effect of the log transformation on the distribution of noise in the data. In the left plot, the noise appears to follow a [[log-normal distribution]], which is right-skewed and can be difficult to work with. In the right plot, after the log transformation, the noise appears to follow a [[normal distribution]], which is easier to reason about and model.

This normalization of noise is further analyzed in Figure 2, which presents a line plot of three error metrics, [[Mean Absolute Error]] (MAE), [[Root Mean Square Error]] (RMSE), and [[Mean Absolute Logarithmic Error]] (MALE), calculated over a sliding window of size 28 along the x-axis. The y-axis gives the error, plotted against the independent variable (x). Each error metric is represented by a different color, with a smoothed line overlaying the original line (because the data are simulated, the raw error estimates are somewhat jumpy). These error metrics provide a measure of the noise as it varies across different x values.

Log-log linear models are widely used in various fields, including economics, biology, and physics, where many phenomena exhibit power-law behavior. They are also useful in [[regression analysis]] when dealing with heteroscedastic data, as the log transformation can help to stabilize the variance.

== Applications ==
[[File:2010- Decreasing renewable energy costs versus deployment.svg|class=skin-invert-image|thumb|upright=1.3|A log-log plot condensing information that spans more than one order of magnitude along both axes]]
These graphs are useful when the parameters ''a'' and ''b'' need to be estimated from numerical data. Specifications such as this are used frequently in [[economics]].

One example is the estimation of [[money demand]] functions based on [[Money demand#Inventory theory|inventory theory]], in which it can be assumed that money demand at time ''t'' is given by M_t = AR_t^bY_t^cU_t, where ''M'' is the real quantity of [[money]] held by the public, ''R'' is the [[rate of return]] on an alternative, higher yielding asset in excess of that on money, ''Y'' is the public's [[real income]], ''U'' is an error term assumed to be [[log-normal distribution|lognormally distributed]], ''A'' is a [[scale parameter]] to be estimated, and ''b'' and ''c'' are [[Elasticity (economics)|elasticity]] parameters to be estimated. Taking logs yields m_t = a + br_t + cy_t + u_t, where ''m'' = log ''M'', ''a'' = log ''A'', ''r'' = log ''R'', ''y'' = log ''Y'', and ''u'' = log ''U'' with ''u'' being [[normal distribution|normally distributed]]. This equation can be estimated using [[ordinary least squares]].

Another economic example is the estimation of a firm's [[Cobb–Douglas production function]], which is the right side of the equation Q_t=AN_t^{\alpha}K_t^{\beta}U_t, in which ''Q'' is the quantity of output that can be produced per month, ''N'' is the number of hours of labor employed in production per month, ''K'' is the number of hours of physical capital utilized per month, ''U'' is an error term assumed to be lognormally distributed, and ''A'', \alpha, and \beta are parameters to be estimated. Taking logs gives the linear regression equation q_t = a + \alpha n_t + \beta k_t + u_t where ''q'' = log ''Q'', ''a'' = log ''A'', ''n'' = log ''N'', ''k'' = log ''K'', and ''u'' = log ''U''.
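
The log-linearized Cobb–Douglas equation has two regressors, so it can be estimated by solving the 3 × 3 normal equations of ordinary least squares. The following Python sketch does this on simulated data. The parameter values (''A'' = 1.5, α = 0.7, β = 0.3), the noise level, the input ranges, and the helper names <code>solve_linear</code> and <code>fit_cobb_douglas</code> are assumptions for illustration; in practice a statistics package would normally be used instead.

```python
import math
import random

def solve_linear(A, rhs):
    """Solve A z = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    M = [row[:] + [r] for row, r in zip(A, rhs)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    z = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        s = sum(M[i][j] * z[j] for j in range(i + 1, n))
        z[i] = (M[i][n] - s) / M[i][i]
    return z

def fit_cobb_douglas(N, K, Q):
    """OLS fit of log Q = a + alpha * log N + beta * log K; returns (A, alpha, beta)."""
    rows = [[1.0, math.log(n), math.log(k)] for n, k in zip(N, K)]
    q = [math.log(v) for v in Q]
    # Normal equations: (X^T X) z = X^T q
    XtX = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    Xtq = [sum(r[i] * v for r, v in zip(rows, q)) for i in range(3)]
    a, alpha, beta = solve_linear(XtX, Xtq)
    return math.exp(a), alpha, beta

# Simulated firm data (assumed values) with log-normal error U.
rng = random.Random(2)
N = [rng.uniform(50.0, 500.0) for _ in range(2000)]   # labor hours
K = [rng.uniform(20.0, 200.0) for _ in range(2000)]   # capital hours
Q = [1.5 * n**0.7 * k**0.3 * math.exp(rng.gauss(0.0, 0.1)) for n, k in zip(N, K)]

A_hat, alpha_hat, beta_hat = fit_cobb_douglas(N, K, Q)
print(A_hat, alpha_hat, beta_hat)  # estimates close to the assumed 1.5, 0.7, 0.3
```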

Log–log regression can also be used to estimate the [[fractal dimension]] of a naturally occurring [[fractal]].

However, going in the other direction – observing that data appears as an approximate line on a log–log scale and concluding that the data follows a power law – is not always valid.<ref>{{cite journal|author1=Clauset, A. |author2=Shalizi, C. R. |author3=Newman, M. E. J. |year=2009| title=Power-Law Distributions in Empirical Data| journal=SIAM Review |volume=51 |issue=4 |pages=661–703 |arxiv=0706.1062 |bibcode=2009SIAMR..51..661C| doi=10.1137/070710111|s2cid=9155618 }}</ref>

In fact, many other functional forms appear approximately linear on the log–log scale, and simply evaluating the [[goodness of fit]] of a [[linear regression]] on logged data using the [[coefficient of determination]] (''R''<sup>2</sup>) may be invalid, as the assumptions of the linear regression model, such as Gaussian error, may not be satisfied. In addition, tests of fit of the log–log form may exhibit low [[statistical power]], as these tests may have a low likelihood of rejecting power laws in the presence of other true functional forms. While simple log–log plots may be instructive in detecting possible power laws, and have been used dating back to [[Vilfredo Pareto|Pareto]] in the 1890s, validation as a power law requires more sophisticated statistics.

These graphs are also extremely useful when data are gathered by varying the control variable along an exponential function, in which case the control variable ''x'' is more naturally represented on a log scale, so that the data points are evenly spaced, rather than compressed at the low end. The output variable ''y'' can either be represented linearly, yielding a [[lin–log graph]] (log ''x'', ''y''), or its logarithm can also be taken, yielding the log–log graph (log ''x'', log ''y'').
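
Varying the control variable along an exponential function means choosing sample points with a constant ratio between neighbors, which makes them evenly spaced on a logarithmic axis. A minimal Python sketch follows; the function name <code>logspace_points</code> and the chosen range are assumptions made for the example.

```python
import math

def logspace_points(start, stop, count):
    """Return `count` points from start to stop with a constant ratio between neighbors."""
    if start <= 0 or stop <= 0:
        raise ValueError("start and stop must be positive")
    if count < 2:
        raise ValueError("count must be at least 2")
    ratio = (stop / start) ** (1.0 / (count - 1))
    return [start * ratio**i for i in range(count)]

# Assumed example: four control-variable settings spanning three decades.
xs = logspace_points(1.0, 1000.0, 4)

# On a log10 axis the gaps between neighboring points are all equal.
log_steps = [math.log10(hi) - math.log10(lo) for lo, hi in zip(xs, xs[1:])]

print(xs)         # approximately [1, 10, 100, 1000]
print(log_steps)  # approximately [1, 1, 1]
```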

A [[Bode plot]] (a [[plot (graphics)|graph]] of the [[frequency response]] of a system) is also a log–log plot.

In [[chemical kinetics]], the general form of the dependence of the [[reaction rate]] on concentration takes the form of a power law ([[law of mass action]]), so a log-log plot is useful for estimating the reaction parameters from experiment.

== See also ==

- [[Semi-log plot]] (lin–log or log–lin)

- [[Power law]]

- [[Zipf's law]]

- [[Log-linear model]]

- [[Log-normal distribution]]

- [[Log-logistic distribution]]

- [[Data transformation (statistics)]]

- [[Variance-stabilizing transformation]]

== References ==
{{reflist}}

{{DEFAULTSORT:Log-Log Graph}} [[Category:Logarithmic scales of measurement]] [[Category:Statistical charts and diagrams]]

[[de:Logarithmenpapier#Doppeltlogarithmisches Papier]]

Related Articles

From MOAI Insights

디지털 트윈, 당신 공장엔 이미 있다 — 엑셀과 MES 사이 어딘가에

디지털 트윈은 10억짜리 3D 시뮬레이션이 아니다. 지금 쓰고 있는 엑셀에 좋은 질문 하나를 더하는 것 — 두 전문가가 중소 제조기업이 이미 가진 데이터로 예측하는 공장을 만드는 현실적 로드맵을 제시한다.

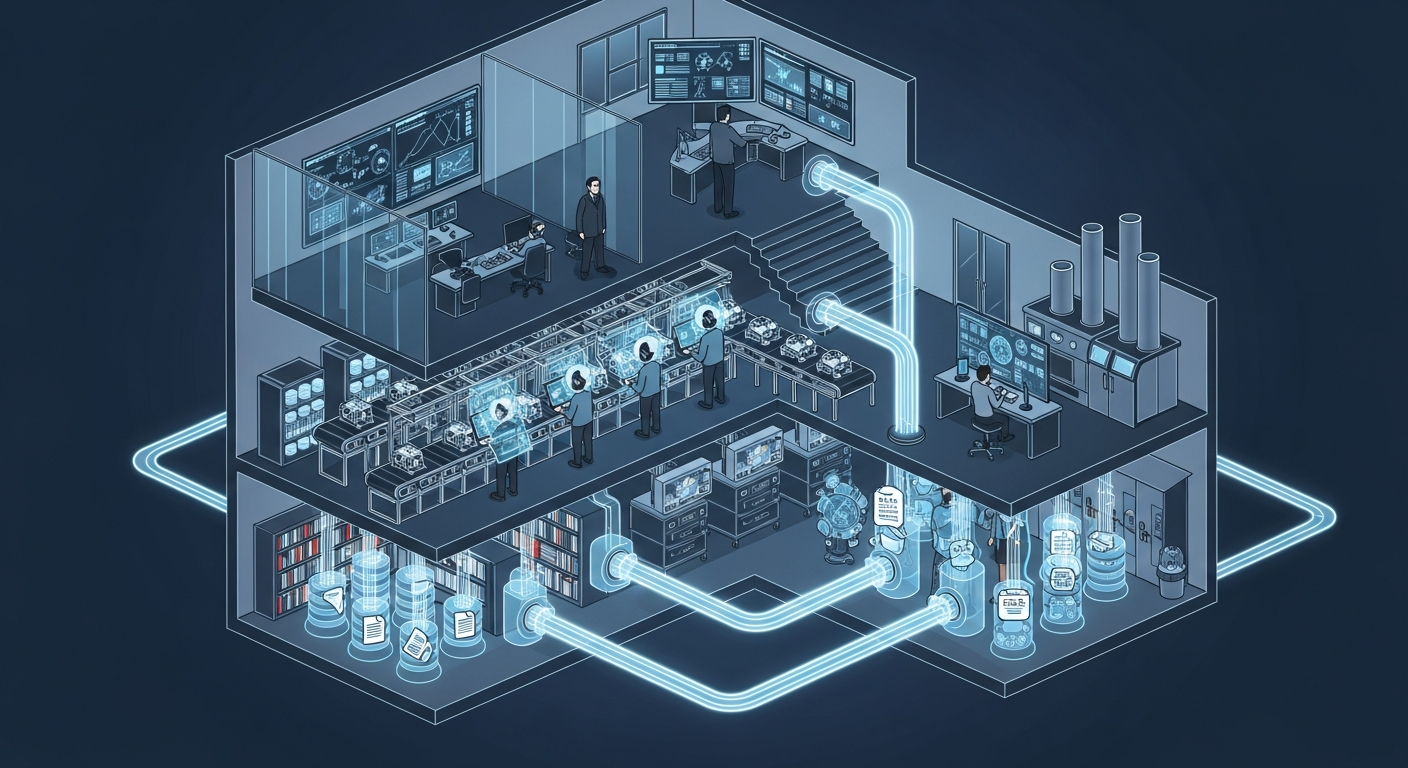

공장의 뇌는 어떻게 생겼는가 — 제조운영 AI 아키텍처 해부

지식관리, 업무자동화, 의사결정지원 — 따로 보면 다 있던 것들입니다. 제조 AI의 진짜 차이는 이 셋이 순환하면서 '우리 공장만의 지능'을 만든다는 데 있습니다.

그 30분을 18년 동안 매일 반복했습니다 — 품질팀장이 본 AI Agent

18년차 품질팀장이 매일 아침 30분씩 반복하던 데이터 분석을 AI Agent가 3분 만에 해냈습니다. 챗봇과는 완전히 다른 물건 — 직접 시스템에 접근해서 데이터를 꺼내고 분석하는 AI의 현장 도입기.

Want to apply this in your factory?

MOAI helps manufacturing companies adopt AI tailored to their operations.

Talk to us →