Scale co-occurrence matrix

'''Scale co-occurrence matrix (SCM)''' is a method for image [[feature extraction]] within [[scale space]] after [[wavelet transform]]ation, proposed by Wu Jun and Zhao Zhongming (Institute of Remote Sensing Application, [[China]]). In practice, a discrete wavelet transformation is first applied to a gray-level image to obtain sub-images at different scales. A series of scale-based co-occurrence matrices is then constructed, each describing the gray-level variation between two adjacent scales. Finally, selected functions (such as the Harris statistical approach) are used to calculate measurements from the SCM for feature extraction and classification. The method rests on the observation that the way texture information changes from one scale to another can, to some extent, characterize that texture, and can therefore serve as a criterion for feature extraction. The matrix captures the relation of features between different scales rather than the features within a single scale space, which represents the scale property of texture better. Several experiments also indicate that it gives more accurate results for texture classification than traditional texture classification methods.{{cite journal|last1=Wu|first1=Jun|last2=Zhao|first2=Zhongming|title=Scale Co-occurrence Matrix for Texture Analysis using Wavelet Transformation|url=http://caod.oriprobe.com/articles/3275502/Scale_Co_occurrence_Matrix_for_Texture_Analysis_Using_Wavelet_Transfor.htm|journal=Journal of Remote Sensing|date=Mar 2001|volume=5|issue=2|page=100}}

== Background ==
Texture can be regarded as a similarity grouping in an image. Traditional texture analysis can be divided into four major issues: feature extraction, texture discrimination, texture classification and shape from texture (reconstructing 3D surface geometry from texture information). Traditional feature extraction approaches are usually categorized as structural, statistical, model-based or transform-based.{{cite book|last1=Duda|first1=R.O.|title=Pattern Classification and Scene Analysis|isbn=978-0471223610|date=1973-02-09|publisher=Wiley |url-access=registration|url=https://archive.org/details/patternclassific00rich}} Wavelet transformation is a popular method in numerical analysis and functional analysis that captures both frequency and location information. The gray-level co-occurrence matrix provides an important basis for SCM construction. SCM based on the discrete wavelet frame transformation makes use of both correlations and feature information, so that it combines structural and statistical benefits.

=== Discrete wavelet frame (DWF) ===
To construct an SCM, a discrete wavelet frame (DWF) transformation is first applied to obtain a series of sub-images. The discrete wavelet frame is nearly identical to the standard wavelet transform,{{cite journal|last1=Lund|first1=Kevin|last2=Burgess|first2=Curt|title=Producing high-dimensional semantic spaces from lexical co-occurrence|journal=Behavior Research Methods|date=June 1996|volume=28|issue=2|pages=203–208}} except that the filters are upsampled rather than the image being downsampled. Given an image, the DWF decomposes its channels using the same method as the wavelet transform, but without the subsampling step. This results in four filtered images with the same size as the input image. The decomposition is then continued in the LL channel only, as in the wavelet transform, but since the image is not subsampled, the filter has to be upsampled by inserting zeros between its coefficients. The number of channels, and hence the number of features, for a DWF with ''l'' decomposition levels is given by 3 × ''l'' + 1 (three detail channels per level plus the final LL channel).{{cite journal|last1=Mallat|first1=S.G.|title=A theory for multiresolution signal decomposition: The wavelet representation|journal=IEEE Transactions on Pattern Analysis and Machine Intelligence|volume=11|issue=7|date=1989|pages=674–693|doi=10.1109/34.192463|bibcode=1989ITPAM..11..674M|url=https://repository.upenn.edu/cgi/viewcontent.cgi?article=1703&context=cis_reports}} The one-dimensional discrete wavelet frame decomposes a signal as follows:

: <math>d_i(k) = \left[ [g_i]^T x \right], \quad (i=1,\ldots,N)</math>
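The filter-upsampling idea described above can be illustrated with a minimal Python sketch of a one-dimensional undecimated decomposition. It is not the authors' implementation: the Haar-type filters (with coefficients 0.5), the circular boundary handling, and the function names <code>upsample_filter</code>, <code>circular_filter</code> and <code>dwf_1d</code> are all assumptions made for illustration. Each output channel has the same length as the input because the signal is never subsampled.

```python
import numpy as np

def upsample_filter(h, level):
    """Insert 2**level - 1 zeros between the coefficients of filter h."""
    step = 2 ** level
    up = np.zeros((len(h) - 1) * step + 1)
    up[::step] = h
    return up

def circular_filter(x, h):
    """Circular correlation y(k) = sum_n h[n] * x[(k + n) mod N]; same length as x."""
    y = np.zeros(len(x))
    for n, coeff in enumerate(h):
        y += coeff * np.roll(x, -n)
    return y

def dwf_1d(x, levels):
    """Undecimated decomposition: returns (detail channels, final low-pass channel)."""
    low = np.array([0.5, 0.5])    # assumed Haar-type low-pass filter
    high = np.array([0.5, -0.5])  # assumed Haar-type high-pass filter
    approx = np.asarray(x, dtype=float)
    details = []
    for level in range(levels):
        # Upsample the filters instead of downsampling the signal.
        g = upsample_filter(high, level)
        h = upsample_filter(low, level)
        details.append(circular_filter(approx, g))
        approx = circular_filter(approx, h)  # only the low-pass channel is decomposed further
    return details, approx

signal = np.array([4.0, 6.0, 10.0, 12.0, 8.0, 6.0, 5.0, 5.0])
details, approx = dwf_1d(signal, levels=2)
```

In one dimension this yields ''l'' detail channels plus one low-pass channel. With these particular filters the channels simply sum back to the input signal, which gives a convenient sanity check.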

== Example ==
Suppose there are two sub-images ''X''<sub>1</sub> and ''X''<sub>0</sub> from the parent image ''X'' (in practice ''X'' = ''X''<sub>0</sub>), with ''X''<sub>1</sub> = [1 1; 1 2] and ''X''<sub>0</sub> = [1 1; 1 4]. The largest gray level is 4, so that ''k'' = 1 and ''G'' = 4. The entries ''X''<sub>1</sub>(1,1), (1,2) and (2,1) are 1, and the entries ''X''<sub>0</sub>(1,1), (1,2) and (2,1) are also 1, thus Φ<sub>1</sub>(1,1) = 3. Similarly, ''X''<sub>1</sub>(2,2) = 2 while ''X''<sub>0</sub>(2,2) = 4, so Φ<sub>1</sub>(2,4) = 1. The SCM is as follows, with rows indexed by the gray level in ''X''<sub>1</sub> and columns by the gray level in ''X''<sub>0</sub>:

{| class="wikitable"
|-
! G=4 !! Gray level 0 !! Gray level 1 !! Gray level 2 !! Gray level 3 !! Gray level 4
|-
| Gray level 0 || 0 || 0 || 0 || 0 || 0
|-
| Gray level 1 || 0 || 3 || 0 || 0 || 0
|-
| Gray level 2 || 0 || 0 || 0 || 0 || 1
|-
| Gray level 3 || 0 || 0 || 0 || 0 || 0
|-
| Gray level 4 || 0 || 0 || 0 || 0 || 0
|}
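The counting in this example can be reproduced with a short Python sketch. It assumes, as the example suggests, that Φ<sub>1</sub>(''i'', ''j'') counts the pixel positions whose gray level is ''i'' in ''X''<sub>1</sub> and ''j'' in ''X''<sub>0</sub>. The function names <code>scale_cooccurrence</code> and <code>scm_energy</code> are hypothetical, and the energy statistic is only one possible measurement, not necessarily the one used by the authors.

```python
import numpy as np

def scale_cooccurrence(coarse, fine, max_level):
    """Count, for each gray-level pair (i, j), the positions where coarse == i and fine == j."""
    coarse = np.asarray(coarse, dtype=int)
    fine = np.asarray(fine, dtype=int)
    if coarse.shape != fine.shape:
        raise ValueError("both sub-images must have the same shape")
    scm = np.zeros((max_level + 1, max_level + 1), dtype=int)
    # np.add.at accumulates correctly even when an index pair repeats.
    np.add.at(scm, (coarse.ravel(), fine.ravel()), 1)
    return scm

def scm_energy(scm):
    """Sum of squared entries of the normalized matrix (an illustrative statistic)."""
    p = scm / scm.sum()
    return float((p ** 2).sum())

X1 = [[1, 1], [1, 2]]  # sub-image at scale 1
X0 = [[1, 1], [1, 4]]  # sub-image at scale 0 (the parent image)

phi1 = scale_cooccurrence(X1, X0, max_level=4)
energy = scm_energy(phi1)
```

Here <code>phi1[1, 1]</code> is 3 and <code>phi1[2, 4]</code> is 1, matching the values of Φ<sub>1</sub>(1,1) and Φ<sub>1</sub>(2,4) given in the example.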

== External links ==
*{{cite book|publisher=[[IEEE]]|doi=10.1109/MMSP.1998.738911|chapter=Discrete wavelet frame representations of color texture features for image query|title=1998 IEEE Second Workshop on Multimedia Signal Processing (Cat. No.98EX175)|year=1998|last1=Tao Chen|last2=Kai-Kuang Ma|last3=Li-Hui Chen|pages=45–50|isbn=0-7803-4919-9|s2cid=1833240}}
*[http://www.mathworks.com/matlabcentral/fileexchange/11727-cooccurrence-matrix co-occurrence-matrix MATLAB tutorial]

== References ==
{{Reflist}}

[[Category:Feature detection (computer vision)]]
[[Category:Wavelets]]
[[Category:Image compression]]
[[Category:Numerical analysis]]
[[Category:Image processing software]]

Related Articles

From MOAI Insights

디지털 트윈, 당신 공장엔 이미 있다 — 엑셀과 MES 사이 어딘가에

디지털 트윈은 10억짜리 3D 시뮬레이션이 아니다. 지금 쓰고 있는 엑셀에 좋은 질문 하나를 더하는 것 — 두 전문가가 중소 제조기업이 이미 가진 데이터로 예측하는 공장을 만드는 현실적 로드맵을 제시한다.

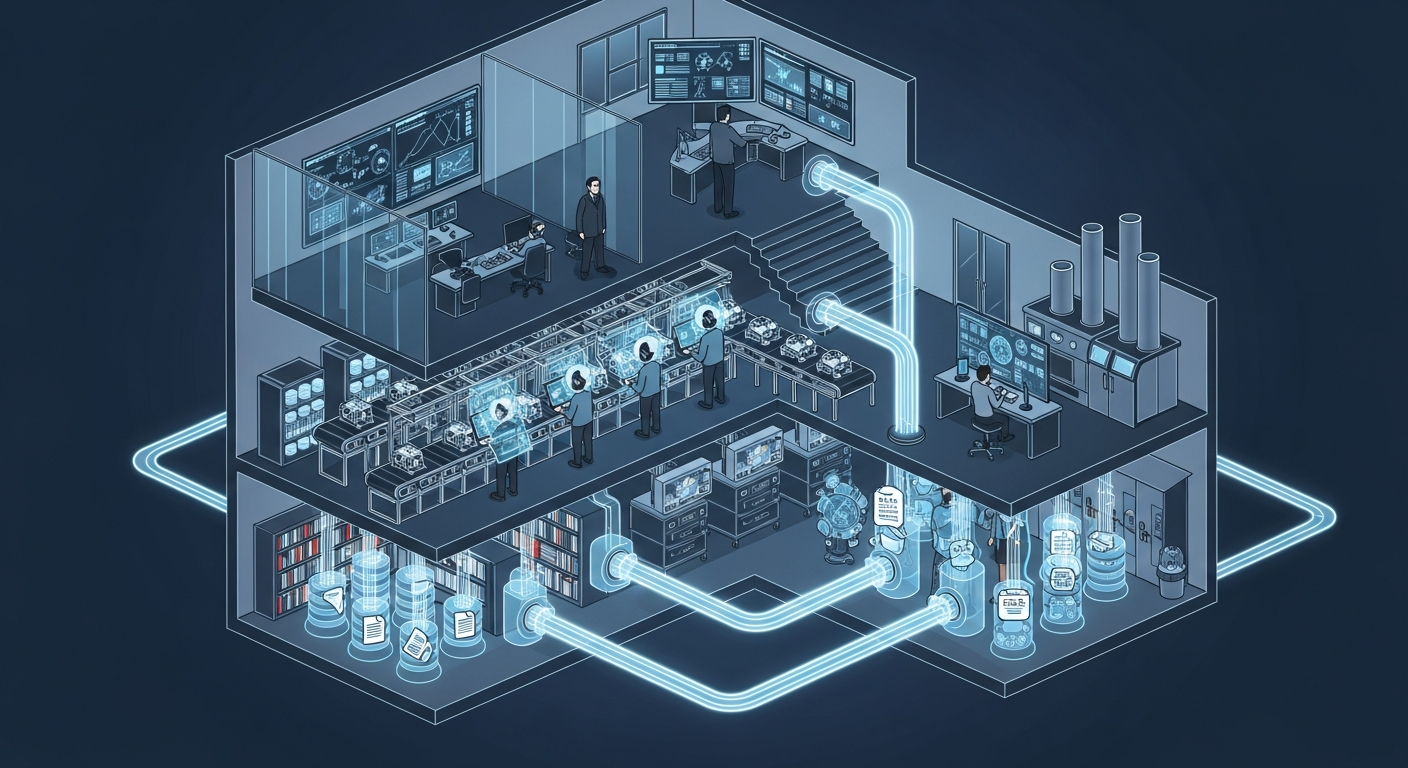

공장의 뇌는 어떻게 생겼는가 — 제조운영 AI 아키텍처 해부

지식관리, 업무자동화, 의사결정지원 — 따로 보면 다 있던 것들입니다. 제조 AI의 진짜 차이는 이 셋이 순환하면서 '우리 공장만의 지능'을 만든다는 데 있습니다.

그 30분을 18년 동안 매일 반복했습니다 — 품질팀장이 본 AI Agent

18년차 품질팀장이 매일 아침 30분씩 반복하던 데이터 분석을 AI Agent가 3분 만에 해냈습니다. 챗봇과는 완전히 다른 물건 — 직접 시스템에 접근해서 데이터를 꺼내고 분석하는 AI의 현장 도입기.

Want to apply this in your factory?

MOAI helps manufacturing companies adopt AI tailored to their operations.

Talk to us →