ADALINE

{{short description|Early single-layer artificial neural network}}

[[Image:Adaline flow chart.gif|right|thumb|241x241px|Learning inside a single-layer ADALINE]]

[[File:Knobby ADALINE.jpg|thumb|286x286px|Photo of an ADALINE machine, with hand-adjustable weights implemented by rheostats]]

[[File:Schematic_of_adaline.png|thumb|346x346px|Schematic of a single ADALINE unit[http://www-isl.stanford.edu/~widrow/papers/t1960anadaptive.pdf 1960: An adaptive "ADALINE" neuron using chemical "memistors"]]]

'''ADALINE''' ('''Adaptive Linear Neuron''' or later '''Adaptive Linear Element''') is an early single-layer [[Neural network (machine learning)|artificial neural network]] and the name of the physical device that implemented it.{{cite book |url=https://books.google.com/books?id=-l-yim2lNRUC&q=adaline+smithsonian+widrow&pg=PA54 |title=Talking Nets: An Oral History of Neural Networks |isbn=9780262511117 |last1=Anderson |first1=James A. |last2=Rosenfeld |first2=Edward |year=2000 |publisher=MIT Press }} YouTube: [https://www.youtube.com/watch?v=IEFRtz68m-8 widrowlms: Science in Action] YouTube: [https://www.youtube.com/watch?v=hc2Zj55j1zU widrowlms: The LMS algorithm and ADALINE. Part I - The LMS algorithm] YouTube: [https://www.youtube.com/watch?v=skfNlwEbqck widrowlms: The LMS algorithm and ADALINE. Part II - ADALINE and memistor ADALINE] It was developed by professor [[Bernard Widrow]] and his doctoral student [[Marcian Hoff]] at [[Stanford University]] in 1960. It is based on the [[perceptron]] and consists of weights, a bias, and a summation function. The weights and biases were implemented by [[Potentiometer|rheostats]] (as seen in the "knobby ADALINE"), and later, [[memistor]]s. It found extensive use in adaptive signal processing, especially adaptive noise filtering.{{Cite journal |last1=Widrow |first1=B. |last2=Glover |first2=J.R. |last3=McCool |first3=J.M. |last4=Kaunitz |first4=J. |last5=Williams |first5=C.S. |last6=Hearn |first6=R.H. |last7=Zeidler |first7=J.R. |last8=Eugene Dong |first8=Jr. |last9=Goodlin |first9=R.C. |date=1975 |title=Adaptive noise cancelling: Principles and applications |journal=Proceedings of the IEEE |volume=63 |issue=12 |pages=1692–1716 |doi=10.1109/PROC.1975.10036 |issn=0018-9219}}

The difference between ADALINE and the standard (Rosenblatt) perceptron is in how they learn. ADALINE adjusts its weights to match a teacher signal before the [[Heaviside step function|Heaviside function]] is applied (see figure), so its error is measured on the linear sum. The standard perceptron adjusts its weights to match the correct output after the Heaviside function is applied, so its error is measured on the thresholded output.
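The contrast can be illustrated with a minimal Python sketch. The function names, the learning rate `eta`, and the toy numbers are assumptions made for illustration and do not come from the cited sources.

```python
def dot(w, x):
    """Weighted sum of the inputs (bias omitted for brevity)."""
    return sum(wn * xn for wn, xn in zip(w, x))


def heaviside(v):
    """Heaviside step: 1 for non-negative input, 0 otherwise."""
    return 1.0 if v >= 0 else 0.0


def adaline_update(w, x, y, eta):
    """ADALINE: the error is taken on the linear sum, before thresholding."""
    err = y - dot(w, x)
    return [wn + eta * err * xn for wn, xn in zip(w, x)]


def perceptron_update(w, x, y, eta):
    """Perceptron: the error is taken on the thresholded output."""
    err = y - heaviside(dot(w, x))
    return [wn + eta * err * xn for wn, xn in zip(w, x)]


# Hypothetical example: the thresholded output is already correct,
# but the linear sum (0.4) has not yet reached the target (1.0).
w = [0.2, 0.2]
x = [1.0, 1.0]
y = 1.0

w_adaline = adaline_update(w, x, y, eta=0.1)
w_perceptron = perceptron_update(w, x, y, eta=0.1)
```

In this example the perceptron leaves the weights unchanged because its thresholded output already equals the target, while ADALINE keeps adjusting them because the linear sum still differs from the teacher signal.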

A '''m'''ultilayer network of '''ADALINE''' units is known as a '''MADALINE'''.

==Definition==

ADALINE is a single-layer neural network with multiple nodes, where each node accepts multiple inputs and generates one output. Given the following variables:

- \boldsymbol{x}, the input vector

- \boldsymbol{w}, the weight vector

- N, the number of inputs

- b, some bias

- o, the output of the model,

the output is:

: o=\sum_{n=1}^{N} x_n w_n + b

If we further assume that x_0=1 and w_0=b, so that the bias is treated as the weight of a constant input, then the output reduces to:

: o=\sum_{n=0}^{N} x_n w_n
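The equivalence of the two forms can be checked with a short Python sketch. The function names and the sample values are illustrative assumptions, not part of the original definition.

```python
def adaline_output(x, w, b):
    """Output with an explicit bias: o = sum_{n=1}^{N} x_n * w_n + b."""
    return sum(xn * wn for xn, wn in zip(x, w)) + b


def adaline_output_augmented(x, w, b):
    """Same output after prepending x_0 = 1 and w_0 = b."""
    x_aug = [1.0] + list(x)
    w_aug = [b] + list(w)
    return sum(xn * wn for xn, wn in zip(x_aug, w_aug))


# Hypothetical input, weights, and bias with N = 3
x = [0.5, -1.0, 2.0]
w = [0.2, 0.4, -0.1]
b = 0.3

o_explicit = adaline_output(x, w, b)
o_augmented = adaline_output_augmented(x, w, b)
```

Both calls compute the same weighted sum, so the two results agree up to floating-point rounding.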

==Learning rule==

The [[learning rule]] used by ADALINE is the LMS ("least mean squares") algorithm, a special case of [[gradient descent]].

Given the following:

- \eta, the [[learning rate]]

- o, the model output

- y, the desired target

- E=(y - o)^2, the square of the error,

the LMS algorithm updates the weights as follows:

: \boldsymbol{w} \leftarrow \boldsymbol{w} + \eta(y - o)\boldsymbol{x}

This update rule follows the negative gradient of E with respect to the weights, \partial E / \partial \boldsymbol{w} = -2(y - o)\boldsymbol{x}, with the constant factor 2 absorbed into \eta. It therefore reduces E, the square of the error,{{cite web |title=Adaline (Adaptive Linear) |url=http://www.cs.utsa.edu/~bylander/cs4793/learnsc32.pdf |work=CS 4793: Introduction to Artificial Neural Networks |publisher=Department of Computer Science, University of Texas at San Antonio}} and is in fact the [[stochastic gradient descent]] update for [[linear regression]].{{cite web|author=Avi Pfeffer |title=CS181 Lecture 5 — Perceptrons |url=http://www.seas.harvard.edu/courses/cs181/files/lecture05-notes.pdf |publisher=Harvard University }}{{dead link|date=June 2017 |bot=InternetArchiveBot |fix-attempted=yes }}
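A minimal Python sketch of the LMS update applied one sample at a time is given below. The training data (a bipolar AND problem), the learning rate, the number of epochs, and the function names are assumptions chosen for illustration. The bias is handled by setting x_0 = 1, and the sign of the linear output is taken only when making predictions, not during learning.

```python
def lms_step(w, x, y, eta):
    """One LMS update: w <- w + eta * (y - o) * x, where o is the linear output."""
    o = sum(wn * xn for wn, xn in zip(w, x))
    err = y - o
    return [wn + eta * err * xn for wn, xn in zip(w, x)]


def train(samples, eta=0.05, epochs=200):
    """Cycle through the samples, applying one LMS step per sample."""
    w = [0.0] * len(samples[0][0])
    for _ in range(epochs):
        for x, y in samples:
            w = lms_step(w, x, y, eta)
    return w


# Hypothetical toy data: bipolar AND, with x_0 = 1 as the bias input
samples = [
    ([1.0, -1.0, -1.0], -1.0),
    ([1.0, -1.0, 1.0], -1.0),
    ([1.0, 1.0, -1.0], -1.0),
    ([1.0, 1.0, 1.0], 1.0),
]

w = train(samples)

# Threshold the linear output only at prediction time
predictions = [
    1 if sum(wn * xn for wn, xn in zip(w, x)) >= 0 else -1
    for x, _ in samples
]
```

Because the targets cannot be matched exactly by a linear function of these inputs, the weights settle near the least-squares solution rather than driving the error to zero; the thresholded predictions still classify the four samples correctly.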

==MADALINE==

MADALINE (Many ADALINE{{Cite conference|author1=Rodney Winter|author2=Bernard Widrow|year=1988|title=MADALINE RULE II: A training algorithm for neural networks|url=http://www-isl.stanford.edu/~widrow/papers/c1988madalinerule.pdf|conference=IEEE International Conference on Neural Networks|pages=401–408|doi=10.1109/ICNN.1988.23872}}) is a three-layer (input, hidden, output), fully connected, [[feedforward neural network]] architecture for [[Statistical classification|classification]] that uses ADALINE units in its hidden and output layers; that is, its [[activation function]] is the [[sign function]]. YouTube: [https://www.youtube.com/watch?v=IEFRtz68m-8 widrowlms: Science in Action] (Madaline is mentioned at the start and at 8:46). The three-layer network uses [[memistor]]s. As the sign function is non-differentiable, [[backpropagation]] cannot be used to train MADALINE networks. Hence, three different training algorithms have been suggested, called Rule I, Rule II and Rule III.

Despite many attempts, Widrow and his colleagues never succeeded in training more than a single layer of weights in a MADALINE model until Widrow saw the backpropagation algorithm at a 1985 conference in [[Snowbird, Utah]].{{Cite book |url=https://direct.mit.edu/books/book/4886/Talking-NetsAn-Oral-History-of-Neural-Networks |title=Talking Nets: An Oral History of Neural Networks |date=2000 |publisher=The MIT Press |isbn=978-0-262-26715-1 |editor-last=Anderson |editor-first=James A. |language=en |doi=10.7551/mitpress/6626.003.0004 |editor-last2=Rosenfeld |editor-first2=Edward}}

MADALINE Rule 1 (MRI) - The first of these dates back to 1962.{{cite journal |last1=Widrow |first1=Bernard |year=1962 |title=Generalization and information storage in networks of adaline neurons |url=https://isl.stanford.edu/~widrow/papers/c1961generalizationand.pdf |journal=Self-organizing Systems |pages=435–461}} The network consists of two layers: the first is made of ADALINE units (let the output of the ''i''th ADALINE unit be o_i); the second layer has two units. One is a majority-voting unit that takes in all o_i and outputs +1 if there are more positives than negatives, and -1 otherwise. The other is a "job assigner": if, for example, the desired output is -1 and differs from the majority-voted output, the job assigner calculates the minimal number of ADALINE units that must change their outputs from positive to negative, picks the ADALINE units that are ''closest'' to being negative, and makes them update their weights according to the ADALINE learning rule. This was thought of as a form of the "minimal disturbance principle".
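The majority vote and the job assigner described above can be sketched in Python as follows. This is an illustrative reading of the description, not Widrow's original procedure: the function names, the learning rate, the use of the desired output as the LMS target for the selected units, and the assumption of an odd number of units (so that the vote cannot tie) are all choices made for this sketch.

```python
def sign(v):
    return 1 if v >= 0 else -1


def linear_outputs(weights, x):
    """Linear sum of each first-layer ADALINE unit for input x (x[0] = 1 is the bias input)."""
    return [sum(wn * xn for wn, xn in zip(w, x)) for w in weights]


def majority_vote(outputs):
    """+1 if more units are positive than negative, else -1 (odd unit count assumed)."""
    return sign(sum(sign(o) for o in outputs))


def mri_step(weights, x, desired, eta=0.1):
    """One job-assigner step; returns (new_weights, indices of the units selected)."""
    outs = linear_outputs(weights, x)
    if majority_vote(outs) == desired:
        return weights, []
    # Minimal number of units that must change sign to win the vote
    agree = sum(1 for o in outs if sign(o) == desired)
    needed = len(outs) // 2 + 1 - agree
    # Among the disagreeing units, pick those closest to the decision boundary
    wrong = [i for i, o in enumerate(outs) if sign(o) != desired]
    wrong.sort(key=lambda i: abs(outs[i]))
    chosen = wrong[:needed]
    new_weights = [list(w) for w in weights]
    for i in chosen:
        err = desired - outs[i]  # assumption: LMS target is the desired output
        new_weights[i] = [wn + eta * err * xn for wn, xn in zip(weights[i], x)]
    return new_weights, chosen


# Hypothetical example with three units
x = [1.0, 1.0]
weights = [[0.1, 0.1], [0.4, 0.5], [-0.2, -0.3]]
new_weights, chosen = mri_step(weights, x, desired=-1)
vote_after = majority_vote(linear_outputs(new_weights, x))
```

Only the unit whose linear sum is closest to zero is selected here, which keeps the change to the network as small as possible; the other units' weights are left untouched.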

The largest MADALINE machine built had 1000 weights, each implemented by a memistor. It was built in 1963 and used MRI for learning. B. Widrow, "Adaline and Madaline-1963, plenary speech," Proc. 1st IEEE Intl. Conf. on Neural Networks, Vol. 1, pp. 145-158, San Diego, CA, June 23, 1987

Some MADALINE machines were demonstrated to perform tasks including [[inverted pendulum]] balancing, [[weather forecasting]], and [[speech recognition]].

MADALINE Rule 2 (MRII) - The second training algorithm, described in 1988, improved on Rule I. The Rule II training algorithm is based on a principle called "minimal disturbance". It proceeds by looping over training examples, and for each example, it:

- finds the hidden layer unit (ADALINE classifier) with the lowest confidence in its prediction,

- tentatively flips the sign of the unit,

- accepts or rejects the change based on whether the network's error is reduced,

- stops when the error is zero.
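The trial-and-accept loop above can be sketched in Python for a single training example. This is a simplified illustration under several assumptions that are not taken from the 1988 paper: the output unit's weights are held fixed, an accepted flip is made permanent by repeating LMS updates on the chosen hidden unit until its sign changes, and all names and numbers are hypothetical.

```python
def sign(v):
    return 1 if v >= 0 else -1


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def forward(hidden_w, out_w, x, override=None):
    """Return (hidden linear sums, hidden signs, network output).

    override=(i, s) forces hidden unit i to output s, for a trial flip.
    """
    sums = [dot(w, x) for w in hidden_w]
    signs = [sign(s) for s in sums]
    if override is not None:
        i, value = override
        signs[i] = value
    # out_w[0] is the bias weight of the output unit
    out = sign(out_w[0] + dot(out_w[1:], signs))
    return sums, signs, out


def mrii_step(hidden_w, out_w, x, desired, eta=0.1, max_updates=100):
    """Try flipping hidden units, least confident first; adapt the first one that helps."""
    sums, signs, out = forward(hidden_w, out_w, x)
    if out == desired:
        return hidden_w, None
    for i in sorted(range(len(sums)), key=lambda k: abs(sums[k])):
        flipped = -signs[i]
        _, _, trial_out = forward(hidden_w, out_w, x, override=(i, flipped))
        if trial_out != desired:
            continue  # reject: the trial flip does not reduce the error
        # Accept: adapt the unit with LMS until its sign actually flips
        w = list(hidden_w[i])
        for _ in range(max_updates):
            o = dot(w, x)
            if sign(o) == flipped:
                break
            w = [wn + eta * (flipped - o) * xn for wn, xn in zip(w, x)]
        new_hidden = [list(h) for h in hidden_w]
        new_hidden[i] = w
        return new_hidden, i
    return hidden_w, None


# Hypothetical example: three hidden units feeding one output unit
x = [1.0, 1.0]
hidden_w = [[0.1, 0.1], [0.4, 0.5], [-0.2, -0.3]]
out_w = [0.0, 1.0, 1.0, 1.0]
new_hidden, flipped_unit = mrii_step(hidden_w, out_w, x, desired=-1)
output_after = forward(new_hidden, out_w, x)[2]
```

The sketch tries single-unit flips only and returns after the first accepted flip; a full training run would loop over all examples and repeat until the error stops decreasing.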

Additionally, when flipping single units' signs does not drive the error to zero for a particular example, the Rule II training algorithm starts flipping pairs of units' signs, then triples of units, etc.

MADALINE Rule 3 - The third "Rule" applied to a modified network with [[Sigmoid function|sigmoid]] activations instead of sign; it was later found to be equivalent to backpropagation.{{cite journal |last1=Widrow |first1=Bernard |first2=Michael A. |last2=Lehr |title=30 years of adaptive neural networks: perceptron, madaline, and backpropagation |journal=Proceedings of the IEEE |volume=78 |issue=9 |year=1990 |pages=1415–1442 |doi=10.1109/5.58323|s2cid=195704643 }}

==See also==

- [[Multilayer perceptron]]

==References==

{{reflist}}

- {{cite book |last1=Widrow |title=Adaptive Signal Processing |last2=Stearns |first2=S. D. |date=1985 |publisher=Prentice Hall |location=Englewood Cliffs, N.J.}}

==External links==

- {{Cite AV media |url=https://www.youtube.com/watch?v=skfNlwEbqck |title=The LMS algorithm and ADALINE. Part II - ADALINE and memistor ADALINE |date=2012-07-29 |last=widrowlms |access-date=2024-08-17 |via=YouTube}} Widrow demonstrating both a working knobby ADALINE machine and a memistor ADALINE machine.

- {{cite web|url=http://www.gc.ssr.upm.es/inves/neural/ann1/supmodel/linanet.htm |archive-url=https://web.archive.org/web/20020615161357/http://www.gc.ssr.upm.es/inves/neural/ann1/supmodel/linanet.htm |url-status=dead |archive-date=2002-06-15 |title=Delta Learning Rule: ADALINE |work=Artificial Neural Networks |publisher=Universidad Politécnica de Madrid }}

- "[https://web.archive.org/web/20160916163309/http://webee.technion.ac.il/people/skva/TNNLS.pdf Memristor-Based Multilayer Neural Networks With Online Gradient Descent Training]". Implementation of the ADALINE algorithm with memristors in analog computing.

{{DEFAULTSORT:Adaline}} [[Category:Artificial neural networks]]

From MOAI Insights

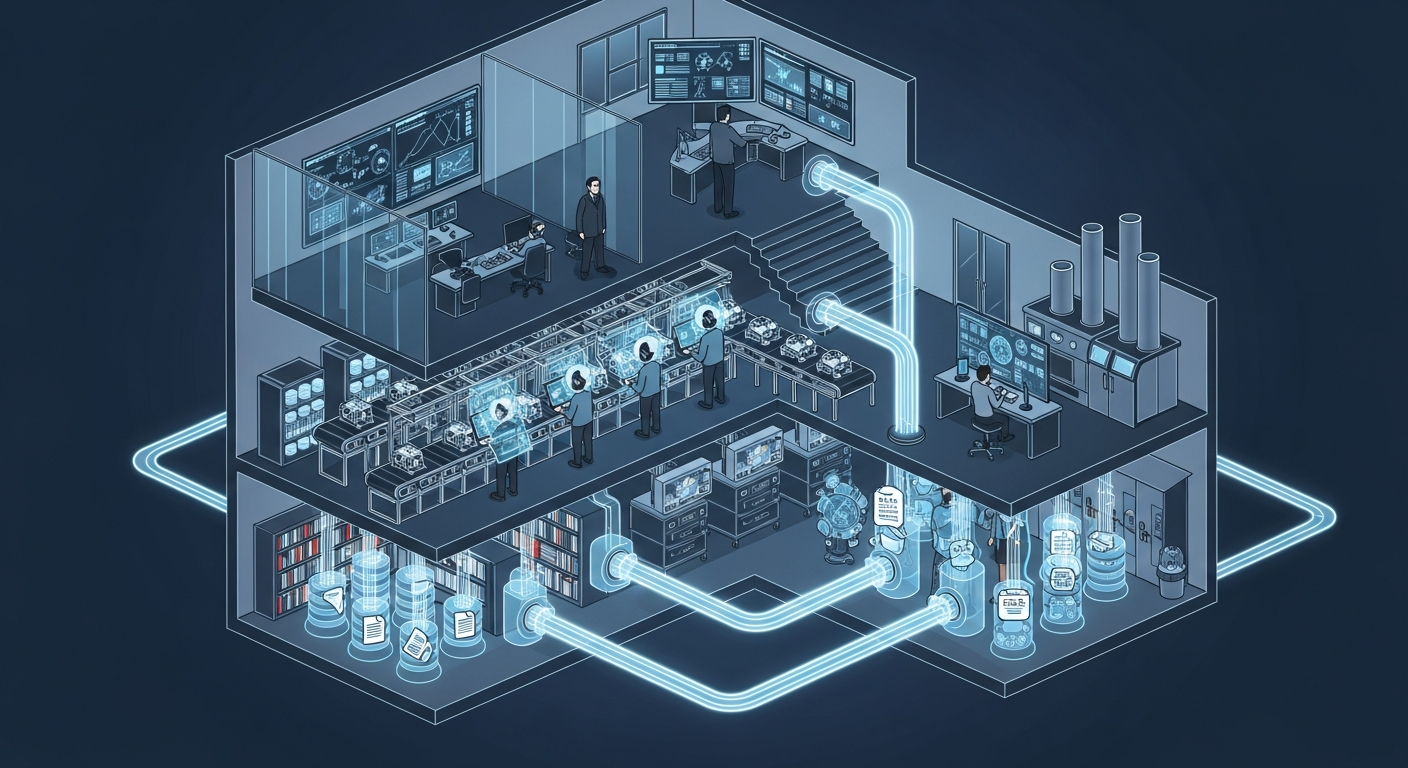

공장의 뇌는 어떻게 생겼는가 — 제조운영 AI 아키텍처 해부

지식관리, 업무자동화, 의사결정지원 — 따로 보면 다 있던 것들입니다. 제조 AI의 진짜 차이는 이 셋이 순환하면서 '우리 공장만의 지능'을 만든다는 데 있습니다.

그 30분을 18년 동안 매일 반복했습니다 — 품질팀장이 본 AI Agent

18년차 품질팀장이 매일 아침 30분씩 반복하던 데이터 분석을 AI Agent가 3분 만에 해냈습니다. 챗봇과는 완전히 다른 물건 — 직접 시스템에 접근해서 데이터를 꺼내고 분석하는 AI의 현장 도입기.

ERP 20년, 나는 왜 AI를 얹기로 했나

ERP 20년차 제조IT본부장의 고백: 3,200만 행의 데이터가 잠들어 있었다. ERP를 바꾸지 않고 AI를 얹자, 일주일 걸리던 불량 분석이 수 초로 줄었다.

Want to apply this in your factory?

MOAI helps manufacturing companies adopt AI tailored to their operations.

Talk to us →