GPU

{{short description|Specialized electronic circuit that accelerates graphics}} {{redirect|GPU}} {{for|an expansion card that contains a graphics processing unit|Graphics card}} [[File:Generic block diagram of a GPU.svg|thumb|upright=2.2|The components of a GPU.]] A '''graphics processing unit''' ('''GPU''') is a specialized [[electronic circuit]] designed for [[digital image processing]] and for accelerating [[computer graphics]]. It is present either as a component on a discrete [[graphics card]] or embedded on [[motherboard]]s, [[mobile phone]]s, [[personal computer]]s, [[workstation]]s, and [[video game console|game consoles]]. GPUs are increasingly used for [[artificial intelligence]] (AI) processing because they accelerate linear algebra, which is also used extensively in graphics processing.

Although there is no single definition of the term, and it may be used to describe any video display system, in modern use a GPU includes the ability to internally perform the calculations needed for various graphics tasks, like rotating and scaling 3D images, and often the additional ability to run custom programs known as [[shader]]s. This contrasts with earlier graphics controllers known as [[video display controller]]s which had no internal calculation capabilities, or [[blitter]]s, which performed only basic memory movement operations. The modern GPU emerged during the 1990s, adding the ability to perform operations like drawing lines and text without [[Central processing unit|CPU]] help, and later adding 3D functionality.
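The rotation and scaling calculations mentioned above are usually expressed as matrix operations applied to every vertex. The following is a minimal sketch in plain Python, not a description of any particular GPU; all function names are hypothetical and chosen only for illustration.

```python
import math

def scale_matrix(s):
    """3x3 matrix that scales a point uniformly by the factor s."""
    return [[s, 0.0, 0.0], [0.0, s, 0.0], [0.0, 0.0, s]]

def rotation_z_matrix(angle_rad):
    """3x3 matrix that rotates a point about the z axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def mat_mul(a, b):
    """Product of two 3x3 matrices (applies b first, then a)."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def transform(matrix, point):
    """Apply a 3x3 matrix to one 3D point."""
    return tuple(sum(matrix[i][k] * point[k] for k in range(3))
                 for i in range(3))

def transform_all(matrix, points):
    """Apply the same matrix to every point; each point is independent."""
    return [transform(matrix, p) for p in points]

# Rotate by 90 degrees about z, then double the size.
combined = mat_mul(scale_matrix(2.0), rotation_z_matrix(math.pi / 2))
moved = transform_all(combined, [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0)])
```

Because the same small matrix is applied to every point, the work per point is identical and independent, which is the property that dedicated graphics hardware exploits.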

Graphics operations are generally independent of one another, which makes them well suited to implementation on separate calculation engines. Modern GPUs include hundreds or thousands of calculation units. Their [[Parallel computing|parallel structure]] makes them useful for non-graphics calculations involving [[embarrassingly parallel]] problems. The ability of GPUs to rapidly perform vast numbers of calculations has led to their adoption in diverse fields including [[artificial intelligence]] (AI), where they excel at data-intensive and computationally demanding tasks such as the training of [[Artificial neural network#Training|neural networks]]. Other non-graphical uses include [[GPU mining|cryptocurrency mining]].
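An embarrassingly parallel task can be sketched as a function applied to each pixel with no dependence on any other pixel. In the illustration below, a thread pool stands in for a GPU's calculation units; the names `brighten`, `process_serial`, and `process_parallel` are hypothetical. In standard CPython the thread pool is not expected to speed up this work, so the sketch illustrates independence only, not performance.

```python
from concurrent.futures import ThreadPoolExecutor

def brighten(pixel, amount=40):
    """Brighten one (r, g, b) pixel; uses no data from other pixels."""
    r, g, b = pixel
    return (min(r + amount, 255), min(g + amount, 255), min(b + amount, 255))

def process_serial(pixels):
    """Process the pixels one after another."""
    return [brighten(p) for p in pixels]

def process_parallel(pixels, workers=4):
    """Hand the pixels to several workers; map() preserves input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(brighten, pixels))

image = [(250, 10, 0), (0, 0, 0), (100, 200, 230), (255, 255, 255)]
serial_result = process_serial(image)
parallel_result = process_parallel(image)
```

Both functions return the same result regardless of how the pixels are divided among workers, which is what allows such work to be spread across many calculation units.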

== History ==
{{See also|Video display controller|List of home computers by video hardware|Sprite (computer graphics)|Timeline of early 3D computer graphics hardware}}

=== 1960s – 1970s ===
[[File:Adage_Graphics_Terminal_brochure_-_crop.jpg|thumb|right|Adage Graphics terminal from 1968 brochure]] Dedicated 3D graphics hardware dates back to graphic [[computer terminal|terminals]] such as the [[Adage, Inc.|Adage]] AGT-30 from 1968 with [[matrix (mathematics)|matrix]] processors. Ikonas made graphics systems with 8- and 24-bit graphics and 3D acceleration in the late 1970s.{{Cite book|title=The History of the GPU - Steps to Invention|edition=1st|author=Jon Peddie|publisher=Springer|year=2022|ISBN=978-3031109676|pages=424}}

[[Arcade system board]]s have used specialized 2D graphics circuits since the 1970s. In early video game hardware, [[Random-access memory|RAM]] for frame buffers was expensive, so video chips composited data together as the display was being scanned out on the monitor.{{cite web|last1=Hague|first1=James|title=Why Do Dedicated Game Consoles Exist?|url=https://prog21.dadgum.com/181.html|website=Programming in the 21st Century|date=September 10, 2013|url-status=dead|archive-url=https://web.archive.org/web/20150504042057/https://prog21.dadgum.com/181.html|archive-date=May 4, 2015|access-date=November 11, 2015}}

A specialized [[barrel shifter]] circuit helped the CPU animate the [[framebuffer]] graphics for various 1970s [[arcade video game]]s from [[Midway Games|Midway]] and [[Taito]], such as ''[[Gun Fight]]'' (1975), ''[[Sea Wolf (video game)|Sea Wolf]]'' (1976), and ''[[Space Invaders]]'' (1978).{{cite web|url=https://github.com/mamedev/mame/tree/master/src/mame/drivers/8080bw.c |archive-url=https://archive.today/20141121125813/https://github.com/mamedev/mame/tree/master/src/mame/drivers/8080bw.c |url-status=dead |archive-date=2014-11-21 |title=mame/8080bw.c at master · mamedev/mame · GitHub |work=GitHub }}{{cite web|url=https://github.com/mamedev/mame/tree/master/src/mame/drivers/mw8080bw.c|archive-url=https://archive.today/20141121125812/https://github.com/mamedev/mame/tree/master/src/mame/drivers/mw8080bw.c|url-status=dead|archive-date=2014-11-21|title=mame/mw8080bw.c at master · mamedev/mame · GitHub|work=GitHub}}{{cite web|url=https://www.computerarcheology.com/wiki/wiki/Arcade/SpaceInvaders|title=Arcade/SpaceInvaders – Computer Archeology|work=computerarcheology.com|url-status=dead|archive-url=https://web.archive.org/web/20140913080838/https://www.computerarcheology.com/wiki/wiki/Arcade/SpaceInvaders|archive-date=2014-09-13}} The [[Namco Galaxian]] arcade system in 1979 used specialized [[graphics hardware]] that supported [[RGB color model|RGB color]], multi-colored sprites, and [[Tile engine|tilemap]] backgrounds.{{cite web|url=https://github.com/mamedev/mame/tree/master/src/mame/video/galaxian.c|archive-url=https://archive.today/20141121114447/https://github.com/mamedev/mame/tree/master/src/mame/video/galaxian.c|url-status=dead|archive-date=2014-11-21|title=mame/galaxian.c at master · mamedev/mame · GitHub|work=GitHub}} The Galaxian hardware was widely used during the [[golden age of arcade video games]], by game companies such as [[Namco]], [[Centuri]], [[Gremlin Industries|Gremlin]], [[Irem]], [[Konami]], Midway, [[Nichibutsu]], [[Sega]], and [[Taito]].{{cite web |title=mame/galaxian.c at master · mamedev/mame · GitHub |url=https://github.com/mamedev/mame/tree/master/src/mame/drivers/galaxian.c |url-status=dead |archive-url=https://archive.today/20141121114529/https://github.com/mamedev/mame/tree/master/src/mame/drivers/galaxian.c |archive-date=2014-11-21 |work=GitHub}}{{cite web |title=MAME – src/mame/drivers/galdrvr.c |url=https://mamedev.org/source/src/mame/drivers/galdrvr.c.html |archive-url=https://web.archive.org/web/20140103070737/https://mamedev.org/source/src/mame/drivers/galdrvr.c.html |archive-date=3 January 2014 }}

[[File:ANTIC chip on an Atari 130XE motherboard.jpg|thumb|upright=1.2|Atari [[ANTIC]] microprocessor on an Atari 130XE motherboard]] The [[Atari 2600]] in 1977 used a video shifter called the [[Television Interface Adaptor]].{{cite news|last1=Springmann|first1=Alessondra|title=Atari 2600 Teardown: What's Inside Your Old Console?|url=https://www.washingtonpost.com/wp-dyn/content/article/2010/09/01/AR2010090103543.html|newspaper=The Washington Post|access-date=July 14, 2015|url-status=live|archive-url=https://web.archive.org/web/20150714082924/https://www.washingtonpost.com/wp-dyn/content/article/2010/09/01/AR2010090103543.html|archive-date=July 14, 2015}} [[Atari 8-bit computers]] (1979) had [[ANTIC]], a video processor that interpreted a "[[display list]]": a sequence of instructions describing how the scan lines map to specific [[bitmapped]] or character modes and where in memory the display data is stored, so a contiguous frame buffer was not required.{{cite web|title=What are the 6502, ANTIC, CTIA/GTIA, POKEY, and FREDDIE chips?|url=https://www.atari8.com/node/31|website=Atari8.com|url-status=dead|archive-url=https://web.archive.org/web/20160305010645/https://www.atari8.com/node/31|archive-date=2016-03-05}} Setting a bit in a display list instruction triggered a [[6502]] [[machine code]] [[subroutine]] on the corresponding [[scan line]].{{cite journal|last1=Wiegers|first1=Karl E.|title=Atari Display List Interrupts|journal=Compute!|date=April 1984|issue=47|page=161|url=https://www.atarimagazines.com/compute/issue47/153_1_Atari_Display_List_Interrupts.php|url-status=live|archive-url=https://web.archive.org/web/20160304035625/https://www.atarimagazines.com/compute/issue47/153_1_Atari_Display_List_Interrupts.php|archive-date=2016-03-04}} ANTIC also supported smooth [[vertical scrolling|vertical]] and [[horizontal scrolling]] independent of the CPU.{{cite journal|last1=Wiegers|first1=Karl E.|title=Atari Fine Scrolling|journal=Compute!|date=December 1985|issue=67|page=110|url=https://www.atarimagazines.com/compute/issue67/338_1_Atari_Fine_Scrolling.php|url-status=live|archive-url=https://web.archive.org/web/20060216181611/https://www.atarimagazines.com/compute/issue67/338_1_Atari_Fine_Scrolling.php|archive-date=2006-02-16}}

=== 1980s ===
[[File:SGI GE25A Geometry Engine die.jpg|right|thumb|Geometry Engine integrated circuit]] In the 1980s significant advancements were made in professional 3D graphics hardware. Perhaps most impactful was the 1981 development of the [[Geometry Engine]], a [[VLSI]] [[vector (mathematics and physics)|vector]] processor [[ASIC]] designed by [[Jim Clark]] and [[Marc Hannah]] at [[Stanford University]]. This processor is the forerunner of modern [[tensor (mathematics)|tensor]] cores and other similar processors marketed for graphics and AI. The Geometry Engine went on to be used in [[Silicon Graphics Incorporated|Silicon Graphics]] [[workstations]] for many years. Silicon Graphics's first product, shipped in November 1983, was the IRIS 1000, a terminal with hardware-accelerated 3D graphics based on the Geometry Engine. The Geometry Engine was capable of approximately 6 million operations per second.{{cite pdf|title=The Geometry Engine: A VLSI Geometry System for Graphics|author=James H. Clark|publisher=[[Stanford University]]|date=1982|place=Palo Alto|url=https://graphics.stanford.edu/courses/cs148-10-summer/docs/1982--clark--geometry_engine.pdf}}

[[File:NEC D7220.jpg|thumb|upright=1.2|NEC [[μPD7220]]A]] The [[NEC μPD7220]] was the first implementation of a [[personal computer]] graphics display processor as a single [[large-scale integration]] (LSI) [[integrated circuit]] chip. This enabled the design of low-cost, high-performance video graphics cards such as those from [[Number Nine Visual Technology]]. It became the best-known GPU until the mid-1980s.{{Cite book |url=https://books.google.com/books?id=2j4hTAqxJ_sC&pg=PA169 |title=Advances in Computer Graphics II |publisher=Springer |year=1986 |isbn=9783540169109 |editor1=Hopgood |editor-first=F. Robert A. |page=169 |quote=Perhaps the best known one is the NEC 7220. |editor2=Hubbold |editor-first2=Roger J. |editor3=Duce |editor-first3=David A.}} It was the first fully integrated [[VLSI]] (very large-scale integration) [[metal–oxide–semiconductor]] ([[NMOS logic|NMOS]]) graphics display processor for PCs, supported up to [[XGA|1024×1024 resolution]], and laid the foundations for the PC graphics market. 
It was used in a number of graphics cards and was licensed for clones such as the Intel 82720, the first of [[List of Intel graphics processing units|Intel's graphics processing units]].{{Cite web |last=Anderson |first=Marian |date=2018-07-18 |title=Famous Graphics Chips: NEC μPD7220 Graphics Display Controller |url=https://www.computer.org/publications/tech-news/chasing-pixels/famous-graphics-chips/ |access-date=2023-10-17 |website=IEEE Computer Society |language=en-US}} The [[WMS Industries|Williams Electronics]] arcade games ''[[Robotron: 2084]]'', ''[[Joust (video game)|Joust]]'', ''[[Sinistar]]'', and ''[[Bubbles (video game)|Bubbles]]'', all released in 1982, contain custom [[blitter]] chips for operating on 16-color bitmaps.{{cite web|last1=Riddle|first1=Sean|title=Blitter Information|url=https://seanriddle.com/blitter.html|url-status=live|archive-url=https://web.archive.org/web/20151222155908/https://seanriddle.com/blitter.html|archive-date=2015-12-22}}{{cite book |last1=Wolf |first1=Mark J. P. |url=https://books.google.com/books?id=oK3D4i5ldKgC |title=Before the Crash: Early Video Game History |date=June 2012 |publisher=Wayne State University Press |isbn=978-0814337226 |page=185 |language=en-us}}

In 1984, [[Hitachi]] released the ARTC HD63484, the first major [[CMOS]] graphics processor for personal computers. The ARTC could display up to [[4K resolution]] when in [[monochrome]] mode. It was used in a number of graphics cards and terminals during the late 1980s.{{Cite web |last=Anderson |first=Marian |date=2018-10-07 |title=GPU History: Hitachi ARTC HD63484 |url=https://www.computer.org/publications/tech-news/chasing-pixels/gpu-history-hitachi-artc-hd63484/ |access-date=2023-10-17 |website=IEEE Computer Society |language=en-US}}

[[File:Commodore Amiga 1000 - main board - MOS 8367R0-7824.jpg|300px|thumb|MOS 8367R0 – Agnus]] In 1985, the [[Amiga]] was released with a custom graphics chip called [[MOS Technology Agnus|Agnus]], which included a blitter for bitmap manipulation, line drawing, and area fill. It also included a [[coprocessor]] with its own simple instruction set that could manipulate graphics hardware registers in sync with the video beam (e.g. for per-scanline palette switches, [[sprite multiplexing]], and hardware windowing) or drive the blitter.{{Citation needed|date=February 2026}}

Also in 1985, IBM released the [[Professional Graphics Controller]], a rudimentary 3D card with {{resx|640|480}} 256-color graphics that used a dedicated CPU to draw graphics independently of the main system. It was cloned by a number of makers (including [[Matrox]]).

In 1986, [[Texas Instruments]] released the [[TMS34010]], the first fully programmable graphics processor.{{Cite web | url=https://www.computer.org/publications/tech-news/chasing-pixels/Famous-Graphics-Chips-IBMs-professional-graphics-the-PGC-and-8514A/Famous-Graphics-Chips-TI-TMS34010-and-VRAM |title = Famous Graphics Chips: TI TMS34010 and VRAM. The first programmable graphics processor chip | IEEE Computer Society| date=10 January 2019 }} It could run general-purpose code but also had a graphics-oriented instruction set. During 1990–1992, this chip became the basis of the [[Texas Instruments Graphics Architecture]] ("TIGA") [[Windows accelerator]] cards.

[[File:IBM 8514.jpg|thumb|upright=1.2|The [[IBM 8514]] Micro Channel adapter, with memory add-on]] In 1987, the [[IBM 8514]] graphics system was released. It was one of the first video cards for [[IBM PC compatible]]s that implemented [[fixed-function]] 2D primitives in [[electronic hardware]]. [[Sharp Corporation|Sharp]]'s [[X68000]], released in 1987, used a custom graphics chipset{{cite web |url=https://nfggames.com/games/x68k/ |title=X68000 |access-date=2014-09-12 |url-status=live |archive-url=https://web.archive.org/web/20140903010307/https://nfggames.com/games/x68k/ |archive-date=2014-09-03 }} with a 65,536 color palette and hardware support for sprites, scrolling, and multiple playfields.{{cite web |url=https://www.old-computers.com/museum/computer.asp?st=1&c=298 |title=museum ~ Sharp X68000 |publisher=Old-computers.com |access-date=2015-01-28 |url-status=dead |archive-url=https://web.archive.org/web/20150219114323/https://www.old-computers.com/museum/computer.asp?st=1&c=298 |archive-date=2015-02-19 }} It served as a development machine for [[Capcom]]'s [[CP System]] arcade board. Fujitsu's [[FM Towns]] computer, released in 1989, had support for a 16,777,216 color palette.{{cite web|url=https://www.hardcoregaming101.net/JPNcomputers/Japanesecomputers2.htm|title=Hardcore Gaming 101: Retro Japanese Computers: Gaming's Final Frontier|work=hardcoregaming101.net|url-status=live|archive-url=https://web.archive.org/web/20110113214919/https://www.hardcoregaming101.net/JPNcomputers/Japanesecomputers2.htm|archive-date=2011-01-13}}

[[File:IBM VGA 90X8941 on PS55.jpg|thumb|upright=1.2|[[Video Graphics Array|VGA]] section on the motherboard in [[IBM Personal System/55|IBM PS/55]] ]] Also in 1987, [[IBM]] introduced its [[Video Graphics Array]] (VGA) display system, with a maximum resolution of {{resx|640|480}} pixels. Unlike the 8514/A, VGA had no hardware acceleration features. In November 1988, [[NEC|NEC Home Electronics]] announced its creation of the [[Video Electronics Standards Association]] (VESA) to develop and promote a [[Super VGA]] (SVGA) [[computer display standard]] as a successor to VGA. Super VGA enabled [[graphics display resolution]]s up to {{resx|800|600}} [[pixel]]s, a 56% increase in pixel count over VGA.{{cite news|title=NEC Forms Video Standards Group|first=Mark |last=Brownstein|work=[[InfoWorld]] |issn=0199-6649|date=November 14, 1988|volume=10 |issue=46 |page=3|access-date=May 27, 2016|url=https://books.google.com/books?id=wTsEAAAAMBAJ&pg=PT2}}
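The 56% figure follows from comparing total pixel counts rather than the width or height alone. A short check, with hypothetical helper names used only for illustration:

```python
def pixel_count(width, height):
    """Total number of pixels in a width x height display mode."""
    return width * height

def percent_increase(old, new):
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100.0

vga = pixel_count(640, 480)    # 307,200 pixels
svga = pixel_count(800, 600)   # 480,000 pixels
increase = percent_increase(vga, svga)  # 56.25, quoted as 56%
```

Each dimension grows by only 25%, but because the increase applies to both width and height, the pixel count grows by a factor of 1.25 × 1.25 = 1.5625.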

In 1988, SGI sold IRIS workstation graphics with 10–12 Geometry Engines and introduced the [[IrisVision]] add-in board for the IBM Micro Channel bus ([[IBM RS/6000|RS/6000]]), which was also based on the Geometry Engine.

Also in 1988, the first dedicated [[3D computer graphics|polygonal 3D]] graphics boards in arcade machines were introduced with the [[Namco System 21]]{{cite web |title=System 16 – Namco System 21 Hardware (Namco) |url=https://www.system16.com/hardware.php?id=536 |url-status=live |archive-url=https://web.archive.org/web/20150518005344/https://system16.com/hardware.php?id=536 |archive-date=2015-05-18 |work=system16.com}} and [[Taito]] Air System.{{cite web |title=System 16 – Taito Air System Hardware (Taito) |url=https://www.system16.com/hardware.php?id=656 |url-status=live |archive-url=https://web.archive.org/web/20150316214310/https://system16.com/hardware.php?id=656 |archive-date=2015-03-16 |work=system16.com}}

=== 1990s {{anchor|GUI accelerator}}===
[[File:Dstealth32.jpg|thumb|upright=1.2|[[Tseng Labs]] [[Tseng Labs ET4000|ET4000/W32p]]]] [[File:DIAMONDSTEALTH3D2000-top.JPG|thumb|upright=1.2|[[S3 Graphics]] [[S3 ViRGE|ViRGE]]]] [[File:Voodoo3-2000AGP.jpg|thumb|upright=1.2|[[Voodoo3]] 2000 AGP card]] The 1990s again saw considerable advancements in professional workstation 3D graphics hardware from Sun Microsystems, SGI, and others. The introduction of [[OpenGL]] by SGI in 1992 paved the way for standard hardware-independent 3D programming interfaces.[https://developer.nvidia.com/opengl]{{Cite web|title=Silicon Graphics: Gone But Not Forgotten|author=Cal Jeffrey|date=November 10, 2022|work=Techspot|url=https://www.techspot.com/article/2142-silicon-graphics/}} However, by the mid- and late 1990s, professional hardware was slowly being eclipsed by consumer products that offered similar or even better performance, especially with regard to texture mapping, at a lower cost and on platforms familiar to end users.{{cite web|url=https://quantumzeitgeist.com/what-happened-to-the-silicon-graphics-company/|title=What Happened to the Silicon Graphics Company?|date=July 5, 2024|publisher=Quantum Zeitgeist}}

In 1991, [[S3 Graphics]] introduced the ''[[S3 Graphics|S3 86C911]]'', which its designers named after the [[Porsche 911]] as an indication of the performance increase it promised.{{cite journal|title=S3 Video Boards|journal=InfoWorld|date=May 18, 1992|volume=14|issue=20|page=62|url=https://books.google.com/books?id=XlEEAAAAMBAJ&q=S3+86C911&pg=PA62|access-date=July 13, 2015|url-status=live|archive-url=https://web.archive.org/web/20171122191720/https://books.google.com/books?id=XlEEAAAAMBAJ&pg=PA62&dq=S3+86C911&hl=en&sa=X&ved=0CBwQ6AEwAGoVChMI85bTh7XYxgIVyyeUCh3Z_wYp#v=onepage&q=S3%2086C911&f=false|archive-date=November 22, 2017}} The 86C911 spawned a variety of imitators: by 1995, all major PC graphics chip makers had added [[2D computer graphics|2D]] acceleration support to their chips.{{cite journal|title=What the numbers mean|journal=PC Magazine|date=23 February 1993|volume=12|page=128|url=https://books.google.com/books?id=4RN8nH8oZ2QC&pg=128|access-date=29 March 2016|url-status=live|archive-url=https://web.archive.org/web/20170411172653/https://books.google.com/books?id=4RN8nH8oZ2QC&pg=128|archive-date=11 April 2017}}

{{cite web|last1=Singer|first1=Graham|title=The History of the Modern Graphics Processor|url=https://www.techspot.com/article/650-history-of-the-gpu/|publisher=Techspot|access-date=29 March 2016|url-status=live|archive-url=https://web.archive.org/web/20160329140009/https://www.techspot.com/article/650-history-of-the-gpu/|archive-date=29 March 2016}} Fixed-function ''Windows accelerators'' surpassed expensive general-purpose graphics coprocessors in Windows performance, and such coprocessors faded from the PC market.

In the early- and mid-1990s, [[real-time computer graphics|real-time]] 3D graphics became increasingly common in arcade, computer, and console games, which led to increasing public demand for hardware-accelerated 3D graphics. Early examples of mass-market 3D graphics hardware can be found in arcade system boards such as the [[Sega Model 1]], [[Namco System 22]], and [[Sega Model 2]], and the [[History of video game consoles (fifth generation)|fifth-generation video game consoles]] such as the [[Sega Saturn|Saturn]], [[PlayStation (console)|PlayStation]], and [[Nintendo 64]]. Arcade systems such as the Sega Model 2 and [[Silicon Graphics|SGI]] [[SGI Onyx|Onyx]]-based Namco Magic Edge Hornet Simulator in 1993 were capable of hardware T&L ([[transform, clipping, and lighting]]) years before appearing in consumer graphics cards.{{cite web |title=System 16 – Namco Magic Edge Hornet Simulator Hardware (Namco) |url=https://www.system16.com/hardware.php?id=832 |url-status=live |archive-url=https://web.archive.org/web/20140912000953/https://www.system16.com/hardware.php?id=832 |archive-date=2014-09-12 |work=system16.com}}{{cite web |title=MAME – src/mame/video/model2.c |url=https://mamedev.org/source/src/mame/video/model2.c.html |archive-url=https://web.archive.org/web/20130104200822/https://mamedev.org/source/src/mame/video/model2.c.html |archive-date=4 January 2013 }} Another early example is the [[Super FX]] chip, a [[Reduced instruction set computer|RISC]]-based [[ROM cartridge#Use in hardware enhancements|on-cartridge graphics chip]] used in some [[Super Nintendo Entertainment System|SNES]] games, notably ''[[List of Doom ports#Super NES|Doom]]'' and ''[[Star Fox (1993 video game)|Star Fox]]''. Some systems used [[Digital signal processor|DSPs]] to accelerate transformations. 
[[Fujitsu]], which worked on the Sega Model 2 arcade system,{{cite web |title=System 16 – Sega Model 2 Hardware (Sega) |url=https://www.system16.com/hardware.php?id=713 |url-status=live |archive-url=https://web.archive.org/web/20101221001009/https://system16.com/hardware.php?id=713 |archive-date=2010-12-21 |work=system16.com}} began working on integrating T&L into a single [[Integrated circuit|LSI]] solution for use in home computers in 1995;{{cite web |url=https://www.hotchips.org/wp-content/uploads/hc_archives/hc07/3_Tue/HC7.S5/HC7.5.1.pdf |title=3D Graphics Processor Chip Set |access-date=2016-08-08 |url-status=dead |archive-url=https://web.archive.org/web/20161011194640/https://www.hotchips.org/wp-content/uploads/hc_archives/hc07/3_Tue/HC7.S5/HC7.5.1.pdf |archive-date=2016-10-11 }}

{{cite web |url=https://www.fujitsu.com/downloads/MAG/vol33-2/paper08.pdf |title=3D-CG System with Video Texturing for Personal Computers |access-date=2016-08-08 |url-status=dead |archive-url=https://web.archive.org/web/20140906132147/https://www.fujitsu.com/downloads/MAG/vol33-2/paper08.pdf |archive-date=2014-09-06 }} the Fujitsu Pinolite, the first 3D geometry processor for personal computers, was announced in 1997.{{cite web|url=https://pr.fujitsu.com/jp/news/1997/Jul/2e.html|title=Fujitsu Develops World's First Three Dimensional Geometry Processor|work=fujitsu.com|url-status=live|archive-url=https://web.archive.org/web/20140912004938/https://pr.fujitsu.com/jp/news/1997/Jul/2e.html|archive-date=2014-09-12}} The first hardware T&L GPU on [[Home console|home]] [[video game console]]s was the [[Nintendo 64]]'s [[Reality Coprocessor]], released in 1996.{{cite web |title=The Nintendo 64 is one of the greatest gaming devices of all time |url=https://xenol.kinja.com/the-nintendo-64-is-one-of-the-greatest-gaming-devices-o-1722364688 |url-status=dead |archive-url=https://web.archive.org/web/20151118044413/https://xenol.kinja.com/the-nintendo-64-is-one-of-the-greatest-gaming-devices-o-1722364688 |archive-date=2015-11-18 |work=xenol}} In 1997, [[Mitsubishi]] released the [[AMD FirePro|3Dpro/2MP]], a GPU capable of transformation and lighting, for [[workstation]]s and [[Windows NT]] desktops;{{Cite web|url=https://www.thefreelibrary.com/Mitsubishi%27s+3DPro/2mp+Chipset+Sets+New+Records+for+Fastest+3D...-a019465188|title=Mitsubishi's 3DPro/2mp Chipset Sets New Records for Fastest 3D Graphics Accelerator for Windows NT Systems; 3DPro/2mp grabs Viewperf performance lead; other high-end benchmark tests clearly show that 3DPro's performance outdistances all Windows NT competitors.|access-date=2022-02-18|archive-date=2018-11-15|archive-url=https://web.archive.org/web/20181115235023/https://www.thefreelibrary.com/Mitsubishi%27s+3DPro%2f2mp+Chipset+Sets+New+Records+for+Fastest+3D...-a019465188|url-status=dead}} [[ATi]] used it for its [[FireGL|FireGL 4000]] [[graphics card]], released in 1997.{{cite web |author=Vlask |title=VGA Legacy MKIII – Diamond Fire GL 4000 (Mitsubishi 3DPro/2mp) |url=https://vgamuseum.info/index.php/component/k2/item/547-diamond-fire-gl-4000-mitsubishi-3dpro-2mp |url-status=live |archive-url=https://web.archive.org/web/20151118114320/https://vgamuseum.info/index.php/component/k2/item/547-diamond-fire-gl-4000-mitsubishi-3dpro-2mp |archive-date=2015-11-18}}

The term "GPU" was coined by [[Sony]] in reference to the 32-bit [[PlayStation technical specifications|Sony GPU]] (designed by [[Toshiba]]) in the [[PlayStation (console)|PlayStation]] video game console, released in 1994.{{Cite web | url=https://www.computer.org/publications/tech-news/chasing-pixels/is-it-time-to-rename-the-gpu |title = Is it Time to Rename the GPU? | IEEE Computer Society| date=17 July 2018 }}

=== 2000s ===
In October 2002, with the introduction of the [[ATI Technologies|ATI]] ''[[Radeon 9700 core|Radeon 9700]]'' (also known as R300), the world's first [[Direct3D]] 9.0 accelerator, pixel and vertex [[Shader|shaders]] could implement [[Loop (computing)|looping]] and lengthy [[floating point]] math, and were quickly becoming as flexible as CPUs, yet orders of magnitude faster for image-array operations. Pixel shading is often used for [[bump mapping]], which adds texture to make an object look shiny, dull, rough, or even round or extruded.{{cite web | url = https://www.blacksmith-studios.dk/projects/downloads/bumpmapping_using_cg.php | title = Bump Mapping Using CG (3rd Edition) | first = Søren | last = Dreijer | access-date = 2007-05-30 | url-status = dead | archive-url = https://web.archive.org/web/20100120195901/http://www.blacksmith-studios.dk/projects/downloads/bumpmapping_using_cg.php | archive-date = 2010-01-20 }}

With the introduction of the Nvidia [[GeForce 8 series]] and new generic stream processing units, GPUs became more generalized computing devices. [[Parallel computing|Parallel]] GPUs are making computational inroads against the CPU, and a subfield of research, dubbed GPU computing or [[GPGPU]] for ''general purpose computing on GPU'', has found applications in fields as diverse as [[machine learning]],{{cite book|chapter=Large-scale deep unsupervised learning using graphics processors |doi=10.1145/1553374.1553486 |publisher=Dl.acm.org |date=2009-06-14 |title=Proceedings of the 26th Annual International Conference on Machine Learning – ICML '09 |pages=1–8 |last1=Raina |first1=Rajat |last2=Madhavan |first2=Anand |last3=Ng |first3=Andrew Y. |s2cid=392458 |isbn=9781605585161 }} [[oil exploration]], scientific [[image processing]], [[linear algebra]],[https://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.94.1988&rep=rep1&type=pdf "Linear algebra operators for GPU implementation of numerical algorithms"], Kruger and Westermann, International Conference on Computer Graphics and Interactive Techniques, 2005 [[statistics]],{{cite journal |title=ABC-SysBio—approximate Bayesian computation in Python with GPU support |last=Liepe |display-authors=etal |journal=Bioinformatics |year=2010 |volume=26 |issue=14 |pages=1797–1799 |doi=10.1093/bioinformatics/btq278 |pmid=20591907 |pmc=2894518 |url=https://bioinformatics.oxfordjournals.org/content/26/14/1797.full |access-date=2010-10-15 |url-status=dead |archive-url=https://web.archive.org/web/20151105144736/https://bioinformatics.oxfordjournals.org/content/26/14/1797.full |archive-date=2015-11-05 }} [[3D reconstruction]], and [[stock options]] pricing. GPGPUs were the precursors to what is now called a compute shader (e.g. 
[[CUDA]], [[OpenCL]], [[DirectCompute]]). Early GPGPU programs repurposed the graphics pipeline: the input data was passed to an algorithm as texture maps, and the algorithm was executed by drawing a triangle or quad with an appropriate pixel shader. This entailed some overhead, since units such as the [[Rasterization|scan converter]] were involved even though they were not needed, and the triangle itself served only as a means to invoke the pixel shader.
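The texture-based approach described above can be emulated on a CPU to show its shape. The sketch below is an illustration in plain Python, not real graphics API code: `draw_fullscreen_quad`, `add_shader`, and the other names are hypothetical, and the input length is assumed to be a multiple of the texture width.

```python
def to_texture(values, width):
    """Pack a flat list into rows, as data was packed into a 2D texture.
    Assumes len(values) is a multiple of width."""
    return [values[i:i + width] for i in range(0, len(values), width)]

def from_texture(texture):
    """Read the rendered result back into a flat list."""
    return [v for row in texture for v in row]

def add_shader(x, y, textures):
    """Stand-in for a pixel shader: sample both inputs at (x, y) and add."""
    tex_a, tex_b = textures
    return tex_a[y][x] + tex_b[y][x]

def draw_fullscreen_quad(width, height, pixel_shader, textures):
    """Emulate drawing a quad that covers the whole render target:
    the pixel shader runs once for every output pixel."""
    return [[pixel_shader(x, y, textures) for x in range(width)]
            for y in range(height)]

def gpgpu_vector_add(a, b, width):
    """Element-wise addition expressed as a rendering pass."""
    tex_a, tex_b = to_texture(a, width), to_texture(b, width)
    target = draw_fullscreen_quad(width, len(tex_a), add_shader, (tex_a, tex_b))
    return from_texture(target)

result = gpgpu_vector_add([1, 2, 3, 4], [10, 20, 30, 40], width=2)
```

The packing and unpacking steps and the quad drawn solely to trigger the shader correspond to the overhead mentioned above; a compute-oriented programming model lets the per-element function be launched directly on the data.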

Nvidia's CUDA platform, first introduced in 2007,{{Cite book|url=https://books.google.com/books?id=49OmnOmTEtQC|title=CUDA by Example: An Introduction to General-Purpose GPU Programming, Portable Documents|last1=Sanders|first1=Jason|last2=Kandrot|first2=Edward|date=2010-07-19|publisher=Addison-Wesley Professional|isbn=9780132180139|language=en|url-status=live|archive-url=https://web.archive.org/web/20170412034641/https://books.google.com/books?id=49OmnOmTEtQC|archive-date=2017-04-12}} was the earliest widely adopted programming model for GPU computing. OpenCL is an open standard defined by the [[Khronos Group]] that allows for the development of code for both GPUs and CPUs with an emphasis on portability.{{cite web|url=https://www.khronos.org/opencl/|title=OpenCL – The open standard for parallel programming of heterogeneous systems|work=khronos.org|url-status=live|archive-url=https://web.archive.org/web/20110809103233/https://www.khronos.org/opencl/|archive-date=2011-08-09}} OpenCL solutions are supported by Intel, AMD, Nvidia, and ARM, and according to a report in 2011 by [[Evans Data Corporation|Evans Data]], OpenCL had become the second most popular HPC tool.{{Cite web |last=Handy |first=Alex |date=2011-09-28 |title=AMD helps OpenCL gain ground in HPC space |url=https://sdtimes.com/amd/amd-helps-opencl-gain-ground-in-hpc-space/ |access-date=2023-06-04 |website=SD Times |language=en-US}}

=== 2010s ===
In 2010, Nvidia partnered with [[Audi]] to power their cars' dashboards, using the [[Tegra]] GPU to provide increased functionality to cars' navigation and entertainment systems.{{Cite web|url=https://news.softpedia.com/news/NVIDIA-Tegra-Inside-Every-Audi-2010-Vehicle-131529.shtml|title=NVIDIA Tegra Inside Every Audi 2010 Vehicle|last=Teglet|first=Traian|date=8 January 2010|access-date=2016-08-03|url-status=live|archive-url=https://web.archive.org/web/20161004185422/https://news.softpedia.com/news/NVIDIA-Tegra-Inside-Every-Audi-2010-Vehicle-131529.shtml|archive-date=2016-10-04}} Advances in GPU technology in cars helped advance [[autonomous car|self-driving technology]].{{Cite web|url=https://www.digitaltrends.com/cars/nvidia-gpu-driverless-car/|title=School's in session – Nvidia's driverless system learns by watching|date=2016-04-30|language=en-US|access-date=2016-08-03|url-status=live|archive-url=https://web.archive.org/web/20160501203712/https://www.digitaltrends.com/cars/nvidia-gpu-driverless-car/|archive-date=2016-05-01}} AMD's [[Radeon HD 6000 series]] cards were released in 2010, and in 2011 AMD released its 6000M Series discrete GPUs for mobile devices.{{Cite web |title=AMD Radeon HD 6000M series – don't call it ATI! |url=https://www.cnet.com/news/amd-radeon-hd-6000m-series-dont-call-it-ati/ |url-status=live |archive-url=https://web.archive.org/web/20161011195008/https://www.cnet.com/news/amd-radeon-hd-6000m-series-dont-call-it-ati/ |archive-date=2016-10-11 |access-date=2016-08-03 |website=CNET}} The [[Kepler (microarchitecture)|Kepler line]] of graphics cards by Nvidia was released in 2012 and was used in Nvidia's 600 and 700 series cards.
One feature of this GPU microarchitecture was GPU Boost, a technology that raises or lowers the clock speed of a video card according to its power draw.{{Cite web|url=https://www.bit-tech.net/hardware/2012/03/22/nvidia-geforce-gtx-680-2gb-review/4|title=Nvidia GeForce GTX 680 2GB Review|access-date=2016-08-03|url-status=live|archive-url=https://web.archive.org/web/20160911210258/https://www.bit-tech.net/hardware/2012/03/22/nvidia-geforce-gtx-680-2gb-review/4|archive-date=2016-09-11}} Kepler also introduced [[NVENC]] video encoding acceleration technology.

The [[PlayStation 4 technical specifications|PS4]] and [[Xbox One]] were released in 2013; they both used GPUs based on [[Radeon HD 7000 series|AMD's Radeon HD 7850 and 7790]].{{Cite web|url=https://www.extremetech.com/gaming/156273-xbox-720-vs-ps4-vs-pc-how-the-hardware-specs-compare|title=Xbox One vs. PlayStation 4: Which game console is best? |website=ExtremeTech|date=20 November 2015 |access-date=2019-05-13}} Nvidia's Kepler line of GPUs was followed by the [[Maxwell (microarchitecture)|Maxwell]] line, manufactured on the same process. Both lines were manufactured by [[TSMC]] in Taiwan using its 28 nm process. Compared to the earlier 40 nm technology, this manufacturing process allowed a 20 percent boost in performance while drawing less power.{{Cite web|url=https://www.nvidia.com/content/PDF/kepler/NVIDIA-Kepler-GK110-Architecture-Whitepaper.pdf|title=Kepler TM GK110|date=2012|publisher=NVIDIA Corporation|access-date=August 3, 2016|url-status=live|archive-url=https://web.archive.org/web/20161011194501/https://www.nvidia.com/content/PDF/kepler/NVIDIA-Kepler-GK110-Architecture-Whitepaper.pdf|archive-date=October 11, 2016}}{{Cite web|url=https://www.tsmc.com/english/dedicatedFoundry/technology/28nm.htm|title=Taiwan Semiconductor Manufacturing Company Limited|website=www.tsmc.com|access-date=2016-08-03|url-status=live|archive-url=https://web.archive.org/web/20160810092021/https://www.tsmc.com/english/dedicatedFoundry/technology/28nm.htm|archive-date=2016-08-10}} [[Virtual reality headset]]s have high system requirements; manufacturers recommended the GTX 970 and the R9 290X or better at the time of their release.{{Cite web|url=https://www.octopusrift.com/building-a-vive-pc/|title=Building a PC for the HTC Vive|date=2016-06-16|access-date=2016-08-03|url-status=live|archive-url=https://web.archive.org/web/20160729024408/https://www.octopusrift.com/building-a-vive-pc/|archive-date=2016-07-29}}{{Cite web|url=https://www.vive.com/ready/|title=VIVE Ready Computers|publisher=Vive|access-date=2021-07-30|url-status=live|archive-url=https://web.archive.org/web/20160224182407/https://www.htcvive.com/us/product-optimized/|archive-date=2016-02-24}} Cards based on the [[Pascal (microarchitecture)|Pascal]] microarchitecture were released in 2016. The [[GeForce 10 series]] of cards belongs to this generation. They are made using a 16 nm manufacturing process, a smaller node than that of the preceding microarchitectures.{{Cite web|url=https://www.pcworld.com/article/3052312/components-graphics/nvidias-monstrous-pascal-gpu-is-packed-with-cutting-edge-tech-and-15-billion-transistors.html|title=Nvidia's monstrous Pascal GPU is packed with cutting-edge tech and 15 billion transistors|date=5 April 2016|access-date=2016-08-03|url-status=live|archive-url=https://web.archive.org/web/20160731220844/https://www.pcworld.com/article/3052312/components-graphics/nvidias-monstrous-pascal-gpu-is-packed-with-cutting-edge-tech-and-15-billion-transistors.html|archive-date=2016-07-31}}

In 2018, Nvidia launched the RTX 20 series GPUs that added [[Ray tracing (graphics)|ray tracing]] cores to GPUs, allowing real time ray tracing to be performant on mass market hardware.{{cite web |last1=Sarkar |first1=Samit |title=Nvidia RTX 2070, RTX 2080, RTX 2080 Ti GPUs revealed: specs, price, release date |url=https://www.polygon.com/2018/8/20/17760038/nvidia-geforce-rtx-2080-ti-2070-specs-release-date-price-turing |website=Polygon |date=20 August 2018 |access-date=11 September 2019}} [[Polaris 11]] and [[Polaris 10]] GPUs from AMD are fabricated by a 14 nm process. Their release resulted in a substantial increase in the performance per watt of AMD video cards.{{Cite web|url=https://wccftech.com/amd-unveils-polaris-11-10-gpu/|title=AMD RX 480, 470 & 460 Polaris GPUs To Deliver The 'Most Revolutionary Jump In Performance' Yet|date=2016-01-16|language=en-US|access-date=2016-08-03|url-status=live|archive-url=https://web.archive.org/web/20160801225420/https://wccftech.com/amd-unveils-polaris-11-10-gpu/|archive-date=2016-08-01}} AMD also released the Vega GPU series for the high end market as a competitor to Nvidia's high end Pascal cards, also featuring [[High Bandwidth Memory|HBM2]] like the Titan V.{{Citation needed|date=February 2026}}

In 2019, AMD released the successor to their [[Graphics Core Next]] (GCN) microarchitecture/instruction set. Dubbed [[RDNA (microarchitecture)|RDNA]], the first product featuring it was the [[Radeon RX 5000 series]] of video cards.{{cite web|url=https://www.amd.com/en/press-releases/2019-05-26-amd-announces-next-generation-leadership-products-computex-2019-keynote|title=AMD Announces Next-Generation Leadership Products at Computex 2019 Keynote |publisher=AMD |access-date=October 5, 2019}} The company announced that the successor to the RDNA microarchitecture would be incremental (a "refresh"). AMD unveiled the [[Radeon RX 6000 series]], its [[RDNA 2]] graphics cards with support for hardware-accelerated ray tracing.{{cite web|url=https://www.tomshardware.com/news/amds-navi-to-be-refreshed-with-next-gen-rdna-architecture-in-2020|title=AMD to Introduce New Next-Gen RDNA GPUs in 2020, Not a Typical 'Refresh' of Navi|date=2020-01-29|website=[[Tom's Hardware]]|access-date=2020-02-08}}{{cite web|url=https://www.tweaktown.com/news/75066/amd-to-reveal-next-gen-big-navi-rdna-2-graphics-cards-on-october-28/index.html|title=AMD to reveal next-gen Big Navi RDNA 2 graphics cards on October 28|last=Garreffa|first=Anthony|work=TweakTown|date=September 9, 2020|access-date=September 9, 2020}}{{cite web|url=https://www.theverge.com/2020/9/9/21429127/amd-zen-3-cpu-big-navi-gpu-events-october|title=AMD's next-generation Zen 3 CPUs and Radeon RX 6000 'Big Navi' GPU will be revealed next month|last=Lyles|first=Taylor|work=The Verge|date=September 9, 2020|access-date=September 10, 2020}} The product series, launched in late 2020, consisted of the RX 6800, RX 6800 XT, and RX 6900 XT.{{cite web|url=https://www.anandtech.com/show/16150/amd-teases-radeon-rx-6000-card-performance-numbers-aiming-for-3080|title=AMD Teases Radeon RX 6000 Card Performance Numbers: Aiming For 3080?|website=[[AnandTech]]|date=2020-10-08|access-date=2020-10-25|archive-date=2020-10-08|archive-url=https://web.archive.org/web/20201008200930/https://www.anandtech.com/show/16150/amd-teases-radeon-rx-6000-card-performance-numbers-aiming-for-3080|url-status=dead}}{{cite web|url=https://www.anandtech.com/show/16077/amd-announces-ryzen-zen-3-and-radeon-rdna2-presentations-for-october-a-new-journey-begins|title=AMD Announces Ryzen 'Zen 3' and Radeon 'RDNA2' Presentations for October: A New Journey Begins|website=[[AnandTech]]|date=2020-09-09|access-date=2020-10-25|archive-date=2020-09-10|archive-url=https://web.archive.org/web/20200910074941/https://www.anandtech.com/show/16077/amd-announces-ryzen-zen-3-and-radeon-rdna2-presentations-for-october-a-new-journey-begins|url-status=dead}}{{cite web|url=https://www.eurogamer.net/articles/digitalfoundry-2020-10-28-amd-unveils-three-radeon-6000-graphics-cards-with-ray-tracing-and-impressive-performance|title=AMD unveils three Radeon 6000 graphics cards with ray tracing and RTX-beating performance|last=Judd|first=Will|work=Eurogamer|date=October 28, 2020|access-date=October 28, 2020}} The RX 6700 XT, which is based on Navi 22, was launched in early 2021.{{Cite web|last=Mujtaba|first=Hassan|date=2020-11-30|title=AMD Radeon RX 6700 XT 'Navi 22 GPU' Custom Models Reportedly Boost Up To 2.95 GHz|url=https://wccftech.com/amd-radeon-rx-6700-xt-custom-models-boost-up-to-2-95-ghz-220w-tgp/|access-date=2020-12-03|website=Wccftech|language=en-US}}{{Cite web|last=Tyson|first=Mark|date=December 3, 2020|title=AMD CEO keynote scheduled for CES 2020 on 12th January|url=https://hexus.net/tech/news/industry/147068-amd-ceo-keynote-scheduled-ces-2020-12th-january/|access-date=2020-12-03|website=HEXUS}}{{cite web|url=https://www.anandtech.com/show/16402/amd-to-launch-midrange-rdna-2-desktop-graphics-in-first-half-2021|title=AMD to Launch Mid-Range RDNA 2 Desktop Graphics in First Half 2021|last=Cutress|first=Ian|work=AnandTech|date=January 12, 2021|access-date=January 4, 2021|archive-date=January 12, 2021|archive-url=https://web.archive.org/web/20210112170839/https://www.anandtech.com/show/16402/amd-to-launch-midrange-rdna-2-desktop-graphics-in-first-half-2021|url-status=dead}}

The [[PlayStation 5]] and [[Xbox Series X and Series S]] were released in 2020; they both use GPUs based on the RDNA 2 microarchitecture with incremental improvements and different GPU configurations in each system's implementation.{{Cite web|url=https://hothardware.com/news/sony-playstation-5-independent-teardown-ifixit|title=Sony PS5 Gets A Full Teardown Detailing Its RDNA 2 Guts And Glory|last=Funk|first=Ben|website=Hot Hardware|date=December 12, 2020|access-date=January 3, 2021|archive-date=December 12, 2020|archive-url=https://web.archive.org/web/20201212165054/https://hothardware.com/news/sony-playstation-5-independent-teardown-ifixit|url-status=dead}}{{cite web|url=https://www.theverge.com/2020/3/18/21183181/sony-ps5-playstation-5-specs-details-hardware-processor-8k-ray-tracing|title=Sony reveals full PS5 hardware specifications|last=Gartenberg|first=Chaim|work=[[The Verge]]|date=March 18, 2020|access-date=January 3, 2021}}{{Cite web|url=https://www.anandtech.com/show/15546/microsoft-drops-more-xbox-series-x-tech-specs-zen-2-rdna-2-12-tflops-gpu-hdmi-21-a-custom-ssd|archive-url=https://web.archive.org/web/20200224202004/https://www.anandtech.com/show/15546/microsoft-drops-more-xbox-series-x-tech-specs-zen-2-rdna-2-12-tflops-gpu-hdmi-21-a-custom-ssd|url-status=dead|archive-date=February 24, 2020|title=Microsoft Drops More Xbox Series X Tech Specs: Zen 2 + RDNA 2, 12 TFLOPs GPU, HDMI 2.1, & a Custom SSD|last=Smith|first=Ryan|website=AnandTech|access-date=2020-03-19}}

=== 2020s ===
{{See also|AI accelerator}}

In the 2020s, GPUs have been increasingly used for calculations involving [[embarrassingly parallel]] problems, such as the training of [[Artificial neural network#Training|neural networks]] on the enormous datasets needed for artificial intelligence [[large language model]]s. Specialized processing cores on most modern GPUs that are dedicated to [[deep learning]] provide significant [[Floating point operations per second|FLOPS]] performance increases, using 4×4 matrix multiply-accumulate operations. Early implementations, such as Nvidia's [[Volta (microarchitecture)|Volta]] microarchitecture, released in 2017,{{Cite web |title=NVIDIA Volta AI Architecture |url=https://www.nvidia.com/en-us/data-center/volta-gpu-architecture/ |access-date=2026-03-03 |website=NVIDIA |language=en-us}} saw results of up to 128 TFLOPS in some applications.{{cite web |last1=Smith |first1=Ryan |title=NVIDIA Volta Unveiled: GV100 GPU and Tesla V100 Accelerator Announced |url=https://www.anandtech.com/show/11367/nvidia-volta-unveiled-gv100-gpu-and-tesla-v100-accelerator-announced |archive-url=https://web.archive.org/web/20170511021038/http://www.anandtech.com/show/11367/nvidia-volta-unveiled-gv100-gpu-and-tesla-v100-accelerator-announced |url-status=dead |archive-date=May 11, 2017 |website=AnandTech |access-date=16 August 2018}}
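
The arithmetic performed by such a core on one 4×4 tile can be sketched in plain Python. This is only an illustration of the operation, assuming the multiply-accumulate form D = A × B + C; the function name and sample matrices are hypothetical, and real hardware carries out the whole tile in dedicated circuitry rather than in nested loops.

```python
# Illustrative sketch only: models the arithmetic of one 4x4 matrix
# multiply-accumulate step, D = A x B + C, in plain Python.
# The function name and the sample values are hypothetical.

N = 4  # tile size


def matmul_accumulate_4x4(a, b, c):
    """Return D = A x B + C for 4x4 matrices given as lists of rows."""
    d = [[0.0] * N for _ in range(N)]
    for i in range(N):
        for j in range(N):
            acc = c[i][j]                 # start from the accumulator value
            for k in range(N):
                acc += a[i][k] * b[k][j]  # one multiply-add per k
            d[i][j] = acc
    return d


identity = [[1.0 if i == j else 0.0 for j in range(N)] for i in range(N)]
a = [[float(i * N + j) for j in range(N)] for i in range(N)]
c = [[1.0] * N for _ in range(N)]

d = matmul_accumulate_4x4(a, identity, c)  # A x I + C, so every d[i][j] = a[i][j] + 1
multiply_adds = N * N * N                  # multiply-adds needed for one tile
```

One tile requires 4 × 4 × 4 = 64 multiply-adds, which is why performing a whole tile per step raises the FLOPS figure so sharply compared with issuing scalar operations one at a time.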

Since then, AI acceleration cores have been widely adopted in consumer and workstation microarchitectures, starting with the Tensor cores of Nvidia's [[Turing (microarchitecture)|Turing]] microarchitecture in 2018. Originally used for [[Deep Learning Super Sampling]] to enhance gaming performance and improve image quality, they have since been used in Nvidia's [https://www.nvidia.com/en-us/geforce/broadcasting/broadcast-app/ Broadcast] software to provide AI-powered effects such as voice filtering and video noise removal.

AMD first implemented its equivalent "Matrix" cores for consumers in its [[RDNA 3]] architecture, while Intel has implemented its equivalent "XMX" cores in all of its [[Intel Arc|Arc]] GPUs, starting with the [[Intel Arc#Alchemist|Alchemist]] microarchitecture.

== GPU companies ==
{{main|List of graphics chips and card companies}}

Many companies have produced GPUs under a number of brand names. In 2009,{{Needs update|date=October 2023|reason=This claim was made in 2009, and updated statistics are available}} [[Intel Corporation|Intel]], [[Nvidia]], and [[Advanced Micro Devices|AMD]]/[[ATI Technologies|ATI]] were the market share leaders, with 49.4%, 27.8%, and 20.6% market share respectively. In addition, [[Matrox]]{{cite web |title=Matrox Graphics – Products – Graphics Cards |url=https://www.matrox.com/graphics/en/products/graphics_cards |url-status=live |archive-url=https://web.archive.org/web/20140205042218/https://www.matrox.com/graphics/en/products/graphics_cards/ |archive-date=2014-02-05 |access-date=2014-01-21 |publisher=Matrox.com}} produces GPUs. Chinese companies such as [[Jingjia Micro]] have also produced GPUs for the domestic market although in terms of worldwide sales, they lag behind market leaders.{{Cite web |last=Pan |first=Che |date=31 July 2023 |title=Blacklisted Jingjia Micro to develop GPUs in Wuxi in latest chip self sufficiency move |url=https://www.scmp.com/tech/big-tech/article/3229526/tech-war-us-sanctioned-chip-maker-jingjia-micro-develop-gpus-wuxi-latest-sign-chinas-self |access-date=20 January 2025 |website=South China Morning Post |language=en}}

==Computational functions==
{{anchor|Compute unit|Streaming multiprocessor}}Several factors of GPU construction affect the performance of the card for real-time rendering, such as the size of the connector pathways produced in [[semiconductor device fabrication]], the [[clock signal]] frequency, and the number and size of various on-chip memory [[CPU cache|caches]]. Performance is also affected by the number of streaming multiprocessors (SM) for Nvidia GPUs, compute units (CU) for AMD GPUs, or Xe cores for Intel Xe-based GPUs, which describe the number of on-silicon processor core units within the GPU chip that perform the core calculations, typically working in parallel with other SM/CUs on the GPU. GPU performance is typically measured in floating point operations per second ([[FLOPS]]); GPUs in the 2010s and 2020s typically deliver performance measured in teraflops (TFLOPS). This is an estimated performance measure, as other factors can affect the actual display rate.{{cite web | url = https://www.extremetech.com/gaming/269335-how-graphics-cards-work | title = How Do Graphics Cards Work? | first = Joel | last = Hruska | date = February 10, 2021 | access-date = July 17, 2021 | work = [[Extreme Tech]] }}
[[Image:AMD HD5470 GPU.JPG|thumb|The ATI HD5470 GPU (above, with copper [[heatpipe]] attached) features [[UVD]] 2.1 which enables it to decode AVC and VC-1 video formats.]]

===2D graphics APIs===
An earlier GPU may support one or more 2D graphics APIs for 2D acceleration, such as [[Graphics Device Interface|GDI]] and [[DirectDraw]].{{citation |url=https://theretroweb.com/chip/documentation/cl-gd5446-smol-645654db1ae03286068933.pdf |title=CL-GD5446 64-bit VisualMedia Accelerator Preliminary Data Book |publisher=Cirrus Logic |date=November 1996 |access-date=30 January 2024 |via=The Datasheet Archive}}

==GPU forms==
===Terminology===
In the 1970s, the term "GPU" originally stood for ''graphics processor unit'' and described a programmable processing unit working independently from the CPU that was responsible for graphics manipulation and output.{{cite book |last1=Barron |first1=E. T. |title=Conference record of the 6th annual workshop on Microprogramming – MICRO 6 |last2=Glorioso |first2=R. M. |date=September 1973 |isbn=9781450377836 |pages=122–128 |language=en |chapter=A micro controlled peripheral processor |doi=10.1145/800203.806247 |doi-access=free |s2cid=36942876}}{{cite journal |last1=Levine |first1=Ken |title=Core standard graphic package for the VGI 3400 |journal=ACM SIGGRAPH Computer Graphics |date=August 1978 |volume=12 |issue=3 |pages=298–300 |doi=10.1145/965139.807405 |url=https://dl.acm.org/doi/abs/10.1145/965139.807405|url-access=subscription }} In 1994, [[Sony]] used the term (with the meaning ''graphics processing unit'') in reference to the [[PlayStation (console)|PlayStation]] console's [[Toshiba]]-designed [[PlayStation technical specifications#Graphics processing unit (GPU)|Sony GPU]]. The term was popularized in 1999 by [[Nvidia]], which marketed the [[GeForce 256]] as "the world's first GPU".{{cite web|title=NVIDIA Launches the World's First Graphics Processing Unit: GeForce 256|url=https://www.nvidia.com/object/IO_20020111_5424.html|publisher=Nvidia|access-date=28 March 2016|date=31 August 1999|url-status=live|archive-url=https://web.archive.org/web/20160412035751/https://www.nvidia.com/object/IO_20020111_5424.html|archive-date=12 April 2016}} It was presented as a "single-chip [[Processor (computing)|processor]] with integrated [[Transform, clipping, and lighting|transform, lighting, triangle setup/clipping]], and rendering engines".{{cite web|title=Graphics Processing Unit (GPU)|date=16 December 2009|url=https://www.nvidia.com/object/gpu.html|publisher=Nvidia|access-date=29 March 2016|url-status=live|archive-url=https://web.archive.org/web/20160408122443/https://www.nvidia.com/object/gpu.html|archive-date=8 April 2016}} Rival [[ATI Technologies]] coined the term "visual processing unit" (VPU) with the release of the [[R300|Radeon 9700]] in 2002.{{cite web|last1=Pabst|first1=Thomas|title=ATi Takes Over 3D Technology Leadership With Radeon 9700|url=https://www.tomshardware.com/reviews/ati-takes-3d-technology-leadership-radeon-9700,491-3.html|publisher=Tom's Hardware|access-date=29 March 2016|date=18 July 2002}} The [[AMD]] Alveo MA35D, introduced in 2023, features dual VPUs, each made using a [[5 nm process]].{{cite web| title=AMD Rolls Out 5 nm ASIC-based Accelerator for the Interactive Streaming Era| author=Child, J.| url=https://www.allaboutcircuits.com/news/amd-rolls-out-5-nm-asic-based-accelerator-for-the-interactive-streaming-era| publisher=EETech Media| date=6 April 2023| access-date=24 December 2023}}

In personal computers, there are two main forms of GPUs: dedicated graphics (also called discrete graphics) and integrated graphics (also called shared graphics solutions, integrated graphics processors (IGP), or unified memory architecture (UMA)).{{cite web|title=Help Me Choose: Video Cards |url=https://www.dell.com/learn/us/en/19/help-me-choose/hmc-video-card-inspiron-lt |archive-url=https://archive.today/20160909115302/http://www.dell.com/learn/us/en/19/help-me-choose/hmc-video-card-inspiron-lt?stp=1 |access-date=2016-09-17 |archive-date=2016-09-09 |publisher=[[Dell]] |url-status=dead }}

===Dedicated graphics processing unit===
{{see also|Video card}}
''Dedicated graphics processing units'' use [[random-access memory|RAM]] that is dedicated to the GPU rather than relying on the computer's main system memory. This RAM, such as [[GDDR SDRAM]], is usually specially selected for the expected serial workload of the graphics card. Systems with dedicated ''discrete'' GPUs were sometimes called "DIS" systems, as opposed to "UMA" systems.{{cite web| title=Nvidia Optimus documentation for Linux device driver| url=https://nouveau.freedesktop.org/Optimus.html| publisher=freedesktop| date=13 November 2023| access-date=24 December 2023}}

Technologies such as [[Scan-Line Interleave]] by [[3dfx]], [[Scalable Link Interface]] (SLI) and [[NVLink]] by Nvidia and [[ATI CrossFire|CrossFire]] by AMD allow multiple GPUs to draw images simultaneously for a single screen, increasing the processing power available for graphics. These technologies, however, are increasingly uncommon; most games do not fully use multiple GPUs, as most users cannot afford them.{{cite web| title=Crossfire and SLI market is just 300.000 units| author=Abazovic, F.| url=https://www.fudzilla.com/news/graphics/38134-crossfire-and-sli-market-is-just-300-000-units| publisher=fudzilla| date=3 July 2015| access-date=24 December 2023}}{{Cite web | url=https://thetechaltar.com/is-multi-gpu-dead/ |title = Is Multi-GPU Dead?|date = 7 January 2018}}{{Cite web | url=https://www.techradar.com/news/nvidia-sli-and-amd-crossfire-is-dead-but-should-we-mourn-multi-gpu-gaming | title=Nvidia SLI and AMD CrossFire is dead – but should we mourn multi-GPU gaming? | TechRadar| date=24 August 2019}}{{better source needed|date=January 2026}} Multiple GPUs are still used on supercomputers (such as in [[Summit (supercomputer)|Summit]]); on workstations to accelerate video (processing multiple videos at once){{Cite web | url=https://devblogs.nvidia.com/nvidia-ffmpeg-transcoding-guide/ | title=NVIDIA FFmpeg Transcoding Guide| date=24 July 2019}}{{cite web |url=https://documents.blackmagicdesign.com/ConfigGuides/DaVinci_Resolve_15_Mac_Configuration_Guide.pdf |title=Hardware Selection and Configuration Guide DaVinci Resolve 15 |publisher=BlackMagic Design |date=2018 |access-date=31 May 2022}}{{Cite web|url=https://www.pugetsystems.com/recommended/Recommended-Systems-for-DaVinci-Resolve-187/Hardware-Recommendations|title=Recommended System: Recommended Systems for DaVinci Resolve|website=Puget Systems}}{{Cite web | url=https://helpx.adobe.com/x-productkb/multi/gpu-acceleration-and-hardware-encoding.html |title = GPU Accelerated Rendering and Hardware Encoding}} and 3D rendering;{{Cite web |date=20 August 2019 |title=V-Ray Next Multi-GPU Performance Scaling |url=https://www.pugetsystems.com/labs/articles/V-Ray-Next-Multi-GPU-Performance-Scaling-1559/ }}{{Cite web |title=FAQ | GPU-accelerated 3D rendering software | Redshift |url=https://www.redshift3d.com/support/faq |access-date=2020-03-03 |archive-date=2020-04-11 |archive-url=https://web.archive.org/web/20200411151101/https://www.redshift3d.com/support/faq |url-status=dead }}{{Cite news |title=OctaneRender 2020™ Preview is here! |newspaper=Otoy |url=https://home.otoy.com/render/octane-render/faqs/ }}{{Cite web |date=8 April 2019 |title=Exploring Performance with Autodesk's Arnold Renderer GPU Beta |url=https://techgage.com/article/autodesk-arnold-render-gpu-beta-performance/ }}{{Cite web |title=GPU Rendering – Blender Manual |url=https://docs.blender.org/manual/en/latest/render/cycles/gpu_rendering.html }} for [[visual effects]] (VFX);{{Cite web | url=https://www.chaosgroup.com/vray/nuke | title=V-Ray for Nuke – Ray Traced Rendering for Compositors | Chaos Group}}{{Cite web | url=https://www.foundry.com/products/nuke/requirements |title = System Requirements | Nuke | Foundry}} [[General-purpose computing on graphics processing units|general purpose graphics processing unit]] (GPGPU) workloads and for simulations,{{Cite web | url=https://foldingathome.org/faqs/gpu2-common/frequently-asked-questions-common-ati-nvidia-gpu2-clients-2/multi-gpu-support/ |title = What about multi-GPU support? – Folding@home}} and in AI to expedite training, as is the case with Nvidia's lineup of DGX workstations and servers, Tesla GPUs, and Intel's Ponte Vecchio GPUs.{{Citation needed|date=February 2026}}

==={{anchor|INTEGRATED|Integrated graphics|iGPU}}Integrated graphics processing unit===
{{stack begin}}
[[File:Motherboard diagram.svg|thumb|upright=1|The position of an integrated GPU in a northbridge/southbridge system layout.]]
[[File:A790GXH-128M-Motherboard.jpg|thumb|An [[ASRock]] motherboard with integrated graphics, which has HDMI, VGA and DVI-out ports.]]
{{stack end}}
''Integrated graphics processing units'' (IGPU), also called ''integrated graphics'', ''shared graphics solutions'', ''integrated graphics processors'' (IGP), or ''unified memory architectures'' (UMA) use a portion of a computer's system RAM rather than dedicated graphics memory. IGPs can be integrated onto a motherboard as part of its [[Northbridge (computing)|northbridge]] chipset,{{Cite web|url=https://www.tomshardware.com/picturestory/693-intel-graphics-evolution.html|title = Evolution of Intel Graphics: I740 to Iris Pro|date = 4 February 2017}} or on the same [[die (integrated circuit)]] as the CPU, as with the [[AMD APU|Accelerated Processing Unit]] (AMD APU) or [[Intel HD Graphics]]. On certain motherboards,{{cite web|url=https://www.gigabyte.com/products/product-page.aspx?pid=3785#ov|title=GA-890GPA-UD3H overview|url-status=dead|archive-url=https://web.archive.org/web/20150415095629/https://www.gigabyte.com/products/product-page.aspx?pid=3785#ov|archive-date=2015-04-15|access-date=2015-04-15|website=GIGABYTE}} AMD's IGPs can use dedicated sideport memory: a separate fixed block of high performance memory that is dedicated for use by the GPU. {{As of|2007|alt=As of early 2007}}, computers with integrated graphics accounted for about 90% of all PC shipments.{{cite web |author=Key |first=Gary |title=AnandTech – μATX Part 2: Intel G33 Performance Review |url=https://www.anandtech.com/mb/showdoc.aspx?i=3111&p=23 |url-status=dead |archive-url=https://web.archive.org/web/20080531045027/https://www.anandtech.com/mb/showdoc.aspx?i=3111&p=23 |archive-date=2008-05-31 |work=anandtech.com}}{{update inline|date=February 2013}} They are less costly to implement than dedicated graphics processing, but tend to be less capable. Historically, integrated processing was considered unfit for 3D games or graphically intensive programs but could run less intensive programs such as Adobe Flash. Examples of such IGPs would be offerings from SiS and VIA circa 2004.{{cite web |author=Tscheblockov |first=Tim |title=Xbit Labs: Roundup of 7 Contemporary Integrated Graphics Chipsets for Socket 478 and Socket A Platforms |url=https://www.xbitlabs.com/articles/chipsets/display/int-chipsets-roundup.html |url-status=dead |archive-url=https://web.archive.org/web/20070526124817/https://www.xbitlabs.com/articles/chipsets/display/int-chipsets-roundup.html |archive-date=2007-05-26 |access-date=2007-06-03 |website=X-bit labs}} However, modern integrated graphics processors such as the [[AMD Accelerated Processing Unit]] and [[Intel Graphics Technology]] (including HD, UHD, Iris, Iris Pro, Iris Plus, and [[Intel Xe#Xe-LP (Low Power)|Xe-LP]]) can handle 2D graphics or low-stress 3D graphics.{{Citation needed|date=February 2026}}

Because GPU computations are memory-intensive, integrated processing may compete with the CPU for relatively slow system RAM, as it has minimal or no dedicated video memory. IGPs use system memory with bandwidth up to a current maximum of 128 gigabytes per second, whereas a discrete graphics card may have a bandwidth{{cite web | title=GPU Memory Bandwidth Evolution 2007-2025: NVIDIA AMD Intel | url=https://gpus.axiomgaming.net/memory-bandwidth-statistics | website=Axiom Gaming | access-date=17 August 2025}} of more than 1000 gigabytes per second between its [[video random access memory]] (VRAM) and GPU core. This [[memory bus]] bandwidth can limit the performance of the GPU, though [[Multi-channel memory architecture|multi-channel memory]] can mitigate this deficiency.{{cite web |last1=Coelho |first1=Rafael |title=Does dual-channel memory make difference on integrated video performance? |url=https://www.hardwaresecrets.com/dual-channel-memory-make-difference-integrated-video-performance/ |website=Hardware Secrets |access-date=4 January 2019 |date=18 January 2016}} Older integrated graphics chipsets lacked hardware [[Transform, clipping, and lighting|transform and lighting]], but newer ones include it.{{cite web |author=Sanford |first=Bradley |title=Integrated Graphics Solutions for Graphics-Intensive Applications |url=https://www.amd.com/us-en/assets/content_type/white_papers_and_tech_docs/Integrated_Graphics_Solutions_white_paper_rev61.pdf |url-status=live |archive-url=https://web.archive.org/web/20071128165723/https://www.amd.com/us-en/assets/content_type/white_papers_and_tech_docs/Integrated_Graphics_Solutions_white_paper_rev61.pdf |archive-date=2007-11-28 |access-date=2007-09-02 |website=AMD}}{{cite web |author=Sanford |first=Bradley |title=Integrated Graphics Solutions for Graphics-Intensive Applications |url=https://www.techspot.com/news/46773-amd-announces-radeon-hd-7970-claims-fastest-gpu-title.html |url-status=live 
|archive-url=https://web.archive.org/web/20120107200529/https://www.techspot.com/news/46773-amd-announces-radeon-hd-7970-claims-fastest-gpu-title.html |archive-date=2012-01-07 |access-date=2007-09-02 |website=TechSpot}}
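
As a rough worked example of where bandwidth figures of this kind come from, theoretical peak memory bandwidth is commonly estimated as the bus width multiplied by the transfer rate. The sketch below assumes that simple model; the function name and the three configurations are hypothetical illustrations, not figures taken from the sources cited above.

```python
def peak_bandwidth_gb_s(bus_width_bits, transfers_per_second):
    """Theoretical peak bandwidth in gigabytes per second (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfers_per_second / 1e9


# Hypothetical system RAM: 64-bit channels at 5600 megatransfers per second.
single_channel = peak_bandwidth_gb_s(64, 5600e6)
dual_channel = peak_bandwidth_gb_s(2 * 64, 5600e6)  # two channels double the width

# Hypothetical discrete card: 384-bit memory bus at 21 gigatransfers per second.
discrete = peak_bandwidth_gb_s(384, 21e9)

for name, value in [("single channel", single_channel),
                    ("dual channel", dual_channel),
                    ("discrete card", discrete)]:
    print(f"{name}: {value:.1f} GB/s")
```

Under these assumptions the second memory channel doubles the bandwidth available to an integrated GPU, while the much wider and faster bus of the discrete card still gives it roughly an order of magnitude more.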

On systems with "Unified Memory Architecture" (UMA), including modern AMD processors with integrated graphics,{{Cite web |last=Shimpi |first=Anand Lal |title=AMD Outlines HSA Roadmap: Unified Memory for CPU/GPU in 2013, HSA GPUs in 2014 |url=https://www.anandtech.com/show/5493/amd-outlines-hsa-roadmap-unified-memory-for-cpugpu-in-2013-hsa-gpus-in-2014 |archive-url=https://web.archive.org/web/20120204102111/http://www.anandtech.com/show/5493/amd-outlines-hsa-roadmap-unified-memory-for-cpugpu-in-2013-hsa-gpus-in-2014 |url-status=dead |archive-date=February 4, 2012 |access-date=2024-01-08 |website=www.anandtech.com}} modern Intel processors with integrated graphics,{{Cite web |last=Lake |first=Adam T. |title=Getting the Most from OpenCL™ 1.2: How to Increase Performance by... |url=https://www.intel.com/content/www/us/en/developer/articles/training/getting-the-most-from-opencl-12-how-to-increase-performance-by-minimizing-buffer-copies-on-intel-processor-graphics.html |access-date=2024-01-08 |website=Intel |language=en}} Apple processors, the PS5 and Xbox Series (among others), the CPU cores and the GPU block share the same pool of RAM and memory address space.{{Citation needed|date=February 2026}}

==={{Anchor|SP-GPGPU}}Stream processing and general purpose GPUs (GPGPU)===
{{Main|GPGPU|Stream processing}}

It is common to use a [[GPGPU|general purpose graphics processing unit]] (GPGPU) as a modified form of [[stream processing|stream processor]] or a [[vector processor]], running [[compute kernel]]s. This turns the massive computational power of a modern graphics accelerator's shader pipeline into general-purpose computing power. In certain applications requiring massive vector operations, this can yield several orders of magnitude higher performance than a conventional CPU. The two largest discrete GPU designers, [[AMD]] and [[Nvidia]], are pursuing this approach with an array of applications.{{as of?|date=January 2026}} Nvidia and AMD collaborated with [[Stanford University]] to create a GPU-based client for the [[Folding@home]] distributed computing project for protein folding calculations. In certain circumstances, the GPU calculates forty times faster than the CPUs traditionally used by such applications.{{cite web |author=Murph |first=Darren |title=Stanford University tailors Folding@home to GPUs |date=29 September 2006 |url=https://www.engadget.com/2006/09/29/stanford-university-tailors-folding-home-to-gpus/ |url-status=live |archive-url=https://web.archive.org/web/20071012000648/https://www.engadget.com/2006/09/29/stanford-university-tailors-folding-home-to-gpus/ |archive-date=2007-10-12 |access-date=2007-10-04}}{{cite web |author=Houston |first=Mike |title=Folding@Home – GPGPU |url=https://graphics.stanford.edu/~mhouston/ |url-status=live |archive-url=https://web.archive.org/web/20071027130116/https://graphics.stanford.edu/~mhouston/ |archive-date=2007-10-27 |access-date=2007-10-04}}
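
The compute-kernel model mentioned above can be sketched as follows: a small function is written for a single element index, and the runtime applies it to every index independently. This is a minimal sequential stand-in in Python, not GPU code; the names <code>saxpy_kernel</code> and <code>launch</code> are hypothetical, and a real GPU runtime would execute the indices in parallel across its calculation units.

```python
def saxpy_kernel(i, alpha, x, y, out):
    """Kernel body for one element index, analogous to one GPU thread."""
    out[i] = alpha * x[i] + y[i]


def launch(kernel, n, *args):
    """Sequential stand-in for launching a kernel over n independent indices."""
    for i in range(n):
        kernel(i, *args)


x = [1.0, 2.0, 3.0, 4.0]
y = [10.0, 20.0, 30.0, 40.0]
out = [0.0] * len(x)

launch(saxpy_kernel, len(x), 2.0, x, y, out)  # out[i] = 2 * x[i] + y[i]
```

Because no index reads a value written by another, the iterations can run in any order or all at once, which is the property that lets this style of program map onto a shader pipeline.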

GPU-based high performance computers play a significant role in large-scale modelling. Three of the ten most powerful supercomputers in the world take advantage of GPU acceleration.{{cite web |title=Top500 List – June 2012 | TOP500 Supercomputer Sites |url=https://www.top500.org/list/2012/06/100 |url-status=dead |archive-url=https://web.archive.org/web/20140113044747/https://www.top500.org/list/2012/06/100/ |archive-date=2014-01-13 |access-date=2014-01-21 |publisher=Top500.org}}{{Update inline|date=January 2026}}

Since 2005 there has been interest in using the performance offered by GPUs for [[evolutionary computation]] in general, and for accelerating the [[Fitness (genetic algorithm)|fitness]] evaluation in [[genetic programming]] in particular.{{citation needed|date=January 2026}} Most approaches compile [[linear genetic programming|linear]] or [[genetic programming|tree programs]] on the host PC and transfer the executable to the GPU to be run. Typically, a performance advantage is obtained only by running the single active program on many example problems in parallel, using the GPU's [[single instruction, multiple data]] (SIMD) architecture.{{cite web |author=Nickolls |first=John |title=Stanford Lecture: Scalable Parallel Programming with CUDA on Manycore GPUs |url=https://www.youtube.com/watch?v=nlGnKPpOpbE |url-status=live |archive-url=https://web.archive.org/web/20161011195103/https://www.youtube.com/watch?v=nlGnKPpOpbE |archive-date=2016-10-11 |website=[[YouTube]]|date=July 2008}}{{cite web |author=Harding |first1=S. |last2=Banzhaf |first2=W. |title=Fast genetic programming on GPUs |url=https://www.cs.bham.ac.uk/~wbl/biblio/gp-html/eurogp07_harding.html |url-status=live |archive-url=https://web.archive.org/web/20080609231021/https://www.cs.bham.ac.uk/~wbl/biblio/gp-html/eurogp07_harding.html |archive-date=2008-06-09 |access-date=2008-05-01}}{{needs update|date=January 2026}} Substantial acceleration can also be obtained by transferring the programs to the GPU uncompiled and interpreting them there.{{cite web |author=Langdon |first1=W. |last2=Banzhaf |first2=W. |title=A SIMD interpreter for Genetic Programming on GPU Graphics Cards |url=https://www.cs.bham.ac.uk/~wbl/biblio/gp-html/langdon_2008_eurogp.html |url-status=live |archive-url=https://web.archive.org/web/20080609231026/https://www.cs.bham.ac.uk/~wbl/biblio/gp-html/langdon_2008_eurogp.html |archive-date=2008-06-09 |access-date=2008-05-01}}

*V. Garcia and E. Debreuve and M. Barlaud. [[arxiv:0804.1448|Fast k nearest neighbor search using GPU]]. In Proceedings of the CVPR Workshop on Computer Vision on GPU, Anchorage, Alaska, June 2008.
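The data-parallel fitness evaluation described above for genetic programming can be sketched as follows. This is a CPU-only illustration in plain Python under stated assumptions, not the method of any cited work: the instruction format, the register layout, and the names <code>run_program</code> and <code>fitness</code> are all hypothetical. Each instruction of a single linear program is decoded once and then applied to every fitness case in lockstep, which mirrors how a SIMD machine issues one instruction across many data lanes.

```python
import operator
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical instruction format: (opcode, destination, source_a, source_b),
# where the last three fields are register indices.
Instruction = Tuple[str, int, int, int]

OPS: Dict[str, Callable[[float, float], float]] = {
    "add": operator.add,
    "sub": operator.sub,
    "mul": operator.mul,
}


def run_program(program: Sequence[Instruction],
                cases: Sequence[Sequence[float]],
                n_registers: int = 4) -> List[float]:
    """Interpret one program over all fitness cases in lockstep.

    registers[r][c] holds register r for fitness case c. The inputs of each
    case are loaded into the leading registers (assumes n_registers is at
    least the number of inputs); the result is read from register 0.
    """
    n_cases = len(cases)
    registers = [[0.0] * n_cases for _ in range(n_registers)]
    for c, inputs in enumerate(cases):
        for r, value in enumerate(inputs):
            registers[r][c] = value
    for opcode, dst, a, b in program:
        op = OPS[opcode]  # decoded once per instruction, not once per case
        registers[dst] = [op(u, v) for u, v in zip(registers[a], registers[b])]
    return registers[0]


def fitness(program: Sequence[Instruction],
            cases: Sequence[Sequence[float]],
            targets: Sequence[float]) -> float:
    """Sum of squared errors over all fitness cases (lower is better)."""
    outputs = run_program(program, cases)
    return sum((o - t) ** 2 for o, t in zip(outputs, targets))


if __name__ == "__main__":
    # Program computing x*x + x, with x supplied in register 0.
    prog = [("mul", 1, 0, 0), ("add", 0, 1, 0)]
    print(run_program(prog, [[1.0], [2.0], [3.0]]))  # [2.0, 6.0, 12.0]
```

On a GPU, the inner list comprehension would correspond to the parallel lanes, so the cost of decoding an instruction is shared across all fitness cases.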

===External GPU (eGPU)=== A GPU can be attached to some external bus of a notebook. [[PCI Express]] is the only bus used for this purpose.{{as of?|date=January 2026}} The port may be, for example, an [[ExpressCard]] or [[PCI Express#PCI Express Mini Card|mPCIe]] port (PCIe ×1, up to 5 or 2.5 gigabits per second respectively), a [[Thunderbolt (interface)|Thunderbolt]] 1, 2, or 3 port (PCIe ×4, up to 10, 20, or 40 gigabits per second respectively), a [[Thunderbolt_(interface)#USB4|USB4 port with Thunderbolt compatibility]], or an [[OCuLink]] port. Those ports are only available on certain notebook systems.{{cite web |author=Mohr |first=Neil |title=How to make an external laptop graphics adaptor |url=https://www.techradar.com/news/computing-components/graphics-cards/how-to-make-an-external-laptop-graphics-adaptor-915616 |url-status=live |archive-url=https://web.archive.org/web/20170626052950/https://www.techradar.com/news/computing-components/graphics-cards/how-to-make-an-external-laptop-graphics-adaptor-915616 |archive-date=2017-06-26 |work=TechRadar}} eGPU enclosures include their own power supply (PSU), because powerful GPUs can consume hundreds of watts.{{Cite web | url=https://www.gamingscan.com/best-external-graphics-card/ |title = Best External Graphics Card 2020 (EGPU) [The Complete Guide]|date = 16 March 2020}}
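The link rates quoted above can be turned into rough transfer-time estimates. The sketch below uses only the nominal figures from the text and, as a simplifying assumption, ignores line encoding and protocol overhead, so real throughput is lower. The helper names and the 1 GB payload are hypothetical.

```python
# Nominal link rates quoted in the text, in gigabits per second.
LINK_RATES_GBIT_S = {
    "mPCIe (PCIe x1)": 2.5,
    "ExpressCard (PCIe x1)": 5.0,
    "Thunderbolt 1": 10.0,
    "Thunderbolt 2": 20.0,
    "Thunderbolt 3": 40.0,
}


def nominal_gigabytes_per_second(gbit_s: float) -> float:
    """Convert gigabits per second to decimal gigabytes per second."""
    return gbit_s / 8.0


def transfer_seconds(payload_gigabytes: float, gbit_s: float) -> float:
    """Idealized time to move a payload over a link (overhead ignored)."""
    return payload_gigabytes / nominal_gigabytes_per_second(gbit_s)


if __name__ == "__main__":
    payload_gb = 1.0  # hypothetical payload, e.g. a batch of textures
    for name, rate in LINK_RATES_GBIT_S.items():
        seconds = transfer_seconds(payload_gb, rate)
        print(f"{name}: {seconds:.2f} s per {payload_gb:.0f} GB")
```

Under these assumptions, moving the same payload takes sixteen times longer over an mPCIe link than over Thunderbolt 3, which illustrates why the choice of port matters for an external GPU.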

==Energy efficiency== {{excerpt|Performance per watt|GPU efficiency}}

==Sales== In 2013, 438.3 million GPUs were shipped globally, and the forecast for 2014 was 414.2 million.{{cite web|url=https://www.tgdaily.com/enterprise/126591-graphics-chips-market-is-showing-some-life|title=Graphics chips market is showing some life|date=August 20, 2014|publisher=TG Daily|access-date=August 22, 2014|url-status=live|archive-url=https://web.archive.org/web/20140826115733/https://www.tgdaily.com/enterprise/126591-graphics-chips-market-is-showing-some-life|archive-date=August 26, 2014}} In the third quarter of 2022, shipments of PC GPUs totaled around 75.5 million units, down 19% year-over-year.{{Cite web |date=2022-11-20 |title=GPU Q3'22 biggest quarter-to-quarter drop since the 2009 recession |url=https://www.jonpeddie.com/news/q322-biggest-qtr-to-qtr-drop-since-the-2009-recession/ |access-date=2023-06-06 |website=Jon Peddie Research |language=en-US}}{{update inline|date=April 2023}}

==See also== {{Div col|colwidth=25em}}

- [[UALink]]

- [[Texture mapping unit]] (TMU)

- [[Render output unit]] (ROP)

- [[Brute force attack]]

- [[Computer hardware]]

- [[Computer monitor]]

- [[GPU cache]]

- [[GPU virtualization]]

- [[Manycore processor]]

- [[Physics processing unit]] (PPU)

- [[Tensor processing unit]] (TPU)

- [[Ray-tracing hardware]]

- [[Single instruction, multiple threads]] (SIMT)

- [[Software rendering]]

- [[Vision processing unit]] (VPU)

- [[Vector processor]]

- [[Video card]]

- [[Video display controller]]

- [[Video game console]]

- [[AI accelerator]]

- [[Vector processor#GPU vector processing features|GPU Vector Processor internal features]] {{div col end}}

===Hardware===

- [[List of AMD graphics processing units]]

- [[List of Nvidia graphics processing units]]

- [[List of Intel graphics processing units]]

- [[List of discrete and integrated graphics processing units]]

- [[Intel GMA]]

- [[Larrabee (microarchitecture)|Larrabee]]

- [[Nvidia PureVideo]] – [[Nvidia]]'s bit-stream technology for hardware-accelerated video decoding on its GPUs with DXVA

- [[System on a chip|SoC]]

- [[Unified Video Decoder|UVD (Unified Video Decoder)]] – ATI's bit-stream technology for hardware-accelerated video decoding on its GPUs with DXVA

===APIs=== {{Div col|colwidth=30em}}

- [[OpenGL|OpenGL API]]

- [[OpenCL|OpenCL API]]

- [[OpenVX|OpenVX API]]

- [[TensorFlow Lite]]

- [[Mantle (API)]]

- [[Metal (API)]]
  - [[Core ML]]

- [[Vulkan (API)]]

- [[Direct3D]]
  - [[DirectX Video Acceleration|DirectX Video Acceleration (DxVA) API]] for the [[Microsoft Windows]] operating system
  - [[DirectML]]

- [[Direct2D]]
  - [[DirectDraw]]
  - [[DirectWrite]]

- [[Video Acceleration API|Video Acceleration API (VA API)]]

- [[VDPAU|VDPAU (Video Decode and Presentation API for Unix)]]

- [[X-Video Bitstream Acceleration|X-Video Bitstream Acceleration (XvBA)]], the X11 equivalent of DXVA for MPEG-2, H.264, and VC-1

- [[X-Video Motion Compensation]] – the X11 equivalent for the MPEG-2 video codec only {{div col end}}

===Applications===

- [[GPU cluster]]

- [[Mathematica]] – includes built-in support for CUDA and OpenCL GPU execution

- [[Molecular modeling on GPU]]

- [[Deeplearning4j]] – open-source, distributed deep learning for Java

==References== {{Reflist|30em}}

==Sources==

- {{cite book |author=Peddie |date=1 January 2023 |title=The History of the GPU – New Developments |publisher=Springer Nature |isbn=978-3-03-114047-1 |oclc=1356877844 |first=Jon |url=https://books.google.com/books?id=vfKkEAAAQBAJ}}

==External links== {{Commons category|Graphics processing units}}

{{Div col|colwidth=25em}}

- [https://www.nvidia.com/object/what-is-gpu-computing.html NVIDIA – What is GPU computing?]

- The [https://web.archive.org/web/20130605124431/https://developer.nvidia.com/content/gpu-gems ''GPU Gems'' book series]

- [https://titancity.com/articles/gfxcards.html A Graphics Hardware History] {{Webarchive|url=https://web.archive.org/web/20220331004621/http://titancity.com/articles/gfxcards.html |date=2022-03-31 }}{{Dead link|date=September 2025|bot=|fix-attempted=}}

- [https://web.archive.org/web/20161227132101/https://www.cs.virginia.edu/~gfx/papers/paper.php?paper_id=59 How GPUs work]

- [https://www.ozone3d.net/gpu_caps_viewer/ GPU Caps Viewer – Video card information utility]

- [https://web.archive.org/web/20130114054853/https://malideveloper.arm.com/learn-about-mali/about-mali/arm-mali-gpus/ ARM Mali GPUs Overview] {{div col end}}

{{Authority control}} {{Graphics Processing Unit}} {{Hardware acceleration}}

{{DEFAULTSORT:Graphics Processing Unit}} [[Category:Graphics processing units| ]] [[Category:GPGPU libraries]] [[Category:Graphics hardware]] [[Category:Virtual reality]] [[Category:OpenCL compute devices]] [[Category:Artificial intelligence]] [[Category:Application-specific integrated circuits]] [[Category:Hardware acceleration]] [[Category:Digital electronics]] [[Category:Electronic design]] [[Category:Electronic design automation]]

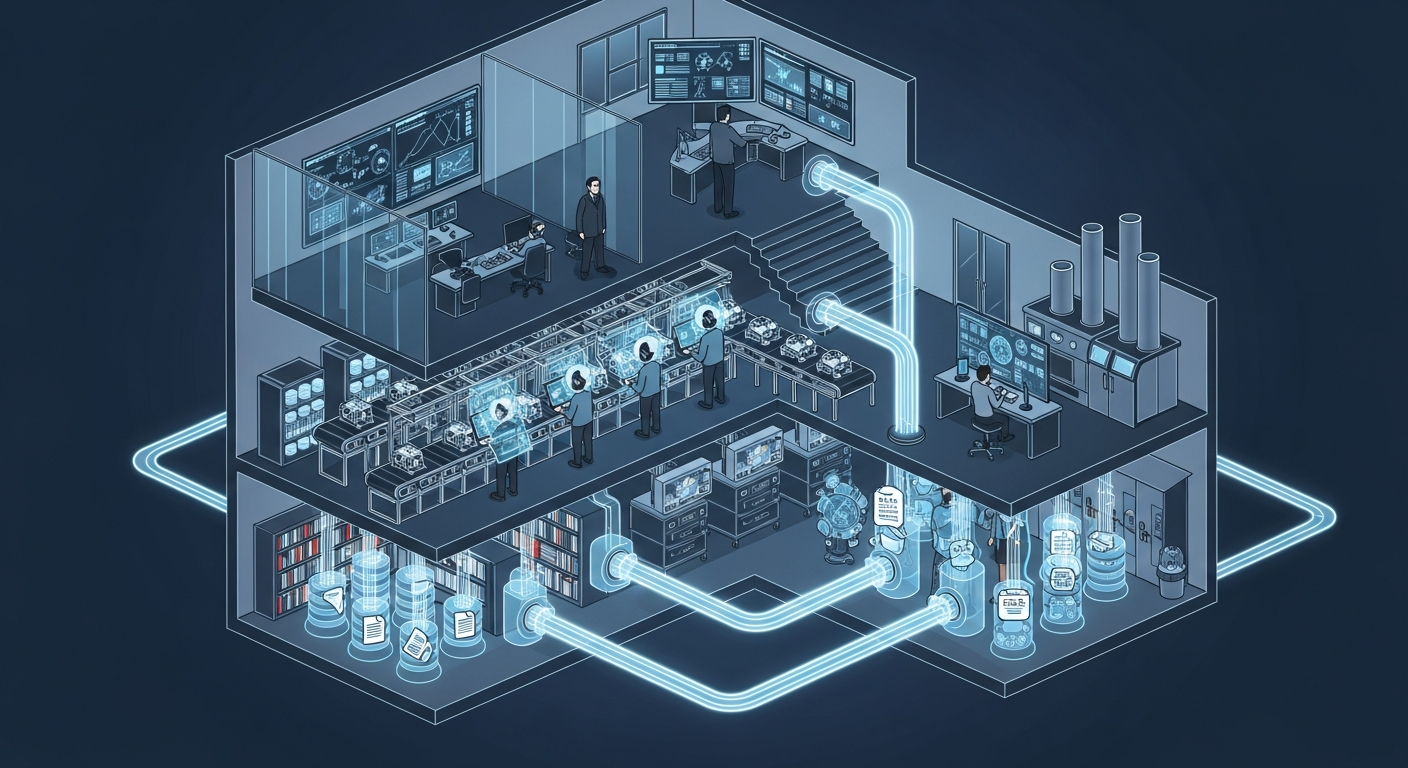

From MOAI Insights

공장의 뇌는 어떻게 생겼는가 — 제조운영 AI 아키텍처 해부

지식관리, 업무자동화, 의사결정지원 — 따로 보면 다 있던 것들입니다. 제조 AI의 진짜 차이는 이 셋이 순환하면서 '우리 공장만의 지능'을 만든다는 데 있습니다.

그 30분을 18년 동안 매일 반복했습니다 — 품질팀장이 본 AI Agent

18년차 품질팀장이 매일 아침 30분씩 반복하던 데이터 분석을 AI Agent가 3분 만에 해냈습니다. 챗봇과는 완전히 다른 물건 — 직접 시스템에 접근해서 데이터를 꺼내고 분석하는 AI의 현장 도입기.

ERP 20년, 나는 왜 AI를 얹기로 했나

ERP 20년차 제조IT본부장의 고백: 3,200만 행의 데이터가 잠들어 있었다. ERP를 바꾸지 않고 AI를 얹자, 일주일 걸리던 불량 분석이 수 초로 줄었다.

Want to apply this in your factory?

MOAI helps manufacturing companies adopt AI tailored to their operations.

Talk to us →