Loss function

{{Short description|Mathematical relation assigning a probability event to a cost}} In [[mathematical optimization]] and [[decision theory]], a '''loss function''' or '''cost function''' (sometimes also called an error function){{cite book|first1=Trevor |last1=Hastie |authorlink1= |first2=Robert |last2=Tibshirani |authorlink2=Robert Tibshirani|first3=Jerome H. |last3=Friedman |authorlink3=Jerome H. Friedman |title=The Elements of Statistical Learning |publisher=Springer |year=2001 |isbn=0-387-95284-5 |page=18 |url=https://web.stanford.edu/~hastie/ElemStatLearn/}} is a function that maps an [[event (probability theory)|event]] or values of one or more variables onto a [[real number]] intuitively representing some "cost" associated with the event. An [[optimization problem]] seeks to minimize a loss function. An '''objective function''' is either a loss function or its opposite (in specific domains, variously called a [[reward function]], a [[profit function]], a [[utility function]], a [[fitness function]], etc.), in which case it is to be maximized. The loss function could include terms from several levels of the hierarchy.

In statistics, typically a loss function is used for [[parameter estimation]], and the event in question is some function of the difference between estimated and true values for an instance of data. The concept, as old as [[Pierre-Simon Laplace|Laplace]], was reintroduced in statistics by [[Abraham Wald]] in the middle of the 20th century.{{cite book |first=A. |last=Wald |title=Statistical Decision Functions |via=APA Psycnet |publisher=Wiley |year=1950 |url=https://psycnet.apa.org/record/1951-01400-000}} In the context of [[economics]], for example, this is usually [[economic cost]] or [[Regret (decision theory)|regret]]. In [[Statistical classification|classification]], it is the penalty for an incorrect classification of an example. In [[actuarial science]], it is used in an insurance context to model benefits paid over premiums, particularly since the works of [[Harald Cramér]] in the 1920s.{{cite book |last=Cramér |first=H. |year=1930 |title=On the mathematical theory of risk |publisher=Centraltryckeriet }} In [[optimal control]], the loss is the penalty for failing to achieve a desired value. In [[financial risk management]], the function is mapped to a monetary loss. [[File:Comparison of loss functions.png|thumb|Comparison of common loss functions ([[Mean absolute error|MAE]], SMAE, [[Huber loss]], and log-cosh loss) used for regression]]

==Examples==

===Regret===
{{main|Regret (decision theory)}}
[[Leonard J. Savage]] argued that when using non-Bayesian methods such as [[minimax]], the loss function should be based on the idea of ''[[regret (decision theory)|regret]]'', i.e., the loss associated with a decision should be the difference between the consequences of the best decision that could have been made had the underlying circumstances been known and the decision that was in fact taken before they were known.
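The definition of regret can be illustrated with a small numerical sketch. The loss table, the decision names and the helper functions below are hypothetical and chosen only for illustration; the regret of a decision in a given state is its loss minus the smallest loss achievable in that state.

```python
# Hypothetical loss table: outer keys are decisions, inner keys are states of nature.
losses = {
    "carry umbrella": {"rain": 1.0, "sun": 2.0},
    "no umbrella": {"rain": 10.0, "sun": 0.0},
}


def regret_table(losses):
    """Regret = loss of a decision minus the best achievable loss in that state."""
    states = list(next(iter(losses.values())).keys())
    best = {s: min(row[s] for row in losses.values()) for s in states}
    return {d: {s: row[s] - best[s] for s in states} for d, row in losses.items()}


def minimax_regret(losses):
    """Pick the decision whose worst-case regret is smallest."""
    regrets = regret_table(losses)
    return min(regrets, key=lambda d: max(regrets[d].values()))


regrets = regret_table(losses)
choice = minimax_regret(losses)
```

In this made-up table, leaving the umbrella at home has a regret of 9 if it rains, while carrying it has a regret of at most 2, so the minimax-regret choice is to carry it.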

===Quadratic loss function===

The use of a [[quadratic function|quadratic]] loss function is common, for example when using [[least squares]] techniques. It is often more mathematically tractable than other loss functions because of the properties of [[variance]]s, as well as being symmetric: an error above the target causes the same loss as the same magnitude of error below the target. If the target is ''t'', then a quadratic loss function is
:<math>\lambda(x) = C (t-x)^2</math>
for some constant ''C''; the value of the constant makes no difference to a decision, and can be ignored by setting it equal to 1. This is also known as the '''squared error loss''' ('''SEL''').

Many common [[statistic]]s, including [[t-test]]s, [[Regression analysis|regression]] models, [[design of experiments]], and much else, use [[least squares]] methods applied using [[linear regression]] theory, which is based on the quadratic loss function.

The quadratic loss function is also used in [[Linear-quadratic regulator|linear-quadratic optimal control problems]]. In these problems, even in the absence of uncertainty, it may not be possible to achieve the desired values of all target variables. Often loss is expressed as a [[quadratic form]] in the deviations of the variables of interest from their desired values; this approach is [[closed-form expression|tractable]] because it results in linear [[first-order condition]]s. In the context of [[stochastic control]], the expected value of the quadratic form is used. Because it squares each deviation, the quadratic loss weights large errors, and hence outliers, far more heavily than typical data points, so alternatives like the [[Huber loss|Huber]], log-cosh and SMAE{{explain|reason=What is the SMAE loss?|date=February 2026}} losses are used when the data has many large outliers. [[File:Fitting a straight line to a data with outliers.png|thumb|Effect of using different loss functions, when the data has outliers]]
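The difference in how outliers are weighted can be sketched numerically. The following is a minimal illustration, assuming the usual definition of the Huber loss with a threshold <code>delta</code> (set to 1 here as an arbitrary choice); the residual values are made up.

```python
def squared_loss(residual, c=1.0):
    """Quadratic loss C * r^2; the constant C defaults to 1."""
    return c * residual ** 2


def huber_loss(residual, delta=1.0):
    """Quadratic for |r| <= delta, linear beyond it (delta is an assumed threshold)."""
    a = abs(residual)
    if a <= delta:
        return 0.5 * residual ** 2
    return delta * (a - 0.5 * delta)


# Made-up residuals: three small errors and one large outlier.
residuals = [0.1, -0.2, 0.3, 8.0]
squared_terms = [squared_loss(r) for r in residuals]
huber_terms = [huber_loss(r) for r in residuals]
```

The outlier contributes 64 to the sum of squared losses but only 7.5 to the sum of Huber losses, because the Huber loss grows linearly rather than quadratically for large residuals.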

===0-1 loss function===

In [[statistics]] and [[decision theory]], a frequently used loss function is the ''0-1 loss function''

: <math>L(\hat{y}, y) = \begin{cases} 0 & \text{if } y = \hat{y} \\ 1 & \text{if } y \neq \hat{y} \end{cases}</math>

In [[information theory]], this loss function is known as [[Rate–distortion_theory#Hamming_distortion|Hamming distortion]].
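As a minimal sketch, the 0-1 loss and its average over a set of predictions (the misclassification rate) can be written as follows; the function names and the label data are illustrative assumptions.

```python
def zero_one_loss(y_hat, y):
    """Return 0 when the prediction matches the target, 1 otherwise."""
    return 0 if y_hat == y else 1


def mean_zero_one_loss(predictions, targets):
    """Average 0-1 loss, i.e. the fraction of incorrect predictions."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    total = sum(zero_one_loss(p, t) for p, t in zip(predictions, targets))
    return total / len(targets)


# Made-up labels: one of four predictions is wrong.
error_rate = mean_zero_one_loss(["a", "b", "a", "c"], ["a", "b", "c", "c"])
```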

==Constructing loss and objective functions==
{{See also|Scoring rule}}
In many applications, objective functions, including loss functions as a particular case, are determined by the problem formulation. In other situations, the decision maker’s preference must be elicited and represented by a scalar-valued function (also called a [[utility]] function) in a form suitable for optimization, a problem that [[Ragnar Frisch]] highlighted in his [[Nobel Prize]] lecture.{{cite book| first=Ragnar|last=Frisch|date=1969 |title= The Nobel Prize–Prize Lecture|chapter=From utopian theory to practical applications: the case of econometrics|url=https://www.nobelprize.org/prizes/economic-sciences/1969/frisch/lecture/|access-date=15 February 2021}} The existing methods for constructing objective functions are collected in the proceedings of two dedicated conferences.{{Cite book |last1=Tangian |first1=Andranik |last2=Gruber |first2=Josef |date=1997 |title= Constructing Scalar-Valued Objective Functions. Proceedings of the Third International Conference on Econometric Decision Models: Constructing Scalar-Valued Objective Functions, University of Hagen, held in Katholische Akademie Schwerte September 5–8, 1995|series= Lecture Notes in Economics and Mathematical Systems |volume=453|isbn= 978-3-540-63061-6 |doi= 10.1007/978-3-642-48773-6 |publisher=Springer |location=Berlin }}{{Cite book |last1=Tangian |first1=Andranik |last2=Gruber |first2=Josef |date=2002 |title= Constructing and Applying Objective Functions. Proceedings of the Fourth International Conference on Econometric Decision Models Constructing and Applying Objective Functions, University of Hagen, held in Haus Nordhelle, August, 28–31, 2000 |series= Lecture Notes in Economics and Mathematical Systems |volume=510 |publisher=Springer |location=Berlin|isbn= 978-3-540-42669-1 |doi= 10.1007/978-3-642-56038-5 }} In particular, [[Andranik Tangian]] showed that the most usable objective functions, quadratic and additive, are determined by a few [[Principle of indifference|indifference]] points. He used this property in the models for constructing these objective functions from either [[ordinal utility|ordinal]] or [[cardinal utility|cardinal]] data that were elicited through computer-assisted interviews with decision makers.{{Cite journal|last=Tangian |first=Andranik |year=2002|title= Constructing a quasi-concave quadratic objective function from interviewing a decision maker|journal= European Journal of Operational Research |volume=141 |issue=3 |pages=608–640 |doi=10.1016/S0377-2217(01)00185-0 |s2cid= 39623350 }}{{Cite journal|last=Tangian |first=Andranik |year=2004|title= A model for ordinally constructing additive objective functions|journal= European Journal of Operational Research |volume=159 |issue=2 |pages=476–512|doi = 10.1016/S0377-2217(03)00413-2 | s2cid= 31019036 }} Among other things, he constructed objective functions to optimally distribute budgets for 16 Westphalian universities{{Cite journal |last=Tangian |first=Andranik |year=2004 |title= Redistribution of university budgets with respect to the status quo |journal= European Journal of Operational Research |volume=157 |issue=2 |pages=409–428|doi = 10.1016/S0377-2217(03)00271-6 }} and the European subsidies for equalizing unemployment rates among 271 German regions.{{Cite journal|last=Tangian |first=Andranik |year=2008 |title= Multi-criteria optimization of regional employment policy: A simulation analysis for Germany |journal= Review of Urban and Regional Development |volume=20 |issue=2|pages=103–122 |url= https://onlinelibrary.wiley.com/doi/10.1111/j.1467-940X.2008.00144.x |doi = 10.1111/j.1467-940X.2008.00144.x |url-access=subscription }}

==Expected loss==
{{See also|Empirical risk minimization}}
In some contexts, the value of the loss function itself is a random quantity because it depends on the outcome of a random variable ''X''.

===Statistics===
Both [[Frequentist probability|frequentist]] and [[Bayesian probability|Bayesian]] statistical theory involve making a decision based on the [[expected value]] of the loss function; however, this quantity is defined differently under the two paradigms.

====Frequentist expected loss====
We first define the expected loss in the frequentist context. It is obtained by taking the expected value with respect to the [[probability distribution]], ''P''<sub>''θ''</sub>, of the observed data, ''X''. This is also referred to as the '''risk function'''{{SpringerEOM| title=Risk of a statistical procedure |id=R/r082490 |first=M.S. |last=Nikulin}} {{cite book |title=Statistical decision theory and Bayesian Analysis |first=James O. |last=Berger |author-link=James Berger (statistician) |year=1985 |edition=2nd |publisher=Springer-Verlag |location=New York |isbn=978-0-387-96098-2 |mr=0804611 |url=https://books.google.com/books?id=oY_x7dE15_AC |bibcode=1985sdtb.book.....B }}{{cite book |first=Morris |last=DeGroot |author-link=Morris H. DeGroot |title=Optimal Statistical Decisions |publisher=Wiley Classics Library |year=2004 |orig-year=1970 |isbn=978-0-471-68029-1 |mr=2288194 }}{{cite book |last=Robert |first=Christian P. |title=The Bayesian Choice |publisher=Springer |location=New York |year=2007|edition=2nd |doi=10.1007/0-387-71599-1 |isbn=978-0-387-95231-4 |mr=1835885 |series=Springer Texts in Statistics }} of the decision rule ''δ'' and the parameter ''θ''. Here the decision rule depends on the outcome of ''X''. The risk function is given by:

: <math>R(\theta, \delta) = \operatorname{E}_\theta L\big( \theta, \delta(X) \big) = \int_X L\big( \theta, \delta(x) \big) \, \mathrm{d} P_\theta (x) .</math>

Here, ''θ'' is a fixed but possibly unknown state of nature, ''X'' is a vector of observations stochastically drawn from a [[Statistical population|population]], <math>\operatorname{E}_\theta</math> is the expectation over all population values of ''X'', ''dP''<sub>''θ''</sub> is a [[probability measure]] over the event space of ''X'' (parametrized by ''θ''), and the integral is evaluated over the entire [[Support (measure theory)|support]] of ''X''.
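The risk function can be approximated by simulation: draw many data sets from ''P''<sub>''θ''</sub>, apply the decision rule to each, and average the resulting losses. The sketch below is only an illustration under assumed conditions (normally distributed observations with known standard deviation, squared error loss, and the sample mean and sample median as decision rules); the function names and parameter values are hypothetical.

```python
import random
import statistics


def squared_error(theta, estimate):
    """Squared error loss L(theta, estimate)."""
    return (theta - estimate) ** 2


def monte_carlo_risk(theta, decision_rule, loss,
                     n_obs=10, n_draws=20000, sigma=1.0, seed=0):
    """Approximate R(theta, delta) by averaging the loss over simulated samples.

    Assumes each sample consists of n_obs independent Normal(theta, sigma) draws.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_draws):
        x = [rng.gauss(theta, sigma) for _ in range(n_obs)]
        total += loss(theta, decision_rule(x))
    return total / n_draws


# Both rules are evaluated on the same simulated samples (same seed).
risk_mean = monte_carlo_risk(2.0, statistics.fmean, squared_error)
risk_median = monte_carlo_risk(2.0, statistics.median, squared_error)
```

Under these assumptions the exact risk of the sample mean is ''σ''<sup>2</sup>/''n'' = 0.1, which the simulated value should approximate, and the sample median has a larger risk.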

====Bayes Risk====
In a Bayesian approach, the expectation is calculated using the [[prior distribution]] {{pi}}<sup>*</sup> of the parameter ''θ'':

:<math>\rho(\pi^*,a) = \int_\Theta \int_{\mathbf X} L(\theta, a(\mathbf x)) \, \mathrm{d} P(\mathbf x \vert \theta) \,\mathrm{d} \pi^* (\theta)= \int_{\mathbf X} \int_\Theta L(\theta,a(\mathbf x))\,\mathrm{d} \pi^*(\theta\vert \mathbf x)\,\mathrm{d}M(\mathbf x)</math>

where ''M''('''x''') is the marginal distribution of the data, whose density ''m''('''x''') is known as the ''predictive likelihood'' and in which ''θ'' has been "integrated out", {{pi}}<sup>*</sup>(''θ'' | '''x''') is the posterior distribution, and the order of integration has been changed. One then should choose the action ''a'' which minimises this expected loss, which is referred to as ''Bayes Risk''.

In the latter equation, the inner integral (over ''θ'', for fixed '''x''') is known as the ''Posterior Risk'', and minimising it with respect to the decision ''a'' for each '''x''' also minimises the overall Bayes Risk. This optimal decision, ''a*'', is known as the ''Bayes (decision) Rule'': it minimises the average loss over all possible states of nature ''θ'' and over all possible (probability-weighted) data outcomes. One advantage of the Bayesian approach is that one need only choose the optimal action under the actual observed data to obtain a uniformly optimal one, whereas choosing the frequentist optimal decision rule as a function of all possible observations is a much more difficult problem. Of equal importance, though, the Bayes Rule reflects consideration of loss outcomes under different states of nature ''θ''.
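The minimisation of posterior risk for the observed data can be sketched with a discrete parameter. The setup below is hypothetical: a coin whose probability of heads ''θ'' takes one of three values with a uniform prior, and a binomial likelihood. All names are illustrative assumptions.

```python
from math import comb

# Hypothetical setup: theta (probability of heads) is one of three values.
THETAS = [0.2, 0.5, 0.8]
PRIOR = [1 / 3, 1 / 3, 1 / 3]


def posterior(heads, tosses):
    """Posterior over THETAS after observing `heads` in `tosses` (binomial model)."""
    joint = [
        p * comb(tosses, heads) * t ** heads * (1 - t) ** (tosses - heads)
        for t, p in zip(THETAS, PRIOR)
    ]
    marginal = sum(joint)  # theta "integrated out"
    return [j / marginal for j in joint]


def posterior_risk(action, post, loss):
    """Expected loss of an action under the posterior distribution."""
    return sum(p * loss(t, action) for t, p in zip(THETAS, post))


def bayes_action(post, loss, actions):
    """Action minimising posterior risk for the observed data."""
    return min(actions, key=lambda a: posterior_risk(a, post, loss))


post = posterior(heads=7, tosses=10)
grid = [i / 100 for i in range(101)]
best_squared = bayes_action(post, lambda t, a: (t - a) ** 2, grid)
best_zero_one = bayes_action(post, lambda t, a: 0 if t == a else 1, THETAS)
posterior_mean = sum(t * p for t, p in zip(THETAS, post))
```

In this sketch the action chosen under squared error loss is the grid point closest to the posterior mean, while under 0-1 loss it is the most probable parameter value. Only the observed data enter the calculation; no rule for unobserved data sets has to be worked out.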

====Examples in statistics====

* For a scalar parameter ''θ'', a decision function whose output <math>\hat\theta</math> is an estimate of ''θ'', and a quadratic loss function ([[squared error loss]]) <math>L(\theta,\hat\theta)=(\theta-\hat\theta)^2</math>, the risk function becomes the [[mean squared error]] of the estimate, <math>R(\theta,\hat\theta)= \operatorname{E}_\theta \left [ (\theta-\hat\theta)^2 \right ]</math>. An [[estimator]] found by minimizing the [[mean squared error]] estimates the [[posterior distribution]]'s mean.

* In [[density estimation]], the unknown parameter is the [[probability density function|probability density]] itself. The loss function is typically chosen to be a [[Norm (mathematics)|norm]] in an appropriate [[function space]]. For example, for the [[L2 norm|''L''<sup>2</sup> norm]], <math>L(f,\hat f) = \|f-\hat f\|_2^2</math>, the risk function becomes the [[mean integrated squared error]] <math>R(f,\hat f)=\operatorname{E} \left ( \|f-\hat f\|_2^2 \right )</math>.

===Economic choice under uncertainty===

In economics, decision-making under uncertainty is often modelled using the [[von Neumann–Morgenstern utility function]] of the uncertain variable of interest, such as end-of-period wealth. Since the value of this variable is uncertain, so is the value of the utility function; it is the expected value of utility that is maximized.
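A minimal sketch of expected-utility maximization follows. The two lotteries and the choice of a logarithmic utility function are assumptions made purely for illustration.

```python
import math


def expected_utility(lottery, utility):
    """Expected utility of a lottery given as (wealth, probability) pairs."""
    return sum(p * utility(w) for w, p in lottery)


# Hypothetical end-of-period wealth lotteries.
safe = [(100.0, 1.0)]
risky = [(50.0, 0.5), (160.0, 0.5)]

# Linear utility: the agent compares expected wealth only.
linear_choice = max(("safe", safe), ("risky", risky),
                    key=lambda item: expected_utility(item[1], lambda w: w))[0]

# Logarithmic utility (an assumed concave utility function).
log_choice = max(("safe", safe), ("risky", risky),
                 key=lambda item: expected_utility(item[1], math.log))[0]
```

The risky lottery has the higher expected wealth (105 against 100), so it is preferred under linear utility, whereas the concave logarithmic utility ranks the safe lottery higher.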

==Decision rules==
A [[decision rule]] makes a choice using an optimality criterion. Some commonly used criteria are:

*'''[[Minimax]]''': Choose the decision rule with the lowest worst loss, that is, minimize the worst-case (maximum possible) loss: <math>\underset{\delta} {\operatorname{arg\,min}} \ \max_{\theta \in \Theta} \ R(\theta,\delta).</math>
*'''[[Invariant estimator|Invariance]]''': Choose the decision rule which satisfies an invariance requirement.
*Choose the decision rule with the lowest average loss (i.e. minimize the [[expected value]] of the loss function): <math>\underset{\delta} {\operatorname{arg\,min}} \operatorname{E}_{\theta \in \Theta} [R(\theta,\delta)] = \underset{\delta} {\operatorname{arg\,min}} \ \int_{\theta \in \Theta} R(\theta,\delta) \, p(\theta) \,d\theta.</math>
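The minimax and average-loss criteria can lead to different choices. The sketch below uses a made-up risk table over two states of nature and an assumed prior; the rule names, risk values and prior weights are hypothetical.

```python
# Hypothetical risk table R(theta, delta): outer keys are decision rules.
risk = {
    "rule A": {"theta1": 1.0, "theta2": 6.0},
    "rule B": {"theta1": 4.0, "theta2": 4.0},
}

# Assumed prior p(theta) used for the average-loss criterion.
prior = {"theta1": 0.9, "theta2": 0.1}


def minimax_rule(risk):
    """Rule with the smallest worst-case risk over all states."""
    return min(risk, key=lambda d: max(risk[d].values()))


def average_risk(rule_risks, prior):
    """Prior-weighted average of the risk of one rule."""
    return sum(prior[t] * r for t, r in rule_risks.items())


def lowest_average_rule(risk, prior):
    """Rule with the smallest prior-weighted average risk."""
    return min(risk, key=lambda d: average_risk(risk[d], prior))


minimax_choice = minimax_rule(risk)
average_choice = lowest_average_rule(risk, prior)
```

Here rule B has the smaller worst-case risk (4 against 6), while rule A has the smaller average risk under the assumed prior (1.5 against 4).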

==Selecting a loss function==
Sound statistical practice requires selecting an estimator consistent with the actual acceptable variation experienced in the context of a particular applied problem. Thus, in the applied use of loss functions, selecting which statistical method to use to model an applied problem depends on knowing the losses that will be experienced from being wrong under the problem's particular circumstances.{{cite book |last=Pfanzagl |first=J. |year=1994 |title=Parametric Statistical Theory |location=Berlin |publisher=Walter de Gruyter |isbn=978-3-11-013863-4 }}

A common example involves estimating "[[location parameter|location]]". Under typical statistical assumptions, the [[mean]] or average is the statistic for estimating location that minimizes the expected loss experienced under the [[least squares|squared-error]] loss function, while the [[median]] is the estimator that minimizes expected loss experienced under the absolute-difference loss function. Still different estimators would be optimal under other, less common circumstances.
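The sample analogue of this statement can be checked numerically: over a grid of candidate location values, the total squared loss is smallest at the sample mean and the total absolute loss is smallest at the sample median. The data set and grid below are made up for illustration.

```python
import statistics

# Made-up sample with one large value.
data = [1.0, 2.0, 3.0, 4.0, 100.0]


def total_loss(center, data, loss):
    """Sum of losses of the deviations of the data from a candidate location."""
    return sum(loss(x - center) for x in data)


# Candidate locations 0.0, 0.1, ..., 100.0.
grid = [i / 10 for i in range(0, 1001)]

best_squared = min(grid, key=lambda c: total_loss(c, data, lambda a: a * a))
best_absolute = min(grid, key=lambda c: total_loss(c, data, abs))
```

For this sample the squared loss is minimised at the mean, 22, which is pulled towards the large value, whereas the absolute loss is minimised at the median, 3.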

In economics, when an agent is [[risk neutral]], the objective function is simply expressed as the expected value of a monetary quantity, such as profit, income, or end-of-period wealth. For [[Risk aversion|risk-averse]] or [[risk-loving]] agents, loss is measured as the negative of a [[utility|utility function]], and the objective function to be optimized is the expected value of utility.

Other measures of cost are possible, for example [[Mortality rate|mortality]] or [[morbidity]] in the field of [[public health]] or [[safety engineering]].

For most [[optimization algorithm]]s, it is desirable to have a loss function that is globally [[Continuous function|continuous]] and [[Differentiable function|differentiable]].

Two very commonly used loss functions are the [[mean squared error|squared loss]], <math>L(a) = a^2</math>, and the [[absolute deviation|absolute loss]], <math>L(a)=|a|</math>. However, the absolute loss has the disadvantage that it is not differentiable at <math>a=0</math>. The squared loss has the disadvantage that it tends to be dominated by [[outlier]]s: when summing over a set of ''a''-values (as in <math>\sum_{i=1}^n L(a_i)</math>), the final sum tends to be the result of a few particularly large ''a''-values, rather than an expression of the average ''a''-value.

The choice of a loss function is not arbitrary. It is very restrictive and sometimes the loss function may be characterized by its desirable properties. Detailed information on mathematical principles of the loss function choice is given in Chapter 2 of the book {{cite book|title=Robust and Non-Robust Models in Statistics|first1=B.|last1=Klebanov|first2=Svetlozar T.|last2=Rachev|first3=Frank J.|last3=Fabozzi|publisher=Nova Scientific Publishers, Inc.|location=New York|year=2009}} (and references there). Among the choice principles are, for example, the requirement of completeness of the class of symmetric statistics in the case of [[i.i.d.]] observations, the principle of complete information, and some others.

[[W. Edwards Deming]] and [[Nassim Nicholas Taleb]] argue that empirical reality, not nice mathematical properties, should be the sole basis for selecting loss functions, and real losses often are not mathematically nice and are not differentiable, continuous, symmetric, etc. For example, a person who arrives before a plane gate closure can still make the plane, but a person who arrives after cannot, a discontinuity and asymmetry which makes arriving slightly late much more costly than arriving slightly early. In drug dosing, the cost of too little drug may be lack of efficacy, while the cost of too much may be tolerable toxicity, another example of asymmetry. Traffic, pipes, beams, ecologies, climates, etc. may tolerate increased load or stress with little noticeable change up to a point, then become backed up or break catastrophically. These situations, Deming and Taleb argue, are common in real-life problems, perhaps more common than classical smooth, continuous, symmetric, differentiable cases.{{Cite book|title=Out of the Crisis|last=Deming|first=W. Edwards|publisher=The MIT Press|year=2000|isbn=9780262541152}}
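The gate-closure example can be written as a discontinuous, asymmetric loss. The sketch below is purely illustrative: the waiting cost per minute, the cost of a missed flight and the set of equally likely travel delays are all assumed values.

```python
def gate_loss(minutes_after_closure, wait_cost_per_min=1.0, missed_flight_cost=500.0):
    """Loss as a function of arrival time relative to gate closure (0 = closure).

    Arriving early costs waiting time; arriving late costs the whole flight.
    Both cost parameters are hypothetical.
    """
    if minutes_after_closure > 0:
        return missed_flight_cost
    return wait_cost_per_min * (-minutes_after_closure)


# Assumed equally likely deviations (in minutes) from the planned arrival time.
delays = [-10, -5, 0, 5, 10]


def expected_loss(planned_arrival):
    """Average loss over the assumed delays for a planned arrival time."""
    return sum(gate_loss(planned_arrival + d) for d in delays) / len(delays)


# Candidate plans: from 60 minutes before closure up to closure itself.
best_plan = min(range(-60, 1), key=expected_loss)
```

Although the delays average to zero, the plan with the lowest expected loss in this sketch is to aim for 10 minutes before closure, so that even the largest assumed delay does not cause a missed flight.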

==See also==
*[[Bayesian regret]]
*[[Loss functions for classification]]
*[[Discounted maximum loss]]
*[[Hinge loss]]
*[[Scoring rule]]
*[[Statistical risk]]

==References==
{{reflist}}

==Further reading==
*{{cite journal |author2=Bartram, Söhnke M. |author3=Pope, Peter F. |date=April–June 2011 |title=Asymmetric Loss Functions and the Rationality of Expected Stock Returns |journal=International Journal of Forecasting |volume=27 |issue=2 |pages=413–437 |doi= 10.1016/j.ijforecast.2009.10.008|ssrn=889323 |last1= Aretz |first1=Kevin |url=https://mpra.ub.uni-muenchen.de/47343/1/MPRA_paper_47343.pdf }}

*{{cite book |title=Statistical decision theory and Bayesian Analysis |first=James O. |last=Berger |author-link=James Berger (statistician) |year=1985 |edition=2nd |publisher=Springer-Verlag |location=New York |isbn=978-0-387-96098-2 |mr=0804611 |bibcode=1985sdtb.book.....B }}

*{{cite journal|url=https://www.researchgate.net/publication/5216117|doi=10.1093/oxrep/16.4.43|title=Making monetary policy: Objectives and rules|journal=Oxford Review of Economic Policy|volume=16|issue=4|pages=43–59|year=2000|last1=Cecchetti|first1=S.}}

*{{cite journal|doi=10.1016/0164-0704(87)90016-4|title=Loss functions and public policy|journal=Journal of Macroeconomics|volume=9|issue=4|pages=489–504|year=1987|last1=Horowitz|first1=Ann R.}}

*{{cite journal|jstor=1911380|title=Asymmetric Policymaker Utility Functions and Optimal Policy under Uncertainty|journal=Econometrica|volume=44|issue=1|pages=53–66|last1=Waud|first1=Roger N.|year=1976|doi=10.2307/1911380}}

{{Statistics|inference|collapsed}} {{Differentiable computing}}

{{DEFAULTSORT:Loss Function}} [[Category:Optimal decisions]] [[Category:Loss functions|*]]

From MOAI Insights

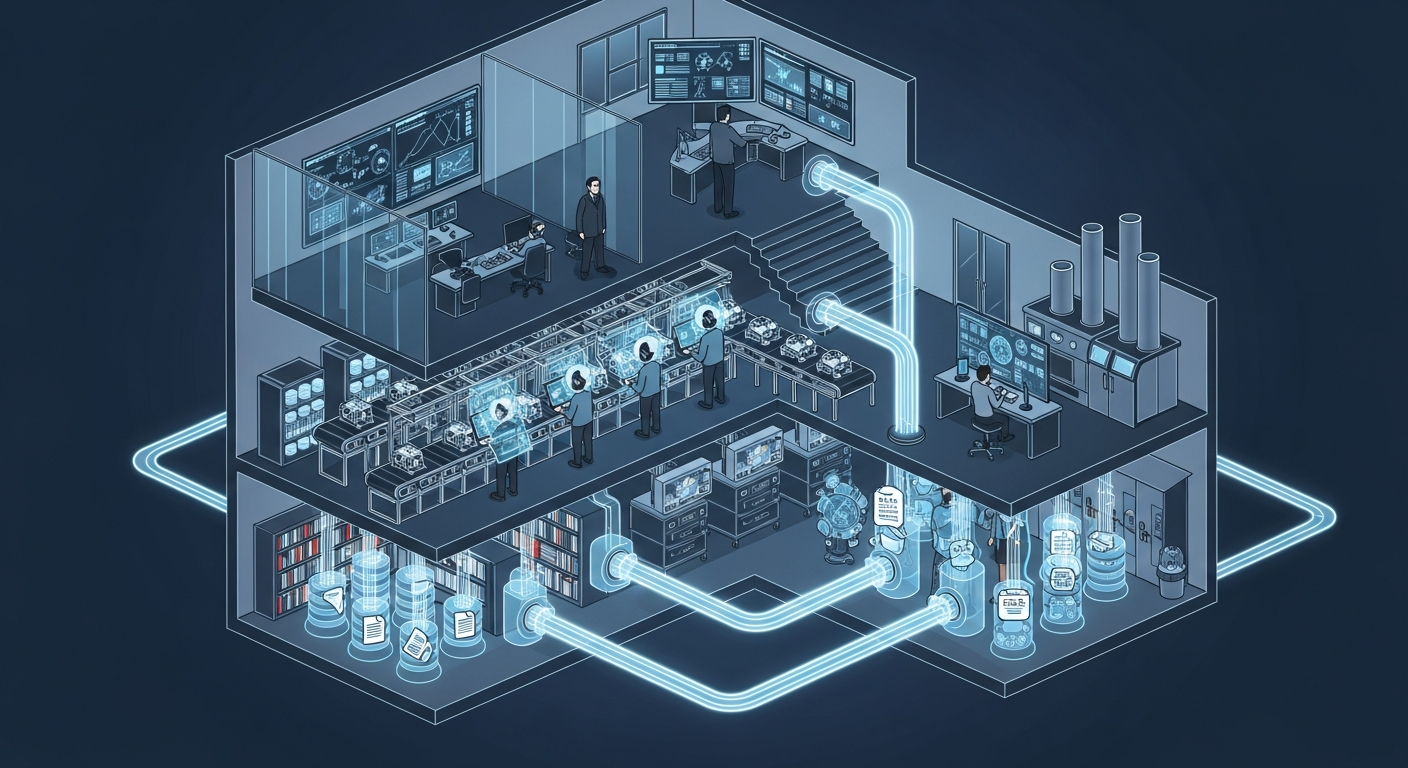

공장의 뇌는 어떻게 생겼는가 — 제조운영 AI 아키텍처 해부

지식관리, 업무자동화, 의사결정지원 — 따로 보면 다 있던 것들입니다. 제조 AI의 진짜 차이는 이 셋이 순환하면서 '우리 공장만의 지능'을 만든다는 데 있습니다.

그 30분을 18년 동안 매일 반복했습니다 — 품질팀장이 본 AI Agent

18년차 품질팀장이 매일 아침 30분씩 반복하던 데이터 분석을 AI Agent가 3분 만에 해냈습니다. 챗봇과는 완전히 다른 물건 — 직접 시스템에 접근해서 데이터를 꺼내고 분석하는 AI의 현장 도입기.

ERP 20년, 나는 왜 AI를 얹기로 했나

ERP 20년차 제조IT본부장의 고백: 3,200만 행의 데이터가 잠들어 있었다. ERP를 바꾸지 않고 AI를 얹자, 일주일 걸리던 불량 분석이 수 초로 줄었다.

Want to apply this in your factory?

MOAI helps manufacturing companies adopt AI tailored to their operations.

Talk to us →