Probabilistic reasoning

{{Short description|Applications of logic under uncertainty}}
'''Probabilistic logic''' (also '''probability logic''' and '''probabilistic reasoning''') involves the use of probability and logic to deal with uncertain situations. Probabilistic logic extends traditional logic [[truth table]]s with probabilistic expressions. A difficulty of probabilistic logics is their tendency to multiply the [[computational complexities]] of their probabilistic and logical components. Other difficulties include the possibility of counter-intuitive results, such as in the case of belief fusion in [[Dempster–Shafer theory]]. The trustworthiness of sources and epistemic uncertainty about the probabilities they provide, as defined in [[subjective logic]], are additional elements to consider. The need to deal with a broad variety of contexts and issues has led to many different proposals.
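The counter-intuitive behavior of belief fusion mentioned above is often illustrated with an example attributed to Zadeh, in which two sources that strongly disagree are fused with Dempster's rule of combination. The following is a minimal sketch of that rule; the function name `dempster_combine`, the hypothesis labels and the mass values are illustrative assumptions, not taken from any particular library or source.

```python
from itertools import product


def dempster_combine(m1, m2):
    """Fuse two mass functions with Dempster's rule of combination.

    Each mass function maps a frozenset of hypotheses to its mass.
    """
    fused = {}
    for (set1, mass1), (set2, mass2) in product(m1.items(), m2.items()):
        common = set1 & set2
        if common:
            fused[common] = fused.get(common, 0.0) + mass1 * mass2
        # Products that fall on the empty set are conflict and are discarded.
    agreement = sum(fused.values())  # equals 1 - K, where K is the conflict mass
    if agreement == 0.0:
        raise ValueError("sources are in total conflict; the rule is undefined")
    # Renormalize the non-conflicting mass so that it sums to 1.
    return {hyp: mass / agreement for hyp, mass in fused.items()}


# Hypothetical masses: each source is almost certain of a different
# hypothesis and gives the shared alternative "B" only 1%.
source_1 = {frozenset({"A"}): 0.99, frozenset({"B"}): 0.01}
source_2 = {frozenset({"C"}): 0.99, frozenset({"B"}): 0.01}

print(dempster_combine(source_1, source_2))
```

Under these assumed inputs the fused result places all of the mass on "B", the hypothesis both sources considered very unlikely, because every other product of masses is treated as conflict and normalized away.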

==Logical background==
There are numerous proposals for probabilistic logics. Very roughly, they can be categorized into two different classes: those logics that attempt to make a probabilistic extension to [[logical consequence|logical entailment]], such as [[Markov logic network]]s, and those that attempt to address the problems of uncertainty and lack of evidence (evidentiary logics).

That the concept of probability can have different meanings may be understood by noting that, despite the mathematization of probability in the [[Age of Enlightenment|Enlightenment]], mathematical [[probability theory]] remains, to this very day, entirely unused in criminal courtrooms, when evaluating the "probability" of the guilt of a suspected criminal (James Franklin, ''The Science of Conjecture: Evidence and Probability before Pascal'', 2001, The Johns Hopkins Press, {{isbn|0-8018-7109-3}}).

More precisely, in evidentiary logic, there is a need to distinguish the objective truth of a statement from our decision about the truth of that statement, which in turn must be distinguished from our confidence in its truth. Thus, a suspect's real guilt is not necessarily the same as the judge's decision on guilt, which in turn is not the same as assigning a numerical probability to the commission of the crime and deciding whether it is above a numerical threshold of guilt. The verdict on a single suspect may be guilty or not guilty with some uncertainty, just as the flipping of a coin may be predicted as heads or tails with some uncertainty. Given a large collection of suspects, a certain percentage may be guilty, just as the probability of flipping "heads" is one-half. However, it is incorrect to apply this law of averages to a single criminal (or a single coin flip): the criminal is no more "a little bit guilty" than a single coin flip is "a little bit heads and a little bit tails"; we are merely uncertain as to which it is. Expressing uncertainty as a numerical probability may be acceptable when making scientific measurements of physical quantities, but it is merely a mathematical model of the uncertainty we perceive in the context of "common sense" reasoning and logic. Just as in courtroom reasoning, the goal of employing [[uncertain inference]] is to gather evidence to strengthen confidence in a proposition, as opposed to performing some sort of probabilistic entailment.

==Historical context==
Attempts to quantify probabilistic reasoning date back to antiquity. Interest was particularly strong from the 12th century onward, with the work of the [[Scholastics]]: the invention of the [[half-proof]] (so that two half-proofs are sufficient to prove guilt), the elucidation of [[moral certainty]] (sufficient certainty to act upon, but short of absolute certainty), the development of [[Catholic probabilism]] (the idea that it is always safe to follow the established rules of doctrine or the opinion of experts, even when they are less probable), the [[case-based reasoning]] of [[casuistry]], and the scandal of [[Laxism]] (whereby probabilism was used to give support to almost any statement at all, it being possible to find an expert opinion in support of almost any proposition).

==Modern proposals==
Below is a list of proposals for probabilistic and evidentiary extensions to classical and [[predicate logic]].

- The term "''probabilistic logic''" was first used by [[John von Neumann]] in a series of [[Caltech]] lectures in 1952 and in the 1956 paper "Probabilistic logics and the synthesis of reliable organisms from unreliable components", and subsequently in a paper by [[Nils Nilsson (researcher)|Nils Nilsson]] published in 1986, in which the [[truth value]]s of sentences are [[probabilities]].{{cite journal | doi=10.1016/0004-3702(86)90031-7 | title=Probabilistic logic | date=1986 | last1=Nilsson | first1=Nils J. | journal=Artificial Intelligence | volume=28 | pages=71–87 }} The proposed semantic generalization induces a probabilistic logical [[entailment]], which reduces to ordinary logical entailment when the probabilities of all sentences are either 0 or 1. This generalization applies to any [[logical system]] for which the consistency of a finite set of sentences can be established.

- Gaifman{{Cite journal |last=Gaifman |first=Haim |date=1964 |title=Concerning measures in first order calculi |url=http://link.springer.com/10.1007/BF02759729 |journal=Israel Journal of Mathematics |language=en |volume=2 |issue=1 |pages=1–18 |doi=10.1007/BF02759729 |issn=0021-2172|url-access=subscription }} and Snir{{Cite journal |last1=Gaifman |first1=Haim |last2=Snir |first2=Marc |date=1982 |title=Probabilities over rich languages, testing and randomness |url=https://www.cambridge.org/core/product/identifier/S0022481200043942/type/journal_article |journal=Journal of Symbolic Logic |language=en |volume=47 |issue=3 |pages=495–548 |doi=10.2307/2273587 |jstor=2273587 |issn=0022-4812|url-access=subscription }} have developed a globally consistent and empirically satisfactory unification of classical probability theory and first-order logic that is suitable for inductive reasoning. Their theory assigns probabilities, or degrees of belief, to sentences in a way that is consistent with the knowledge base (probability 1 for facts and axioms), with the standard (Kolmogorov) [[probability axioms]], and with [[logical deduction]], and it allows ([[Bayesian inference|Bayesian]]) [[inductive reasoning]] and [[Language identification in the limit|learning in the limit]]. Most importantly, unlike most alternative proposals, it allows confirmation of [[universally quantified]] hypotheses. The theory has also been extended to higher-order logic.{{Cite journal |last1=Hutter |first1=Marcus |last2=Lloyd |first2=John W. |last3=Ng |first3=Kee Siong |last4=Uther |first4=William T.B. |date=2013 |title=Probabilities on Sentences in an Expressive Logic |url=https://linkinghub.elsevier.com/retrieve/pii/S157086831300013X |journal=Journal of Applied Logic |language=en |volume=11 |issue=4 |pages=386–420 |doi=10.1016/j.jal.2013.03.003|hdl=1885/14713 |hdl-access=free }} Both solutions are purely theoretical but have spawned practical approximations.{{cite arXiv | eprint=1609.03543 | last1=Garrabrant | first1=Scott | last2=Benson-Tilsen | first2=Tsvi | last3=Critch | first3=Andrew | last4=Soares | first4=Nate | last5=Taylor | first5=Jessica | title=Logical Induction | date=2016 | class=cs.AI }}

- The central concept in the theory of [[subjective logic]] (A. Jøsang. ''[https://books.google.com/books?id=nqRlDQAAQBAJ Subjective Logic: A formalism for reasoning under uncertainty]''. Springer Verlag, 2016) is that of ''opinions'' about some of the [[propositional variable]]s involved in the given logical sentences. A binomial opinion applies to a single proposition and is represented as a 3-dimensional extension of a single probability value to express probabilistic and epistemic uncertainty about the truth of the proposition. For the computation of derived opinions based on a structure of argument opinions, the theory proposes respective operators for various logical connectives, such as multiplication ([[Logical conjunction|AND]]), comultiplication ([[Logical disjunction|OR]]), division (UN-AND) and co-division (UN-OR) of opinions,{{cite journal | doi=10.1016/j.ijar.2004.03.003 | title=Multiplication and comultiplication of beliefs | date=2005 | last1=Jøsang | first1=Audun | last2=McAnally | first2=David | journal=International Journal of Approximate Reasoning | volume=38 | pages=19–51 }} conditional deduction ([[Modus ponens|MP]]) and abduction ([[Modus tollens|MT]]),{{cite journal |last1=Jøsang |first1=A. |title=Conditional Reasoning with Subjective Logic |url=http://eprints.qut.edu.au/14842/1/14842.pdf |journal=Journal of Multiple-Valued Logic and Soft Computing |date=2008 |volume=15 |issue=1 |pages=5–38}} as well as [[Bayes' theorem]].{{cite book |chapter-url=http://folk.uio.no/josang/papers/Josang2016-MFI.pdf |doi=10.1109/MFI.2016.7849531 |chapter=Generalising Bayes' theorem in subjective logic |title=2016 IEEE International Conference on Multisensor Fusion and Integration for Intelligent Systems (MFI) |date=2016 |last1=Josang |first1=Audun |pages=462–469 |isbn=978-1-4673-9708-7 }}

- The approximate reasoning formalism proposed by [[fuzzy logic]] can be used to obtain a logic in which the models are the probability distributions and the theories are the lower envelopes.{{cite journal | doi=10.1016/0004-3702(94)90102-3 | title=Inferences in probability logic | date=1994 | last1=Gerla | first1=Giangiacomo | journal=Artificial Intelligence | volume=70 | issue=1–2 | pages=33–52 }} In such a logic, the question of the consistency of the available information is closely related to the coherence of partial probabilistic assignments, and therefore to [[Dutch book]] phenomena.

- [[Markov logic network]]s implement a form of uncertain inference based on the [[maximum entropy principle]]—the idea that probabilities should be assigned in such a way as to maximize entropy, in analogy with the way that [[Markov chain]]s assign probabilities to [[finite-state machine]] transitions.

- Systems such as [[Ben Goertzel]]'s [[Probabilistic Logic Network]]s (PLN) attach an explicit confidence ranking, as well as a probability, to [[atomic formula|atoms]] and sentences. The rules of deduction and induction incorporate this uncertainty, thus side-stepping difficulties in purely Bayesian approaches to logic (including Markov logic), while also avoiding the paradoxes of [[Dempster–Shafer theory]]. The implementation of PLN attempts to use and generalize algorithms from [[logic programming]], subject to these extensions.

- In the field of [[probabilistic argumentation]], various formal frameworks have been put forward. The framework of "probabilistic labellings",{{cite journal | doi=10.1007/s10472-018-9574-1 | title=A labelling framework for probabilistic argumentation | date=2018 | last1=Riveret | first1=Régis | last2=Baroni | first2=Pietro | last3=Gao | first3=Yang | last4=Governatori | first4=Guido | last5=Rotolo | first5=Antonino | last6=Sartor | first6=Giovanni | journal=Annals of Mathematics and Artificial Intelligence | volume=83 | pages=21–71 | hdl=11585/644602 | hdl-access=free }} for example, refers to probability spaces where a sample space is a set of labellings of [[argumentation framework|argumentation graphs]]. In the framework of "probabilistic argumentation systems" (Kohlas, J., and Monney, P.A., 1995. ''[https://books.google.com/books?id=dqnwCAAAQBAJ A Mathematical Theory of Hints. An Approach to the Dempster–Shafer Theory of Evidence]''. Vol. 425 in Lecture Notes in Economics and Mathematical Systems. Springer Verlag; Haenni, R, 2005, "[http://www.sipta.org/isipta05/proceedings/papers/s007.pdf Towards a Unifying Theory of Logical and Probabilistic Reasoning]," ISIPTA'05, 4th International Symposium on Imprecise Probabilities and Their Applications: 193-202. {{cite web |url=http://www.iam.unibe.ch/~run/papers/haenni05d.pdf |title=Archived copy |accessdate=2006-06-18 |url-status=dead |archiveurl=https://web.archive.org/web/20060618205254/http://www.iam.unibe.ch/~run/papers/haenni05d.pdf |archivedate=2006-06-18 }}), probabilities are not directly attached to arguments or logical sentences. Instead, it is assumed that a particular subset W of the variables V involved in the sentences defines a [[probability space]] over the corresponding sub-[[σ-algebra]]. This induces two distinct probability measures with respect to V, which are called ''degree of support'' and ''degree of possibility'', respectively. Degrees of support can be regarded as non-additive ''probabilities of provability'', which generalize the concepts of ordinary logical [[entailment]] (for V={}) and classical [[posterior probability|posterior probabilities]] (for V=W). Mathematically, this view is compatible with the [[Dempster–Shafer theory]].

- The theory of [[evidential reasoning approach|evidential reasoning]]{{cite journal | doi=10.1016/0888-613X(92)90033-V |doi-access=free | title=Understanding evidential reasoning | date=1992 | last1=Ruspini | first1=Enrique H. | last2=Lowrance | first2=John D. | last3=Strat | first3=Thomas M. | journal=International Journal of Approximate Reasoning | volume=6 | issue=3 | pages=401–424 }} also defines non-additive ''probabilities of probability'' (or ''epistemic probabilities'') as a general notion for both logical [[entailment]] (provability) and [[probability]]. The idea is to augment standard [[propositional logic]] by considering an epistemic operator '''K''' that represents the state of knowledge that a rational agent has about the world. Probabilities are then defined over the resulting ''epistemic universe'' '''K'''''p'' of all propositional sentences ''p'', and it is argued that this is the best information available to an analyst. From this view, [[Dempster–Shafer theory]] appears to be a generalized form of probabilistic reasoning.
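The probabilistic entailment described in the first item of the list above, where sentences carry probabilities instead of truth values, can be illustrated with a probabilistic form of modus ponens. The following is a minimal sketch, assuming only two propositions A and B and a probability distribution over their four possible truth assignments; the function name `modus_ponens_bounds` and the input values are hypothetical. Given P(A) and P(A → B), the premises do not fix P(B) to a single value but constrain it to an interval.

```python
def modus_ponens_bounds(p_a, p_a_implies_b):
    """Bounds on P(B) given P(A) and P(A -> B), via possible worlds.

    Worlds are the truth assignments to (A, B): TT, TF, FT, FF.
    """
    for p in (p_a, p_a_implies_b):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    # A -> B is false only in world TF, so that world's mass is fixed.
    p_tf = 1.0 - p_a_implies_b
    # P(A) = P(TT) + P(TF), which fixes the mass of world TT.
    p_tt = p_a - p_tf
    if p_tt < -1e-12:
        raise ValueError("inconsistent: P(A) + P(A -> B) must be at least 1")
    p_tt = max(p_tt, 0.0)
    # The remaining mass 1 - P(A) may be split freely between FT and FF.
    free_mass = 1.0 - p_a
    # P(B) = P(TT) + P(FT), with P(FT) anywhere in [0, free_mass].
    return p_tt, p_tt + free_mass


low, high = modus_ponens_bounds(0.7, 0.9)
print(f"P(B) lies in [{low:.2f}, {high:.2f}]")

# With both premises certain, the interval collapses to a single point.
print(modus_ponens_bounds(1.0, 1.0))
```

When both premises have probability 1 the interval collapses to P(B) = 1, which matches the statement that probabilistic entailment reduces to ordinary entailment when all probabilities are 0 or 1. For larger sets of sentences the same bounds would generally be found by linear programming over the possible worlds, which this sketch does not attempt.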

==See also==
{{Div col|colwidth=25em}}

- [[Statistical relational learning]]

- [[Bayesian inference]], [[Bayesian network]], [[Bayesian probability]]

- [[Cox's theorem]]

- [[Fréchet inequalities]]

- [[Imprecise probability]]

- [[Non-monotonic logic]]

- [[Possibility theory]]

- [[Probabilistic database]]

- [[Probabilistic soft logic]]

- [[Probabilistic causation]]

- [[Uncertain inference]]

- [[Upper and lower probabilities]]
{{div col end}}

==References==
{{reflist}}

==Further reading==

- Adams, E. W., 1998. ''[https://philpapers.org/rec/ADAAPO-2 A Primer of Probability Logic]''. CSLI Publications (Univ. of Chicago Press).

- Bacchus, F., 1990. ''[https://dl.acm.org/citation.cfm?id=914650 Representing and Reasoning with Probabilistic Knowledge: A Logical Approach to Probabilities]''. The MIT Press.

- [[Rudolf Carnap|Carnap, R.]], 1950. ''Logical Foundations of Probability''. University of Chicago Press.

- [[Rolando Chuaqui|Chuaqui, R.]], 1991. ''[https://books.google.com/books?id=D5aCKWMtrtQC&dq=%22Truth%2C+Possibility+and+Probability%3A+New+Logical+Foundations+of+Probability+and+Statistical+Inference%22&pg=PP1 Truth, Possibility and Probability: New Logical Foundations of Probability and Statistical Inference]''. Number 166 in Mathematics Studies. North-Holland.

- Haenni, R., Romeijn, J. W., Wheeler, G., and Williamson, J., 2011. ''Probabilistic Logics and Probabilistic Networks''. Springer.

- Hájek, A., 2001, "Probability, Logic, and Probability Logic," in Goble, Lou, ed., ''The Blackwell Guide to Philosophical Logic'', Blackwell.

- Jaynes, E. T., 1998. ''Probability Theory: The Logic of Science''. [http://bayes.wustl.edu/etj/prob/book.pdf pdf]; Cambridge University Press, 2003.

- [[Henry E. Kyburg, Jr.|Kyburg, H. E.]], 1970. ''[https://philpapers.org/rec/KYBPAI Probability and Inductive Logic]''. Macmillan.

- Kyburg, H. E., 1974. ''[https://books.google.com/books?id=uFVtCQAAQBAJ&dq=%22The+Logical+Foundations+of+Statistical+Inference%22+kyburg&pg=PP8 The Logical Foundations of Statistical Inference]'', Dordrecht: Reidel.

- Kyburg, H. E. & C. M. Teng, 2001. ''[https://books.google.com/books?id=CicvkPZhohEC&dq=%22Uncertain+Inference%22+Teng&pg=PR11 Uncertain Inference]'', Cambridge: Cambridge University Press.

- Romeijn, J. W., 2005. ''Bayesian Inductive Logic''. PhD thesis, Faculty of Philosophy, University of Groningen, Netherlands. [http://www.philos.rug.nl/~romeyn/paper/2005_romeijn_-_thesis.pdf]

- Williamson, J., 2002, "Probability Logic," in D. Gabbay, R. Johnson, H. J. Ohlbach, and J. Woods, eds., ''[https://books.google.com/books?id=c-bthgk3DQAC&dq=%22Handbook+of+the+Logic+of+Argument+and+Inference%3A+The+turn+towards+the+practical%22&pg=PP1 Handbook of the Logic of Argument and Inference: the Turn Toward the Practical]''. Elsevier: 397–424.

== External links ==

- [https://web.archive.org/web/20070930031527/http://www.kent.ac.uk/secl/philosophy/jw/2006/progicnet.htm ''Progicnet'': Probabilistic Logic And Probabilistic Networks]

- [https://folk.universitetetioslo.no/josang/sl/ Subjective logic demonstrations]

- [http://www.sipta.org/ ''The Society for Imprecise Probability'']

[[Category:Probabilistic arguments|logic]] [[Category:Non-classical logic]] [[Category:Scientific method]] [[Category:Formal epistemology]]

From MOAI Insights

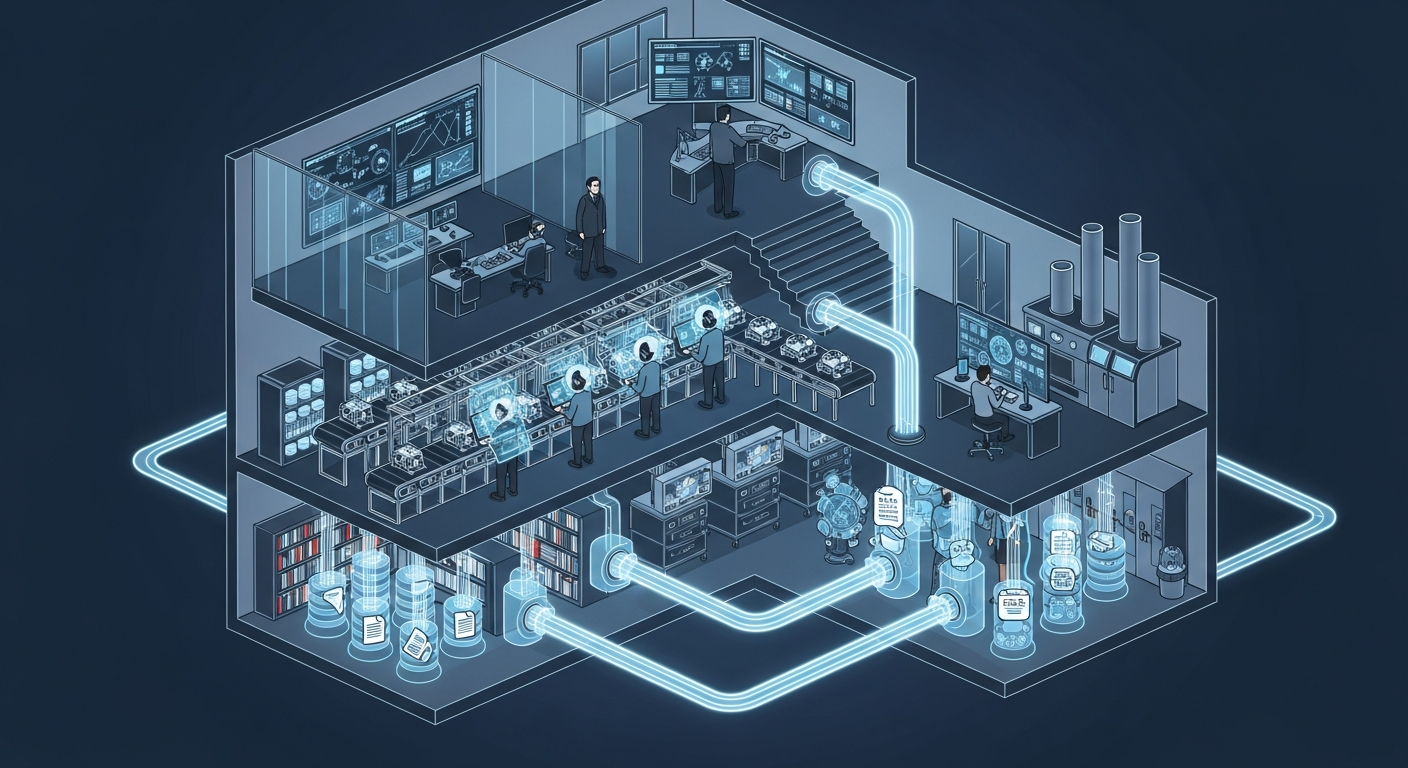

공장의 뇌는 어떻게 생겼는가 — 제조운영 AI 아키텍처 해부

지식관리, 업무자동화, 의사결정지원 — 따로 보면 다 있던 것들입니다. 제조 AI의 진짜 차이는 이 셋이 순환하면서 '우리 공장만의 지능'을 만든다는 데 있습니다.

그 30분을 18년 동안 매일 반복했습니다 — 품질팀장이 본 AI Agent

18년차 품질팀장이 매일 아침 30분씩 반복하던 데이터 분석을 AI Agent가 3분 만에 해냈습니다. 챗봇과는 완전히 다른 물건 — 직접 시스템에 접근해서 데이터를 꺼내고 분석하는 AI의 현장 도입기.

ERP 20년, 나는 왜 AI를 얹기로 했나

ERP 20년차 제조IT본부장의 고백: 3,200만 행의 데이터가 잠들어 있었다. ERP를 바꾸지 않고 AI를 얹자, 일주일 걸리던 불량 분석이 수 초로 줄었다.

Want to apply this in your factory?

MOAI helps manufacturing companies adopt AI tailored to their operations.

Talk to us →